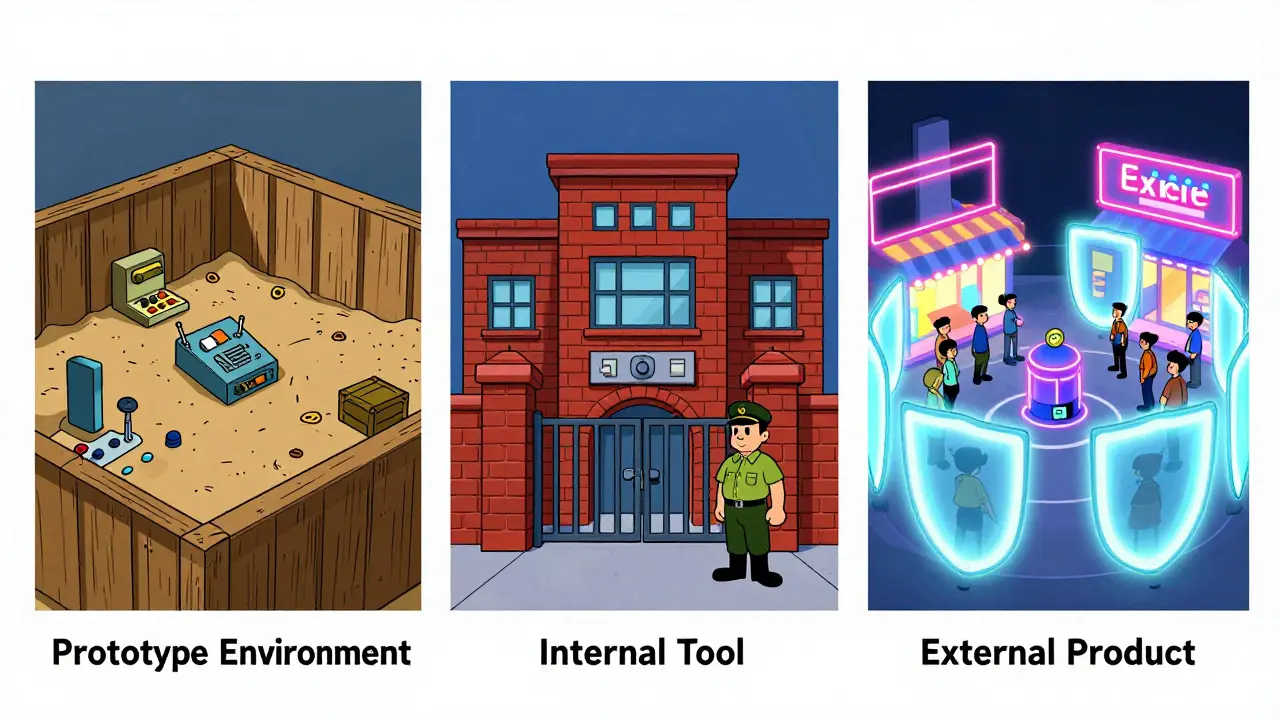

Risk-Based App Categories: Prototypes, Internal Tools, and External Products

Stop wasting budget on low-risk code. Learn how to classify software as a prototype, an internal tool, or an external product so that security effort matches each application's risk.