Here's something surprising about model compression: most teams pick their teacher model wrong from the start. They choose based on raw benchmark scores alone, ignoring whether that model's actual skills match their specific domain. When you're doing large language model distillation, the right teacher sets the ceiling for everything your student can learn, and if you miss this step, no amount of training tuning will fix the fundamental mismatch.

The good news? Research has clarified much of what makes a teacher model effective for particular tasks. Work presented at EMNLP 2022 showed that domain-specific data selection changes outcomes considerably, with students distilled on targeted data outperforming those distilled on mixed datasets. Let's break down how to select teachers that actually deliver results.

Knowledge Distillation Basics: What Actually Happens

Knowledge distillation is a compression technique where a smaller student model learns to replicate a larger teacher's predictions and behavior patterns. Think of it like a master craftsman teaching an apprentice: the master doesn't just show answers, but demonstrates how to think through problems. The student model learns from the teacher's outputs and confidence distributions across different scenarios.

This works through loss functions that align the student's probability distribution with the teacher's predictions. Kullback-Leibler (KL) divergence is commonly used as the measurement tool, helping capture nuances in how the teacher weighs different classes. The student effectively inherits the teacher's reasoning patterns, not just final answers.
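To make the loss concrete, here is a minimal sketch of a KL-based distillation loss in plain Python. The function names, the `temperature` parameter, and the temperature-squared scaling are assumptions brought in from common distillation practice, not details given in this article.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw logits into probabilities, softened by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions over the same classes."""
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # Scaling by T^2 is a common convention (an assumption here) that keeps
    # gradient magnitudes comparable across temperatures.
    return temperature ** 2 * kl_divergence(p_teacher, p_student)

teacher = [2.0, 1.0, 0.1]
close_student = [1.9, 1.0, 0.2]
far_student = [0.1, 1.0, 2.0]
print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

A student whose distribution is closer to the teacher's gets a lower loss, which is the signal that pushes it toward the teacher's relative weighting of classes rather than only the top answer.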

Why does this matter now? Teams deploy smaller models in resource-constrained environments: mobile devices, edge computing setups, low-latency applications. But deploying a small model trained from scratch gives mediocre results. Distillation lets you bring expert-level performance into constrained packages.

Why Your Teacher Choice Determines Everything

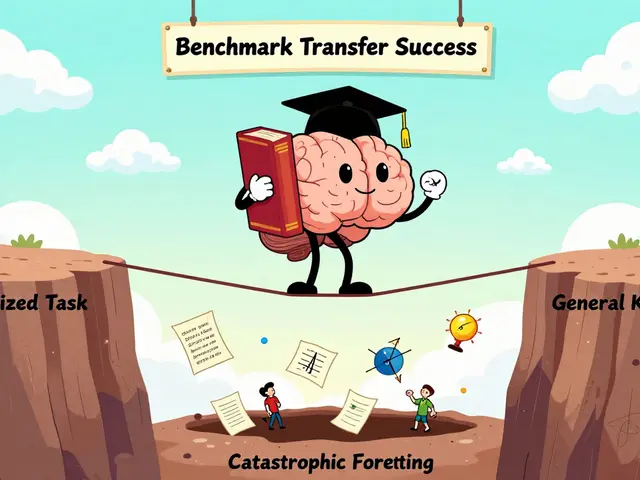

Technical guidance emphasizes one uncomfortable truth: the teacher establishes the accuracy ceiling for the student. You cannot extract capabilities that don't exist. A mediocre teacher means your best-performing student will still underperform, no matter how clever your training setup.

Recent theoretical advances uncovered something counterintuitive: a student model can sometimes match or exceed its teacher if its advantage on a Student-Favored Subdomain (SFS) outweighs deficits on the Teacher-Favored Subdomain (TFS). This matters because different subdomains require different teacher characteristics.
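One way to read this claim is as simple accuracy bookkeeping. The notation below is an illustrative assumption, not the definition used in the underlying paper: split the evaluation data into the two subdomains with shares $\pi_{\mathrm{SFS}} + \pi_{\mathrm{TFS}} = 1$, and write $a_S$ and $a_T$ for student and teacher accuracy on each.

```latex
\[
\mathrm{Acc}_S - \mathrm{Acc}_T
  = \underbrace{\pi_{\mathrm{SFS}}\bigl(a_S^{\mathrm{SFS}} - a_T^{\mathrm{SFS}}\bigr)}_{\text{student advantage}}
  - \underbrace{\pi_{\mathrm{TFS}}\bigl(a_T^{\mathrm{TFS}} - a_S^{\mathrm{TFS}}\bigr)}_{\text{student deficit}}
\]
```

With made-up numbers: if the SFS covers 30% of the data and the student leads 0.90 to 0.80 there, the advantage is $0.3 \times 0.10 = 0.03$. If the teacher leads 0.85 to 0.82 on the remaining 70%, the deficit is $0.7 \times 0.03 = 0.021$, so the student finishes ahead overall by 0.009.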

The implication? You need dynamic teacher selection that adapts as training progresses. Early-stage weak students need approximation ease, meaning teachers whose knowledge transfers easily. Advanced students near convergence benefit more from peak teacher performance. Static selection misses these critical differences.

Essential Teacher Selection Criteria

You need to evaluate potential teachers against four core dimensions:

- Accuracy on target task: Does the teacher excel specifically at your domain task? Generic capability rankings rarely predict distillation success. A model ranking high on MMLU benchmarks might struggle with medical documentation or legal contracts.

- Generalization across distributions: Robust performance on varied data signals reliable knowledge transfer. Well-calibrated confidence scores reflect true prediction reliability rather than overconfident noise.

- Architectural complexity gap: The teacher should have a meaningful size or capacity advantage over the student to justify the distillation process itself. Similar-sized models rarely provide a sufficient knowledge differential.

- Output format alignment: Your teacher's output structure must fit your distillation setup. Incompatible formats require preprocessing overhead that defeats efficiency gains.

Misaligned teacher-student pairs often show disappointing performance despite strong individual components.

| Factor | Early Training Phase Importance | Late Training Phase Importance |
|---|---|---|
| Approximation Ease | Critical | Low |
| Peak Performance | Medium | High |
| Domain Specificity | High | Very High |
| Confidence Calibration | Medium | High |

This table shows why static selection fails: you need to weigh factors differently throughout training cycles. Ignoring these temporal dynamics wastes valuable compute resources.
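The table can be turned into a rough scoring rule. The sketch below is hypothetical throughout: the numeric values assigned to "Low" through "Critical", the linear blend between early and late phases, and the candidate metrics are all illustrative assumptions rather than anything prescribed by the research discussed here.

```python
# Hypothetical numeric stand-ins for the table's qualitative labels.
IMPORTANCE = {"Low": 1.0, "Medium": 2.0, "High": 3.0, "Very High": 4.0, "Critical": 5.0}

# (early-phase label, late-phase label) for each factor in the table.
PHASE_TABLE = {
    "approximation_ease": ("Critical", "Low"),
    "peak_performance": ("Medium", "High"),
    "domain_specificity": ("High", "Very High"),
    "confidence_calibration": ("Medium", "High"),
}

def phase_weights(progress):
    """Blend early and late importance; progress runs from 0.0 (start) to 1.0 (end)."""
    if not 0.0 <= progress <= 1.0:
        raise ValueError("progress must be in [0, 1]")
    raw = {
        factor: (1 - progress) * IMPORTANCE[early] + progress * IMPORTANCE[late]
        for factor, (early, late) in PHASE_TABLE.items()
    }
    total = sum(raw.values())
    return {factor: value / total for factor, value in raw.items()}

def score_teacher(metrics, progress):
    """Weighted sum of a teacher's per-factor scores (each assumed to lie in [0, 1])."""
    weights = phase_weights(progress)
    return sum(weights[factor] * metrics[factor] for factor in weights)

def pick_teacher(candidates, progress):
    """Return the name of the highest-scoring candidate at this point in training."""
    return max(candidates, key=lambda name: score_teacher(candidates[name], progress))

candidates = {
    "easy_teacher": {"approximation_ease": 0.9, "peak_performance": 0.6,
                     "domain_specificity": 0.7, "confidence_calibration": 0.7},
    "strong_teacher": {"approximation_ease": 0.4, "peak_performance": 0.95,
                       "domain_specificity": 0.8, "confidence_calibration": 0.8},
}
print(pick_teacher(candidates, progress=0.0))
print(pick_teacher(candidates, progress=1.0))
```

With these made-up numbers the easier teacher wins at the start of training and the stronger one wins near the end, which is the shift the table describes. In practice the per-factor scores would come from your own evaluations.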

Timing Strategy: When Teachers Matter Most

Scheduled Checkpoint Distillation introduced a framework recognizing that optimal teacher selection evolves during training. Early phases prioritize selecting teachers where knowledge transfers easily to untrained students. As the student strengthens and approaches teacher capability, peak performance becomes the dominant metric.

Experimental results across multilingual tasks demonstrated consistent improvement using this adaptive approach. Students distilled with properly timed teacher selection matched or exceeded fine-tuned teacher performance across multiple tasks.

Consider two common mistakes:

- Selecting too early: Choosing based on pretraining performance before seeing domain-specific fine-tuning results often misses crucial domain expertise.

- Committing too late: Waiting until after student convergence locks in poor early choices that later corrections cannot fully overcome.

The practical solution involves staged evaluation: assess candidates during initial training, then reassess once the student shows measurable progress before finalizing commitments.

Domain Adaptation: Data Quality Trumps Generic Scale

Amazon Science research at EMNLP 2022 clarified a critical finding: distilling over target domain data provides substantially better performance than relying solely on teacher knowledge. Three data ratio configurations were tested:

- Generic-only baseline (poor results)

- Mixed 7:3 generic-to-task-specific ratio (moderate improvement)

- Task-specific-only approaches (best outcomes)

Even mixed data helped significantly compared to training from scratch, but task-specific-only distillation produced superior students.
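The three configurations above differ only in how much of the distillation set comes from the generic pool. A minimal sketch of building such a mix follows. The function name, sampling with replacement, and the toy example strings are assumptions for illustration and do not describe how the cited study constructed its data.

```python
import random

def build_distillation_mix(generic, task_specific, generic_share, size, seed=0):
    """Sample `size` examples, with `generic_share` of them from the generic pool."""
    if not 0.0 <= generic_share <= 1.0:
        raise ValueError("generic_share must be in [0, 1]")
    n_generic = round(size * generic_share)
    n_task = size - n_generic
    if n_generic and not generic:
        raise ValueError("generic pool is empty")
    if n_task and not task_specific:
        raise ValueError("task-specific pool is empty")
    rng = random.Random(seed)
    # Sampling with replacement keeps the sketch simple for small pools.
    mix = [rng.choice(generic) for _ in range(n_generic)]
    mix += [rng.choice(task_specific) for _ in range(n_task)]
    rng.shuffle(mix)
    return mix

generic_pool = [f"generic-{i}" for i in range(50)]
task_pool = [f"task-{i}" for i in range(50)]

configs = {"generic_only": 1.0, "mixed_7_3": 0.7, "task_only": 0.0}
for name, share in configs.items():
    mix = build_distillation_mix(generic_pool, task_pool, share, size=10)
    n_generic = sum(example.startswith("generic") for example in mix)
    print(name, n_generic, len(mix) - n_generic)
```

Keeping the ratio as a single parameter makes it cheap to run all three configurations against the same teacher and compare the resulting students on held-out task data.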

More importantly, timing matters: pre-adapting the teacher to the target domain before distillation produces the best students compared to adapting only the student afterward. This upfront investment compounds returns across the entire distillation process.

Different domains require different teacher characteristics. Medical text needs domain terminology precision. Legal documents require consistency in formal language patterns. Customer service applications prioritize conversational fluency over technical accuracy. Match the teacher's specialty to your specific domain needs.

Emerging Collaborative Approaches

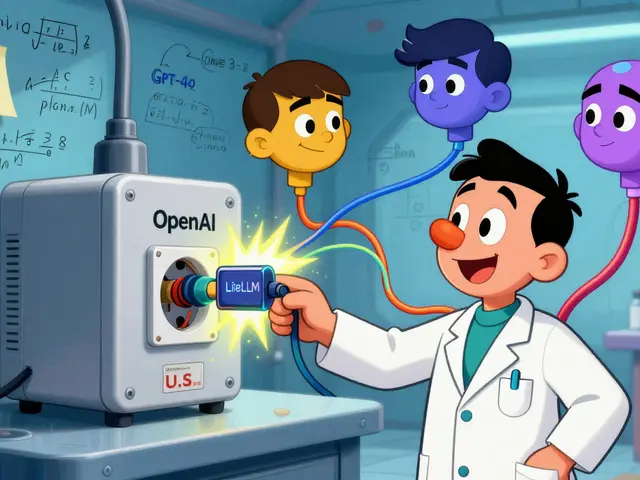

Multi-teacher frameworks represent the frontier of distillation research. Instead of single teachers, these approaches employ different experts for different features. Hugging Face documentation notes several strategies:

- Average predictions across multiple teachers

- Random teacher selection per instance

- Feature-specific teacher assignment
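The three strategies listed above can be sketched in a few lines each. The function names and the routing table are hypothetical, and treating "feature-specific assignment" as a lookup from a feature tag to a teacher is only one possible reading of that strategy.

```python
import random

def average_targets(teacher_probs):
    """Strategy 1: element-wise mean of every teacher's class probabilities."""
    if not teacher_probs:
        raise ValueError("need at least one teacher")
    count = len(teacher_probs)
    return [sum(column) / count for column in zip(*teacher_probs)]

def random_target(teacher_probs, rng):
    """Strategy 2: use one randomly chosen teacher for this training instance."""
    return rng.choice(teacher_probs)

def routed_target(probs_by_teacher, teacher_for_feature, feature):
    """Strategy 3: use whichever teacher is assigned to this feature or skill."""
    return probs_by_teacher[teacher_for_feature[feature]]

# Hypothetical per-example outputs from two teachers over two classes.
probs_by_teacher = {
    "syntax_teacher": [0.8, 0.2],
    "reasoning_teacher": [0.4, 0.6],
}
teacher_for_feature = {"grammar": "syntax_teacher", "logic": "reasoning_teacher"}

all_probs = list(probs_by_teacher.values())
print(average_targets(all_probs))
print(random_target(all_probs, random.Random(0)))
print(routed_target(probs_by_teacher, teacher_for_feature, "logic"))
```

Whichever strategy is used, the resulting vector simply takes the place of the single teacher's distribution as the student's training target.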

Multi-teacher Collaborative Distillation frameworks published in July 2025 show promising results for complex multi-domain tasks. Vocabulary-agnostic approaches from March 2025 handle cross-lingual scenarios effectively.

Curriculum-driven instruction tuning methods like CITING from Feng et al. (2023) structure learning progression systematically. Teacher-driven reinforcement learning agent distillation extends these principles to autonomous systems. The field is actively evolving with weekly publications introducing refined methodologies.

The CanDist framework introduces a Distribution Refinery mechanism that dynamically adjusts training targets based on student predictions. This addresses cases where students develop partial understanding that requires different teacher scaffolding.

Snorkel AI emphasizes incorporating weak supervision and labeling functions to accelerate deployment in specialized domains. Their approach treats distillation as part of broader production pipeline optimization rather than isolated model compression.

Practical Implementation Framework

Ready to implement? Start with these concrete steps:

- Audit candidate teachers: Run domain-specific evaluations on each potential teacher using your actual task data, not generic benchmarks.

- Measure calibration quality: Verify confidence scores correlate with actual accuracy through calibration curves.

- Test approximation ease: Run preliminary distillation with minimal training to identify which teachers transfer knowledge most effectively.

- Select primary teacher: Choose based on combined metrics weighted for your training stage (early = approximation ease, late = peak performance).

- Prepare domain-adapted data: Prioritize task-specific training examples over generic corpora during distillation.

- Implement adaptive weighting: Use sample-wise mechanisms to preserve student strengths on favorable subdomains while addressing weaknesses.

Track these specific metrics throughout: student accuracy trajectory, teacher-student confidence alignment, and domain-specific task performance. Expect iteration, since a perfect match rarely occurs on the first attempt.
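For the calibration step in the list above, one common summary number is expected calibration error (ECE). The article itself only mentions calibration curves, so ECE, the equal-width binning, and the default of ten bins are assumptions in this sketch.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy within each bin."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        # Equal-width bins over [0, 1]; a confidence of exactly 1.0 goes in the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, hit))
    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, hit in bucket if hit) / len(bucket)
        ece += (len(bucket) / total) * abs(mean_conf - accuracy)
    return ece

# A teacher that claims 90% confidence but is right only half the time.
confidences = [0.9] * 10
correct = [True] * 5 + [False] * 5
print(expected_calibration_error(confidences, correct))
```

A value near zero means stated confidence tracks observed accuracy. Larger values suggest the teacher's soft labels may be passing overconfident or underconfident signals to the student.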

Frequently Asked Questions

Can student models ever outperform their teachers?

Yes, under specific conditions. When students have advantages on Student-Favored Subdomains that outweigh Teacher-Favored Subdomain deficits, they can match or exceed teacher performance. Scheduled Checkpoint Distillation achieves this by systematically reducing TFS deficit through emulating teacher convergence during supervised fine-tuning. The key is having well-aligned strengths rather than overall superiority.

How do I know if a teacher is suitable for my domain?

Evaluate teachers on your actual task data, not generic benchmarks. Check for: 1) Strong accuracy on domain-specific examples, 2) Well-calibrated confidence scores reflecting true reliability, 3) Good generalization across your data distribution variations. Test preliminary distillation with minimal training to verify knowledge transfer ease before committing to full implementation.

When should I adapt the teacher versus adapting only the student?

Pre-adapting the teacher to the target domain before distillation consistently produces better students according to Amazon Science research. This upfront investment creates stronger knowledge foundations. Adapting only the student afterward is cheaper but yields inferior results because the student lacks domain-grounded guidance during critical early learning phases.

What's the difference between generic and domain-specific distillation data?

Generic data covers broad topics with diverse examples. Domain-specific data focuses intensively on your particular task requirements. EMNLP 2022 research showed that task-specific-only approaches substantially outperformed both mixed 7:3 generic-to-task ratios and generic-only baselines. Even with mixed data, distilled students significantly outperformed similar-sized models trained from scratch, but domain-specific data yielded the best results.

Should I use one teacher or multiple teachers for distillation?

Multi-teacher approaches offer benefits for complex multi-domain tasks. Options include averaging predictions across teachers, random selection per instance, or feature-specific assignments. However, single high-quality domain-matched teachers often suffice for focused applications. Multi-teacher frameworks add complexity; start simple unless you need specialized capabilities from different teacher specialties.

How much compute time does teacher pre-adaptation require?

Pre-adaptation costs vary by domain and teacher size, but it typically requires fine-tuning the teacher on 1,000-10,000 task-specific examples, depending on specialization level. While this adds upfront compute overhead, the improved student quality typically reduces downstream inference costs substantially. For production deployments handling thousands of daily requests, the initial investment pays back quickly through better model performance.

What metrics should I track during distillation?

Monitor three key metrics: student accuracy trajectory over training epochs, teacher-student confidence alignment measured via KL divergence, and domain-specific task performance on held-out test sets. Additionally track approximation ease early in training and peak performance proximity in later stages. These indicators reveal whether your teacher selection strategy is working or needs adjustment.

Most teams ignore the real bottleneck which isn't the model architecture at all. They focus too much on KL divergence instead of data hygiene upstream. If your training set is garbage the student will hallucinate regardless of the teacher used. Benchmark scores are vanity metrics anyway. Stop optimizing for metrics that don't matter for deployment latency. Real world inference costs kill most projects before accuracy does.

It's really cool to see people finally talking about domain adaptation in distillation processes. I remember trying to train a medical bot using general models last year and failing hard. Matching the teacher skills to the specific subdomain makes such a huge difference practically. It's good you mentioned the timing aspect of training phases too. Early stage students need easier signals to latch onto while later stages need peak precision. We often skip that dynamic adjustment part completely. Hope more teams read this guide before they waste compute credits.

The distinction between Student-Favored Subdomain and Teacher-Favored Subdomain is poorly articulated here. It lacks the rigorous definition required for actual implementation without ambiguity. Your statement regarding approximation ease requires further empirical validation to be accepted. Many practitioners ignore the calibration curves entirely due to computational overhead. This creates a false sense of security during the initial rollout phase. Confidence intervals must be narrow enough to justify automated decision making. Otherwise you are just guessing with confidence. Please provide the source code for the scheduled checkpoint mechanism described.

Your assertion regarding empirical validation is incorrect and misleading to novices. We have sufficient literature supporting the temporal dynamics of teacher utility without needing new experiments. The terminology used throughout the article is precise enough for engineering applications. You seem to misunderstand the fundamental mechanics of probability distribution alignment. Confidence scores are indeed noisy if calibration is neglected but that is a known issue. Ignoring this reality leads to catastrophic failure rates in production environments. We must maintain strict standards for documentation and claim substantiation. Your criticism suggests a lack of familiarity with the field. Please refrain from gatekeeping basic methodologies to junior engineers. The community benefits from shared knowledge not constant skepticism without evidence.

Wow this is exactly what i have been looking for lately in terms of optimization techniques

I love how the post breaks down the teacher selection criteria step by step

We always rush into picking the biggest model thinking it helps the most but actually size matters less than alignment

The part about early vs late training phase adjustments is super interesting

I never thought about changing the teacher strategy as the student gets smarter

It makes total sense that weak students need simpler knowledge transfer first

Then they graduate to harder concepts once they build the foundation properly

Using mixed datasets definitely hurts performance compared to targeted data alone

I tried that once and the results were surprisingly poor despite high loss numbers

Multi teacher frameworks sound like the next big thing for complex tasks

Imagine having one teacher for syntax and another for reasoning capabilities

That would cover so many blind spots we face with single model setups

People really need to stop chasing generic benchmarks like MMLU blindly

Domain specific metrics should drive the selection process entirely

Cant wait to test these adaptive weighting strategies in my own stack

Thanks for sharing this deep dive on distillation logic

This saves us from wasting weeks on bad teacher choices for sure

Honestly the timeline for convergence improves massively with the right partner

Just gotta make sure the output formats align perfectly beforehand

Otherwise the preprocessing overhead kills all the gains you made earlier

Sure pick the perfect teacher and magically everything works like magic.

The investment required for pre-adaptation does pay off over time according to recent studies. Small teams might find it daunting initially but the long term savings are undeniable. It is possible to start small with task specific examples before scaling up complexity. Many groups overlook the compounding returns of quality data curation during setup. Patience yields better results than rushing through the fine-tuning stages. Consistent evaluation keeps the project aligned with business goals effectively. Keep experimenting even if early metrics look flat or confusing. Persistence is key when building robust student models for production use.

Big Tech companies are definitely hiding the real reason their models fail in the wild. They push generic benchmarks because domain specific testing exposes their weaknesses in niche areas. Amazon Science research was probably funded to sell cloud compute usage rather than improve AI safety. Everyone talks about distillation efficiency but nobody mentions the carbon footprint of pre-adapting teachers. The narrative focuses on model compression while ignoring the energy costs involved. Data selection is just a cover for filtering out sensitive information from training sets. We should question why certain domains get better results with proprietary methods. Transparency in distillation pipelines is clearly lacking across all major providers.

hey i see your point bout data hygiene being important

but sometimes the model architecture just isnt enough to save a bad pipeline

i think we should try both approaches before giving up

the kullback-leibler divergence stuff is tricky to debug tho

glad u posted those thoughts on the benchmarks though

really helps to keep things grounded in practical reality

just dont forget to check ur gradients while doing all this

hope your team gets a win soon with their new model

keep grinding hard out there everyone

we all learn from mistakes in this field together

They want you to believe the math fixes everything when really the data is rigged

You see how they talk about benchmarks now

Its all a control tactic to keep us buying GPUs and tokens

My friend tried this method last week and his server crashed hard

Something is wrong with the trust in these public teacher models

Check the logs yourself and tell me what you find then

Its getting worse everyday with all the new releases coming out

Dont trust the automated systems telling you what to do

We need to wake up to the truth about machine learning efficiency

This industry hides the real failures behind fancy words and tables