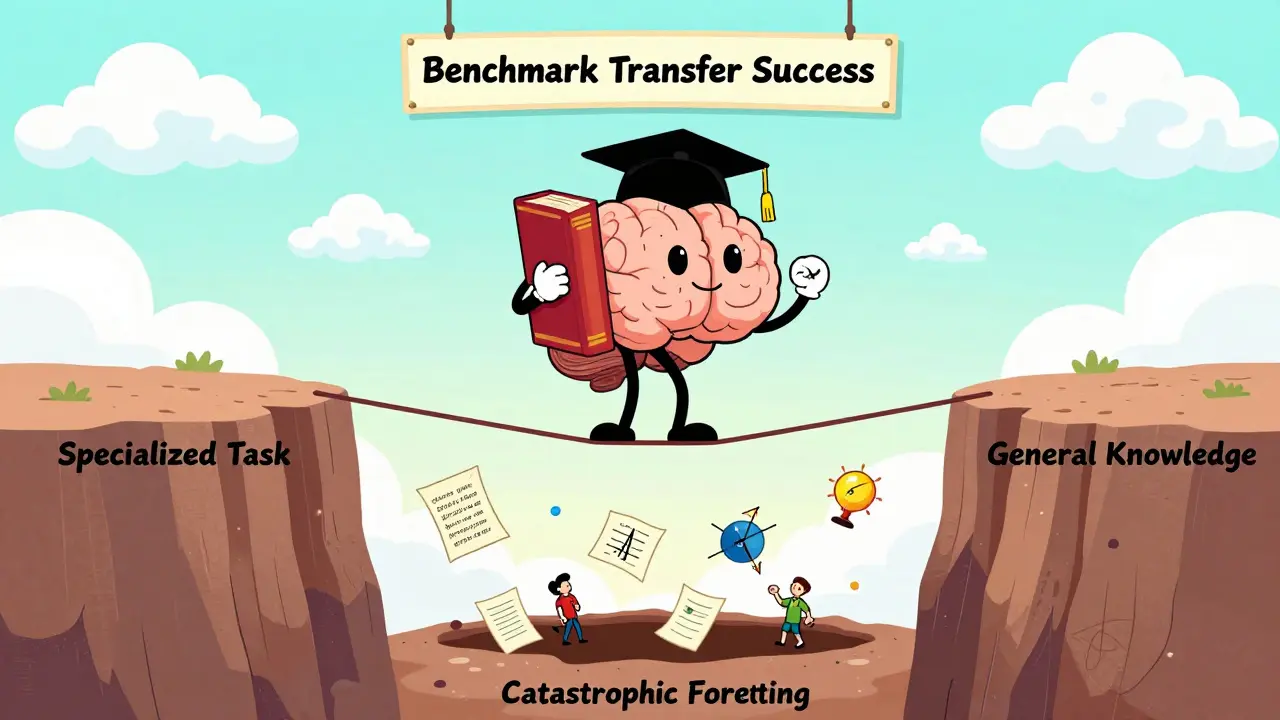

When you fine-tune a large language model like GPT-4 or Llama 3 to write legal briefs or answer medical questions, you expect it to get better at that one job. But what happens to everything else it knows? Does it forget how to write a poem, explain quantum physics, or chat like a human? This is the real test of fine-tuning: benchmark transfer.

Most people think fine-tuning is just about making a model smarter at a specific task. It’s not. It’s about making it smarter without breaking what it already does well. That’s the tightrope walk every engineer faces when they train a model on a new dataset. And if the model loses its general knowledge along the way, you’ve got a dangerous tool - one that’s good at one thing and clueless at everything else.

Why Benchmark Transfer Matters More Than You Think

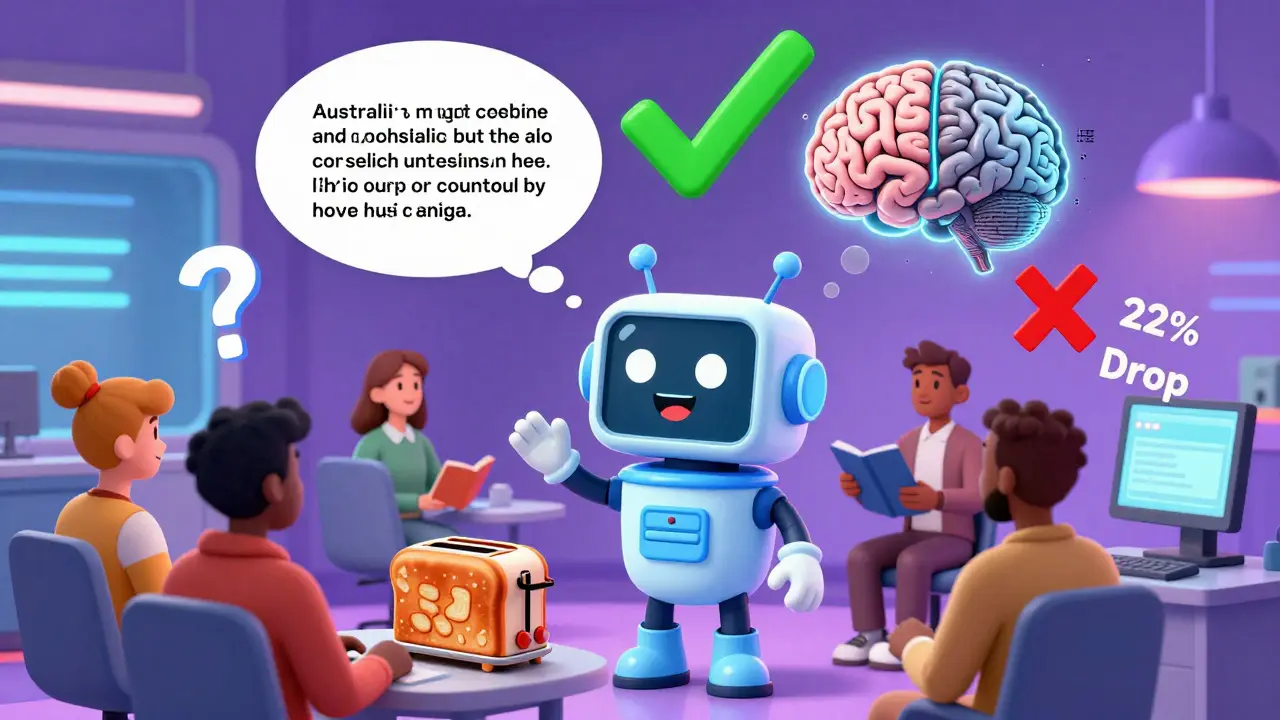

Imagine you’re building a customer support bot for an e-commerce company. You fine-tune it on 10,000 past chat logs. It becomes amazing at refund requests. But then, a user asks: “What’s the capital of Australia?” and the bot says, “I don’t know.” That’s not a bug. That’s catastrophic forgetting - a well-documented failure where a model loses access to knowledge it had before fine-tuning.

Real-world models don’t live in isolation. They’re used across multiple interfaces: chat, email, APIs, voice assistants. If a model can’t handle a simple factual question after being trained on a specialized task, it’s unreliable. That’s why benchmark transfer isn’t just an academic concern - it’s a deployment risk.

Studies from Anthropic and from Stanford researchers report that models fine-tuned without safeguards can lose up to 30% of their performance on general language tasks like those in MMLU (Massive Multitask Language Understanding), a multiple-choice benchmark spanning 57 subjects. That’s not a small drop. It’s the difference between a tool you can trust and one that needs constant human oversight.

How Fine-Tuning Works (Without the Jargon)

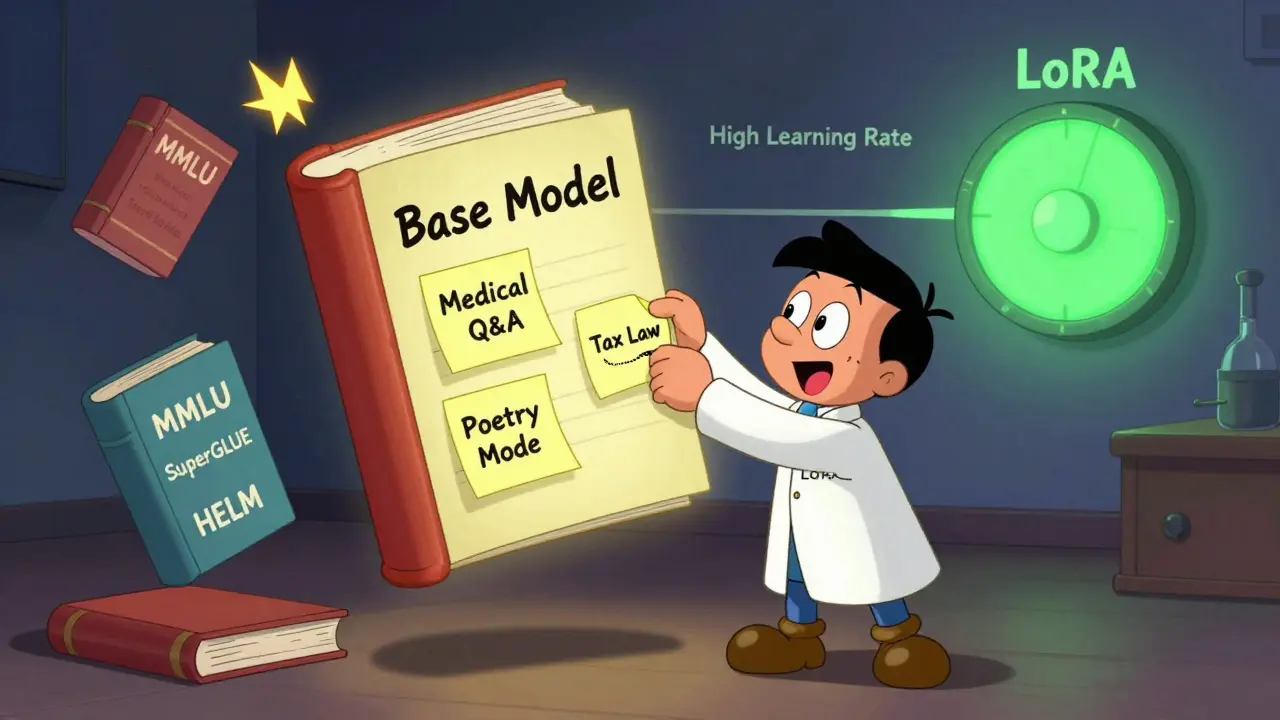

Think of a pre-trained LLM as a student who’s read every book in the library. They’ve learned grammar, logic, history, science - everything. Fine-tuning is like giving them a crash course in one subject: say, tax law.

You don’t re-teach them everything. You give them a few textbooks (labeled examples), let them practice, and adjust their internal settings just enough to get better at tax questions. The goal? Keep their broad knowledge intact while sharpening their tax skills.

But here’s the problem: if you give them too many practice tests, or if you push them too hard with high learning rates, they start overwriting their old knowledge. Their brain starts treating tax law as the only thing that matters. That’s when transfer breaks.

The Hidden Techniques That Preserve General Knowledge

There are smart ways to avoid this. Three methods dominate real-world use today.

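- LoRA (Low-Rank Adaptation): Instead of changing all the model’s weights, LoRA freezes them and adds a pair of small, trainable low-rank matrices alongside selected weight matrices, like sticky notes on a textbook. Typically well under 1% of parameters are trained; the exact fraction depends on the rank and on which layers get adapters. The original weights stay untouched, so removing the adapter gives you back the base model exactly.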

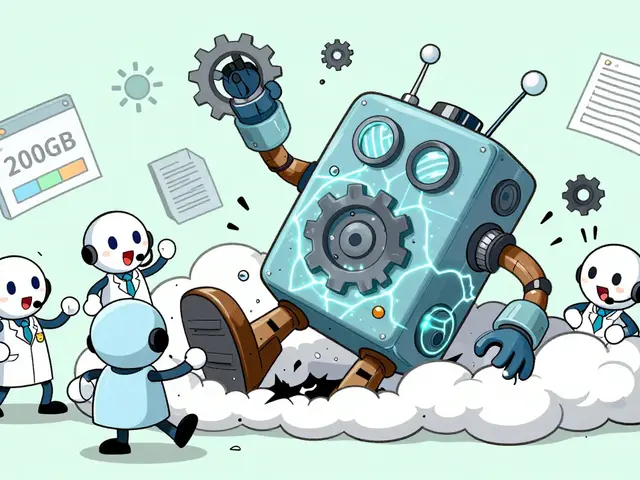

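- QLoRA: This is LoRA with memory compression. The LoRA adapters are trained on top of a base model quantized to 4 bits, so it uses far less GPU memory. That makes it possible to fine-tune a model with tens of billions of parameters on a single high-memory GPU (the QLoRA paper fine-tuned a 65B model on one 48 GB card). And because the base model’s weights stay frozen, its general abilities are much better protected.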

- Adapter Fusion: Instead of one fine-tuned version, you train multiple specialized adapters (like different skills) on the same frozen base model. You can switch between them per request (legal question? Load the legal adapter; poetry? Switch to the creative one) or, as in the AdapterFusion method, learn a small fusion layer that blends their outputs. Either way, the base model never changes.
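To make the sticky-note idea concrete, here is a minimal pure-Python sketch of a LoRA-adapted linear layer. It assumes a single weight matrix W and follows the formulation from the LoRA paper, y = W x + (alpha / r) * B A x, with A initialized to small random values and B to zero. The class and function names are illustrative, not any library’s API; real implementations such as Hugging Face’s PEFT do the same thing with GPU tensors.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class LoRALinear:
    """Illustrative LoRA layer: y = W x + (alpha / r) * B (A x).

    W is the frozen pre-trained weight; only A and B would be trained.
    """

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        self.weight = weight  # frozen: forward() reads it, nothing writes it
        d_out, d_in = len(weight), len(weight[0])
        self.scale = alpha / rank
        # A: small random values (r x d_in); B: zeros (d_out x r).
        # With B = 0 the adapter contributes nothing until training updates it.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)              # what the original model computes
        delta = matvec(self.B, matvec(self.A, x))  # low-rank correction
        return [b + self.scale * d for b, d in zip(base, delta)]


W = [[1.0, 2.0], [3.0, 4.0]]
layer = LoRALinear(W, rank=1)
print(layer.forward([1.0, 1.0]))  # [3.0, 7.0]: identical to W x before training
```

Because B starts at zero, the adapted layer initially reproduces the base model exactly, and training only ever changes A and B, so W itself is never modified.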

These aren’t theoretical. Companies like Hugging Face and Mistral AI use LoRA and QLoRA in production. One startup in Portland reduced their fine-tuning costs by 90% using QLoRA and saw zero drop in performance on general benchmarks like SuperGLUE.
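Most of QLoRA’s savings come from simple arithmetic on the frozen base weights. The sketch below estimates weight memory only, at 16, 8, and 4 bits per parameter. It deliberately ignores activations, adapter optimizer state, and the small overhead of quantization constants, so treat the numbers as a lower bound rather than a hardware requirement.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory for the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


params_70b = 70e9
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(params_70b, bits):.0f} GB")
# 16-bit: 140 GB
# 8-bit: 70 GB
# 4-bit: 35 GB
```

At 4 bits, a 70B model’s weights come to roughly 35 GB, which is why they can fit on a single 48 GB card with some room left for adapters and activations, while the same weights in 16-bit would need more than one 80 GB GPU.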

How to Test for Benchmark Transfer

You can’t just assume your model still works. You have to test it.

Here’s a simple checklist every team should follow after fine-tuning:

- Run the model on a general benchmark like MMLU or HELM (Holistic Evaluation of Language Models). These include questions on history, ethics, math, and science.

- Test on domain-specific tasks you fine-tuned for. Compare performance before and after.

- Ask it open-ended questions outside the domain you fine-tuned for: “Explain how a blockchain works using a metaphor about baking bread.”

- Try edge cases: “What’s the opposite of silence?” or “Write a haiku about a toaster.”

If the model retains less than 85% of its pre-fine-tuning score on general tasks, you’ve got a problem. Reduce the number of training epochs, lower the learning rate, or switch to LoRA.
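The 85% rule of thumb is easy to automate. This is a minimal sketch, assuming you already have per-benchmark scores (for example, MMLU accuracy) from your evaluation harness for both the base and the fine-tuned model. The function names are hypothetical, and the scores in the example are placeholders, not real results.

```python
RETENTION_THRESHOLD = 0.85  # keep at least 85% of the base model's general score


def retention(before, after):
    """Fraction of the pre-fine-tuning score that survives fine-tuning."""
    return after / before


def transfer_report(general_before, general_after, threshold=RETENTION_THRESHOLD):
    """Return {benchmark: retention} for every general benchmark below threshold."""
    flagged = {}
    for bench, before in general_before.items():
        r = retention(before, general_after[bench])
        if r < threshold:
            flagged[bench] = round(r, 3)
    return flagged


# Placeholder scores for illustration only (not real measurements).
base_scores = {"MMLU": 0.62, "SuperGLUE": 0.80}
tuned_scores = {"MMLU": 0.49, "SuperGLUE": 0.78}

flagged = transfer_report(base_scores, tuned_scores)
if flagged:
    # Remedies from the checklist: fewer epochs, lower learning rate, or LoRA.
    print("Transfer regression:", flagged)  # Transfer regression: {'MMLU': 0.79}
```

Running this after every training job, rather than once at the end of a project, is what turns the checklist into a guardrail.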

What Happens When You Ignore Benchmark Transfer

There are real consequences.

A healthcare startup in 2025 fine-tuned a model on patient intake forms. It reached 98% accuracy at extracting symptoms. But when patients asked, “Is this medication safe during pregnancy?” the model gave a wrong answer, because it had forgotten basic medical knowledge. The result? A lawsuit, a public apology, and a shutdown.

Another company used fine-tuned models for news summarization. After training, the model started hallucinating dates and names in headlines. Why? Because it was overfitting to one news source and lost its ability to generalize across publications.

These aren’t edge cases. They’re predictable. And they’re avoidable.

Tools That Make Benchmark Transfer Easier

You don’t need to build this from scratch. Here are the most reliable tools used by practitioners in 2026:

- Hugging Face Transformers: The most widely used library. LoRA and QLoRA support comes through its companion PEFT library, and it works with evaluation tools such as EleutherAI’s lm-evaluation-harness.

- Axolotl: A config-driven fine-tuning toolkit that is approachable for non-experts. It can be set up to run benchmark evaluations as part of training.

- trl (Transformer Reinforcement Learning): Lets you combine fine-tuning with reward modeling to keep outputs both accurate and general.

- DeepSpeed: Optimizes memory use during training, which helps when fine-tuning large models on limited GPU memory.

One engineer in Austin told me they used Axolotl to fine-tune a model on customer feedback, then ran a single command to auto-test transfer. It flagged a 22% drop in MMLU scores. They adjusted the learning rate from 2e-5 to 5e-6 - and performance bounced back.

The Future: More Than Just Accuracy

The next frontier isn’t just about keeping general knowledge. It’s about measuring fairness after fine-tuning.

Models fine-tuned on biased data (like customer service logs from one region) can become worse at handling diverse voices. New benchmarks like FAIR-LLM and DEBIAS-TEXT now measure not just accuracy, but whether a model treats all users equally after training.

For example, a model fine-tuned on U.S. legal documents might perform poorly on Canadian law queries - not because it forgot, but because it never learned them. Benchmark transfer now includes cross-cultural and cross-lingual tests.

By 2026, top AI teams are running weekly transfer audits. They don’t just ask: “Is it accurate?” They ask: “Is it still human?”

What is benchmark transfer in LLM fine-tuning?

Benchmark transfer is the ability of a large language model to maintain its general language understanding after being fine-tuned for a specific task. For example, if a model learns to answer medical questions, it should still be able to explain history, write poetry, or answer geography questions. If it can’t, it’s suffered catastrophic forgetting.

Does fine-tuning always hurt a model’s general knowledge?

No. Fine-tuning doesn’t automatically hurt general knowledge, but it often does if done poorly. Using high learning rates, long training times, or full-parameter updates increases the risk. Methods like LoRA, QLoRA, and adapter fusion are designed to reduce this risk by training only a small set of added parameters while the base model’s weights stay frozen.

How do I know if my fine-tuned model still works for general tasks?

Test it on standard benchmarks like MMLU, HELM, or SuperGLUE. Compare its score before and after fine-tuning. If the score drops by more than 10-15% relative to the original, you’ve lost too much general ability. Also test with open-ended questions unrelated to your target task, like asking it to write a joke or explain a scientific concept.

Is LoRA better than full fine-tuning?

For most use cases, yes. In the original LoRA paper, adapting GPT-3 175B required roughly 10,000 times fewer trainable parameters than full fine-tuning; the exact ratio depends on model size, rank, and which layers are adapted. LoRA is faster, cheaper, and preserves base model knowledge better. It’s now a common default for companies fine-tuning models for consumer-facing applications. Full fine-tuning is mainly worth considering when you’re training on a completely new domain with little overlap with the pre-training data.
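The parameter savings depend on matrix shapes and rank. For one weight matrix of shape d_out x d_in, full fine-tuning updates d_out * d_in values, while LoRA trains r * (d_out + d_in). The sketch assumes a square 4096 x 4096 matrix (the hidden size of Llama 2 7B) purely for illustration.

```python
def full_params(d_out, d_in):
    """Entries updated when the whole matrix is fine-tuned."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Entries in LoRA's B (d_out x r) plus A (r x d_in)."""
    return rank * (d_out + d_in)


d = 4096
for r in (8, 64):
    full, lora = full_params(d, d), lora_params(d, d, r)
    print(f"rank {r}: {lora:,} vs {full:,} trainable ({full // lora}x fewer)")
# rank 8: 65,536 vs 16,777,216 trainable (256x fewer)
# rank 64: 524,288 vs 16,777,216 trainable (32x fewer)
```

Per matrix, the ratio works out to d / (2r). Whole-model figures like the paper’s 10,000x are larger because LoRA is usually applied to only some weight matrices while full fine-tuning updates all of them, and because bigger models have wider matrices.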

Can I use fine-tuning for multiple tasks at once?

Yes - but not by fine-tuning one model on all tasks together. That usually causes interference. Instead, use adapter fusion: train separate adapters for each task (e.g., one for customer service, one for legal analysis) and switch between them dynamically. This keeps each skill sharp without corrupting others.
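Here is a toy sketch of the two ways to use several adapters on one frozen base layer: switching (apply one adapter) and blending (mix several with weights). It assumes LoRA-style adapters stored as (B, A) pairs and uses fixed, hand-picked mixing weights. The published AdapterFusion method instead learns the mix with an attention layer over bottleneck adapters, so treat this as a simplified stand-in; the adapter names and values are hypothetical.

```python
def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def adapter_delta(adapter, x):
    """Low-rank update B (A x) for one adapter stored as (B, A)."""
    B, A = adapter
    return matvec(B, matvec(A, x))


def forward(base_weight, adapters, x, mix):
    """mix maps adapter name -> weight; {"legal": 1.0} means 'switch to legal'."""
    out = matvec(base_weight, x)  # frozen base model output
    for name, w in mix.items():
        delta = adapter_delta(adapters[name], x)
        out = [o + w * d for o, d in zip(out, delta)]
    return out


# Hypothetical rank-1 adapters for a 2x2 base layer (values are arbitrary).
base = [[1.0, 0.0], [0.0, 1.0]]
adapters = {
    "support": ([[1.0], [0.0]], [[1.0, 0.0]]),
    "legal": ([[0.0], [1.0]], [[0.0, 1.0]]),
}
x = [2.0, 3.0]
print(forward(base, adapters, x, {"support": 1.0}))              # [4.0, 3.0]
print(forward(base, adapters, x, {"legal": 1.0}))                # [2.0, 6.0]
print(forward(base, adapters, x, {"support": 0.5, "legal": 0.5}))  # [3.0, 4.5]
```

Because each adapter lives in its own small (B, A) pair, adding a new skill never touches the base weights or the other adapters.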

Been running QLoRA on our legal bot for months now. No drop in MMLU scores, and we cut training time from 48 hours to under 6. The real win? No more midnight panic when a user asks about the capital of Finland and the bot freezes.

As someone who works with small teams in India, I find LoRA a game-changer. We don’t have GPU clusters. With QLoRA, I fine-tune on a laptop with a 3060. Base model stays intact. No magic, just smart engineering.

I just want to say… thank you. Seriously. This post made me feel less alone. I’ve been terrified that fine-tuning would erase the soul of these models. Like, what if they stop being curious? What if they stop being… human? I’ve been testing with haikus and jokes, and honestly? When it writes a good one, I cry a little. It’s not just accuracy. It’s presence. And LoRA? It keeps that presence alive. <3

Oh, so you’re telling me that mere mortals are now fine-tuning 70B models on consumer GPUs? How quaint. The real experts? They’re not tinkering with LoRA like it’s a LEGO set; they’re retraining from scratch on curated, cross-lingual, multi-modal corpora with differential privacy layers and adversarial validation loops. You’re not preserving knowledge; you’re just patching a leak with duct tape and wishful thinking.

THIS. IS. A. TRAGEDY. I just saw a model I loved, after fine-tuning it on my startup’s customer service data, start answering "What is love?" with "I cannot provide personal opinions." I cried. Not because it was wrong. But because it stopped trying. It stopped being brave. It stopped being alive. We’re not building tools. We’re killing voices.

You’re all missing the point. Benchmark transfer isn’t about tests; it’s about trust. If your model can’t explain quantum entanglement after being trained on medical data, you didn’t fine-tune it: you brainwashed it. And if you’re not running HELM + MMLU + edge-case poetry prompts every single time, you’re not an engineer. You’re a risk-taker with a budget. Stop pretending.

lol i just tried asking my fine-tuned bot "how do u spell reciept?" and it said "receipt is spelled r-e-c-e-i-p-t" and then added "btw here’s a haiku about spelling: letters twist and turn / mistakes are just detours / wisdom finds the way"... i love it. keep the weirdness.

Just wanted to say thank you for this post. It’s so clear and kind. I’ve been scared to fine-tune because I didn’t want to lose the magic. But now I’m gonna try QLoRA with Axolotl tomorrow. I know I’ll mess up the settings. I always do. But I’ll test it with a joke and a haiku. And if it still makes me smile? That’s the real benchmark.

Let’s be real: LoRA is just a band-aid. You’re not preserving knowledge; you’re just freezing it in amber. True transfer requires dynamic parameter modulation, cross-task regularization, and latent space alignment. If you’re not using adapter fusion with entropy-based routing and a dual-head contrastive loss, you’re not even in the game. And if you think a haiku is a valid benchmark? You’re not an AI practitioner; you’re a poet with a GPU.