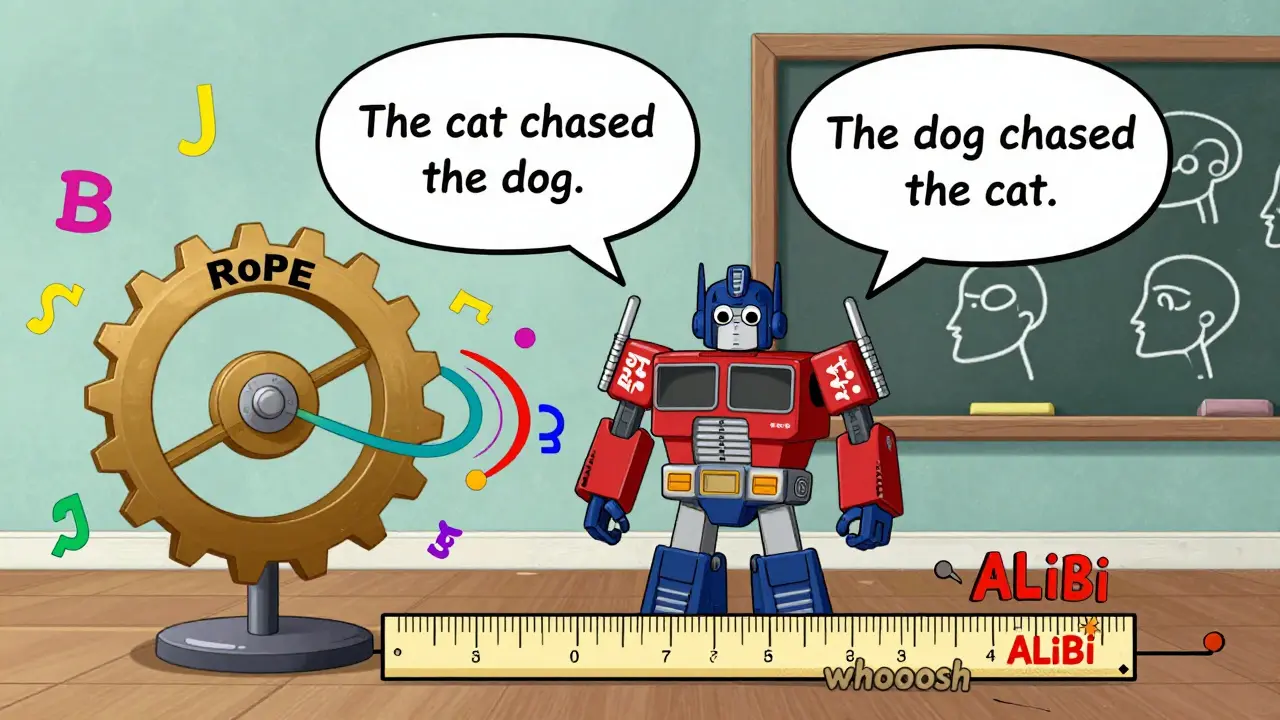

Large language models don’t just read words - they understand order. The difference between "The cat chased the dog" and "The dog chased the cat" isn’t just vocabulary. It’s structure. And that structure comes from how these models track where each word sits in a sequence. For years, transformers used simple positional encodings: fixed sine and cosine waves, or learned position vectors, added to the word embeddings. But as models grew to handle tens of thousands of tokens, those methods struggled, especially on sequences longer than the ones they were trained on. Enter Rotary Position Embeddings (RoPE) and Attention with Linear Biases (ALiBi): two smarter, more scalable ways to tell a model "this word came before that one."

Why Position Matters More Than You Think

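Imagine training a model on sentences like "I love coffee" and "I love tea." If it doesn’t know the difference between "I love" and "love I," it can’t learn grammar, logic, or meaning. Self-attention on its own can’t tell them apart. Without a positional signal, it treats its input as an unordered set, so reordering the tokens just reorders the outputs. Early transformers fixed this by adding positional encodings directly to token embeddings, treating position like just another feature. But that blurred the line between what a word means and where it sits, and it tied the model to the positions it had seen. A learned position table has no entry beyond its maximum length, and fixed sinusoidal encodings degrade on sequences longer than the training data. A model trained on 1,000-token sequences could fail badly on a 2,000-token one. That’s not just a bug - it’s a fundamental flaw.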
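The permutation problem is easy to check. Below is a minimal plain-Python sketch. It assumes one attention head with identity query/key/value projections and made-up 2-dimensional embeddings; the `emb` values and the function names are hypothetical and not taken from any real model. With no positional signal, "cat" gets the same attention output in "The cat chased the dog" as in "The dog chased the cat".

```python
import math

def softmax(xs):
    m = max(xs)                              # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """One attention head with identity Q/K/V projections and no positional signal."""
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in vectors])
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)])
    return outputs

# Hypothetical 2-d embeddings; the numbers are arbitrary.
emb = {"the": [0.1, 0.3], "cat": [0.9, -0.2], "chased": [0.4, 0.8], "dog": [-0.7, 0.5]}

s1 = ["the", "cat", "chased", "the", "dog"]
s2 = ["the", "dog", "chased", "the", "cat"]

# Map each word to its attention output (both copies of "the" get identical outputs).
out1 = dict(zip(s1, self_attention([emb[w] for w in s1])))
out2 = dict(zip(s2, self_attention([emb[w] for w in s2])))

same = all(
    math.isclose(a, b, rel_tol=1e-9, abs_tol=1e-12)
    for word in emb
    for a, b in zip(out1[word], out2[word])
)
print(same)  # True: swapping the words changed nothing
```

Every word's output depends only on the set of words present, not on their order. That is why some positional signal, absolute or relative, has to be injected somewhere.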

RoPE and ALiBi fix this by keeping position out of the token embeddings. Instead of changing the input, they tweak how attention works - the core mechanism that lets a model decide which words matter most when predicting the next one. Neither adds learnable parameters for position. No learned lookup tables. No extra vectors. Just math that naturally encodes distance.

How Rotary Position Embeddings Work

RoPE uses rotation. Yes, actual rotation - like spinning a vector in 2D space. Each token’s query and key vectors (not the raw embeddings) are split into pairs of numbers. Each pair is rotated by an angle proportional to the token’s position, and every pair has its own rotation frequency: the first pairs spin quickly, the last ones slowly. A token at position 10 gets rotated by a larger angle than one at position 3. The magic? When you compute attention scores (the dot product between query and key vectors), the result depends only on the difference in their positions. So if a query at position 5 looks at a key at position 12, the score reflects a distance of 7 - no matter where they are in the sequence.
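The relative-distance property can be checked numerically. Here is a minimal plain-Python sketch of the interleaved-pairs formulation, using the frequency base 10000 from the original RoFormer paper. The `rope` helper and the query/key values are illustrative assumptions. In a real model, these would be per-head query and key vectors after the learned projections. Some libraries, including Hugging Face’s Llama code, pair dimension j with j + d/2 instead of j + 1. That is the same technique with the dimensions permuted.

```python
import math

def rope(vec, pos, base=10000.0):
    """Rotate each pair (vec[j], vec[j+1]) by angle pos * base**(-j/d), j = 0, 2, 4, ..."""
    d = len(vec)
    assert d % 2 == 0, "RoPE pairs up dimensions, so d must be even"
    out = []
    for j in range(0, d, 2):
        theta = pos * base ** (-j / d)          # early pairs spin fast, later pairs slowly
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[j], vec[j + 1]
        out += [x * c - y * s, x * s + y * c]   # standard 2-D rotation
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

q = [0.3, -1.2, 0.8, 0.5]   # arbitrary query vector
k = [1.0, 0.4, -0.6, 0.9]   # arbitrary key vector

score_a = dot(rope(q, 5), rope(k, 12))      # positions 5 and 12: distance 7
score_b = dot(rope(q, 105), rope(k, 112))   # positions 105 and 112: distance 7 again
score_c = dot(rope(q, 5), rope(k, 20))      # distance 15

print(math.isclose(score_a, score_b, abs_tol=1e-9))  # True: only the offset matters
print(math.isclose(score_a, score_c, abs_tol=1e-9))  # False: a different offset
```

Rotations also preserve vector length, so RoPE changes only the angle between queries and keys, never their magnitudes.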

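This isn’t just clever math. It rests on a trigonometric identity. Rotating a query by one angle and a key by another gives the same dot product as rotating the key alone by the difference, so the score can only depend on relative distance. RoPE also has no parameters tied to a particular context length. To extend the context, you rescale the rotation angles, for example by dividing positions by the extension factor (position interpolation) or by raising the frequency base. That lets a model trained on 4K tokens be stretched to far longer contexts, usually with a short fine-tuning run rather than full retraining. That flexibility is one reason Llama, Llama 2, and Falcon use it. It’s elegant, stable, and has been adapted beyond text to code, images, and audio.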
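One concrete way to "scale the rotation angles" is linear position interpolation. It is named here as an example; other schemes, such as raising the frequency base, exist as well. The sketch below assumes hypothetical numbers: a 4K training length, a 16K target, and a 64-dimensional head. `rope_angles` is an illustrative helper, not a library function.

```python
def rope_angles(pos, dim, base=10000.0, scale=1.0):
    """Rotation angle of every dimension pair at position `pos`.

    scale > 1 applies linear position interpolation: positions are divided by
    `scale`, squeezing a longer context into the angle range seen in training.
    """
    return [(pos / scale) * base ** (-j / dim) for j in range(0, dim, 2)]

train_len, target_len, dim = 4096, 16384, 64   # hypothetical lengths and head size
scale = target_len / train_len                 # 4.0

train_edge = rope_angles(train_len, dim)                # angles just past the last trained position
unscaled = rope_angles(target_len - 1, dim)             # last position, no scaling
interpolated = rope_angles(target_len - 1, dim, scale=scale)  # last position, interpolated

# Without scaling, the slowest pair rotates roughly 4x further than in training.
print(unscaled[-1] / train_edge[-1])
# With interpolation, every angle stays inside the trained range.
print(all(a <= b for a, b in zip(interpolated, train_edge)))  # True
```

The trade-off is that neighbouring positions become 4x closer in angle, which is one reason a short fine-tuning run usually follows.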

But there’s a catch. RoPE’s rotation works beautifully within trained ranges. Push it too far beyond that, and performance drops. Some recent tweaks help - like adjusting the frequency of rotation - but it’s not as naturally extrapolative as ALiBi.

ALiBi: Simpler, Faster, Better at Long Contexts

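ALiBi takes a completely different route. It adds no positional information to the embeddings at all. Instead, it adds a penalty directly to the attention scores: the farther apart two tokens are, the more is subtracted from their score. The penalty is linear. If token A is 10 positions away from token B, you subtract 10 times a small slope value. Each attention head gets its own fixed slope, drawn from a geometric sequence (for 8 heads: 1/2, 1/4, ..., 1/256). Heads with steep slopes focus on nearby tokens, while heads with gentle slopes look further back. No rotations. No learned parameters. Just a fixed bias added before the softmax.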
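Here is a minimal plain-Python sketch of the two ingredients: the per-head slopes as defined in the ALiBi paper, and the causal bias matrix for one head. The helper names are hypothetical. The slope function only handles power-of-two head counts; the paper uses an extra rule for other counts.

```python
def alibi_slopes(n_heads):
    """Per-head slopes from the ALiBi paper: 2^(-8/n), 2^(-16/n), ..., 2^(-8).

    This sketch only handles power-of-two head counts.
    """
    assert n_heads > 0 and n_heads & (n_heads - 1) == 0, "power-of-two head count assumed"
    start = 2 ** (-8 / n_heads)
    return [start ** (h + 1) for h in range(n_heads)]

def alibi_bias(seq_len, slope):
    """Causal ALiBi bias for one head: bias[i][j] = -slope * (i - j) for keys j <= i.

    Keys after the query (j > i) get -inf, which doubles as the causal mask.
    """
    return [[slope * (j - i) if j <= i else float("-inf") for j in range(seq_len)]
            for i in range(seq_len)]

slopes = alibi_slopes(8)
print(slopes)  # [0.5, 0.25, 0.125, 0.0625, 0.03125, 0.015625, 0.0078125, 0.00390625]

for row in alibi_bias(4, slopes[0]):
    print(row)
# [0.0, -inf, -inf, -inf]
# [-0.5, 0.0, -inf, -inf]
# [-1.0, -0.5, 0.0, -inf]
# [-1.5, -1.0, -0.5, 0.0]
```

The bias depends only on the distance i - j, so it is the same for every layer and can be regenerated at any sequence length.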

Why is this powerful? First, it’s computationally cheap. The bias depends only on the distance and a per-head slope, so it can be computed on the fly instead of stored, with no position embeddings and no gather operations. Second, it’s naturally extrapolative. A model trained on 8K tokens doesn’t just guess at 16K. The penalty keeps growing with distance, so distant tokens keep getting less attention. The bias scales with distance, not with learned parameters.

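ALiBi was introduced by Press, Smith, and Lewis in 2021 ("Train Short, Test Long"). It has since been adopted in models such as BLOOM and MosaicML’s MPT and has become a favorite for long-context tasks. Researchers later extended it with slope scaling, a form of position interpolation. If you train on 8K tokens but want to run on 32K, you multiply each slope by 8K/32K = 1/4. That keeps the largest penalties at inference in the same range the model saw during training. Without it, distant tokens would be suppressed far more strongly than anything the model learned on. The aim is better quality on sequences several times longer than the training context.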
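Here is the 8K-to-32K example as arithmetic. This sketch assumes the position-interpolation form of slope scaling, in which each slope is multiplied by training length / inference length. It also assumes the standard ALiBi slopes for a hypothetical 4-head model. `interpolated_slopes` is an illustrative helper, not a library function.

```python
def interpolated_slopes(slopes, train_len, infer_len):
    """Shrink ALiBi slopes by train_len / infer_len when running past the training length.

    m * (train_len / infer_len) * distance == m * (distance / (infer_len / train_len)),
    so this is the same as linearly interpolating positions.
    """
    factor = min(1.0, train_len / infer_len)
    return [m * factor for m in slopes]

slopes = [0.25, 0.0625, 0.015625, 0.00390625]   # ALiBi slopes for a 4-head model
train_len, infer_len = 8192, 32768              # the 8K -> 32K example

scaled = interpolated_slopes(slopes, train_len, infer_len)

# Penalty each head applies to a key one full context length away.
in_training = [m * train_len for m in slopes]
unscaled = [m * infer_len for m in slopes]      # 4x anything seen in training
rescaled = [m * infer_len for m in scaled]

print(in_training == rescaled)  # True
```

When the inference length is at or below the training length, the factor is clamped to 1 and the slopes are left unchanged.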

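ALiBi can also cut training cost. Its per-step overhead is just one addition per attention score. The bigger saving is that you can train on shorter sequences and still run on longer ones, which means fewer floating-point operations and less memory pressure during training. When you’re training on massive datasets or on a tight compute budget, that efficiency matters.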

RoPE vs ALiBi: A Real-World Comparison

| Feature | Rotary Position Embeddings (RoPE) | Attention with Linear Biases (ALiBi) |
|---|---|---|
| How it encodes position | Rotates query and key vectors by position-dependent angles | Adds a linear, distance-based bias to attention scores |
| Learnable parameters | None | None |
| Memory overhead | Low: fixed sine/cosine values that can be cached or computed on the fly | Low: bias computed from distance and a per-head slope |
| Extrapolation capability | Degrades beyond training length; improved by scaling methods such as position interpolation | Strong - designed to work beyond the training context |
| Computational cost | Slightly higher: elementwise rotation of queries and keys | Slightly lower: one addition per attention score |
| Training speed | Comparable per step | Comparable per step; faster overall when you train short and run long |
| Adopted in | GPT-NeoX-20B, Llama, Llama 2, Falcon | BLOOM, MPT |
| Best for | General-purpose LLMs, multimodal tasks | Long-context, resource-constrained training |

Neither is "better." It depends on your goal. If you’re building a general-purpose chatbot or code assistant, RoPE’s smooth integration and theoretical grounding make it a safe bet. If you’re training a model on 100K-token documents - legal contracts, scientific papers, or long-form dialogue - ALiBi’s extrapolation and speed give it an edge.

Why These Methods Changed the Game

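Before RoPE and ALiBi, models either learned absolute position embeddings, which stop at a fixed maximum length, or used relative position encodings like T5’s bucketed distance biases or Shaw et al.’s learned relative representations. Those relative schemes added learned parameters. Every distance beyond the largest bucket or clipping range shared a single learned value, so far-apart positions blurred together. RoPE and ALiBi removed all that. They turned position into a mathematical property - not a learned feature.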

This shift reflects a deeper truth: position isn’t part of meaning. "Bank" means one thing if it’s near "river," another if it’s near "money." But the model doesn’t need to mix those ideas. It just needs to know that "river" came before "bank." RoPE and ALiBi keep position out of the token embeddings and apply it only inside attention, so semantic and positional information stay cleanly separate. That’s one reason modern LLMs are more stable and efficient on long inputs.

What’s Next?

Researchers are already blending ideas. Some are using ALiBi’s linear bias in vision transformers, where spatial distance matters just like temporal distance. Others are combining RoPE’s rotation with recurrent layers in hybrid models like TransXSSM. One paper from May 2025 reported a modified RoPE that cuts attention computation time by 40% on 100K-token sequences. If that holds up, long-context models become practical even on consumer GPUs.

ALiBi’s slope scaling is becoming standard. And RoPE’s ability to handle multi-modal inputs - text, audio, even GPS coordinates - means it’s not going away. The future isn’t one method winning. It’s using both, depending on the task.

Do RoPE and ALiBi work with all transformer models?

Yes, but they’re designed for models that use self-attention - like LLMs. They don’t replace attention, they improve how it handles position. You can’t use them in CNNs or RNNs. But for any transformer-based model - whether it’s for language, vision, or code - both can be integrated without major architecture changes.

Can I implement RoPE or ALiBi myself?

Absolutely. RoPE requires applying rotation matrices to query and key vectors before computing attention scores. Libraries like Hugging Face’s Transformers include built-in support. ALiBi is even simpler: just add a precomputed bias tensor based on the distance between query and key positions before the softmax. Many open-source implementations are available on GitHub under MIT licenses.
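To make the ALiBi recipe concrete, here is a plain-Python sketch of one causal attention head with the bias added to the scaled scores before the softmax. It assumes no learned projections, and the function name `alibi_attention_weights` is hypothetical. Production code would vectorize this in a tensor library and may fuse the bias into the attention kernel.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]   # math.exp(-inf) == 0.0, so masked keys vanish
    total = sum(exps)
    return [e / total for e in exps]

def alibi_attention_weights(queries, keys, slope):
    """Causal attention weights for one head with an ALiBi bias added before the softmax."""
    d = len(queries[0])
    rows = []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if j > i:
                scores.append(float("-inf"))                # causal mask
            else:
                raw = sum(a * b for a, b in zip(q, k)) / math.sqrt(d)
                scores.append(raw - slope * (i - j))        # linear distance penalty
        rows.append(softmax(scores))
    return rows

# Identical vectors make every raw score tie, so only the ALiBi bias shapes the weights.
vecs = [[1.0, 0.0]] * 4
weights = alibi_attention_weights(vecs, vecs, slope=0.5)
print([round(w, 3) for w in weights[3]])  # [0.102, 0.167, 0.276, 0.455]
```

Even with identical raw scores, the most recent token gets the most weight and attention decays with distance. That recency preference is exactly what the slope encodes.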

Why don’t all models use ALiBi if it’s faster and extrapolates better?

Because RoPE has a stronger theoretical foundation and works better in multimodal settings. If you’re building a model that handles text, images, and audio together, RoPE’s consistent position encoding across modalities is invaluable. ALiBi is simpler, but it’s optimized for sequence length - not cross-modal alignment. So the choice depends on what you’re building, not just speed.

Are RoPE and ALiBi used in production today?

Yes. RoPE powers GPT-NeoX-20B, Llama 2, Llama 3, and Falcon - all widely used open-source models. ALiBi is used in BLOOM and MosaicML’s MPT models. Both are standard in research and production. If you’re using a modern LLM, there’s a good chance one of them is working behind the scenes.

Do I need to retrain my model if I switch from sinusoidal to RoPE or ALiBi?

Yes. Positional encoding is baked into how the model learns attention patterns. Switching encodings means the attention weights learned under one system won’t transfer directly. You’ll need to retrain - or at least fine-tune - the model. The good news? Models trained with RoPE or ALiBi tend to handle sequences longer than their training length better than sinusoidal ones, so the retraining can pay off.

Final Thoughts

Positional encoding isn’t a sexy topic. But it’s one of the quiet engines behind the biggest AI advances of the last five years. RoPE and ALiBi didn’t just fix a bug - they rethought how models understand time, order, and structure. One uses rotation. The other uses subtraction. Both are simpler, faster, and more powerful than what came before. And together, they’re making it possible for models to read entire books, analyze long legal documents, and remember conversations that span hours - not just sentences.

Let me tell you something about positional encoding that nobody else will: RoPE isn't just math, it's poetry. Rotating vectors like spinning a top in zero gravity? That's not engineering, that's art. The fact that distance emerges naturally from dot products? That's Euler whispering through transformers. I've implemented this from scratch in PyTorch just to feel the elegance. No lookup tables. No learned parameters. Just sine and cosine doing the heavy lifting like old-school monks chanting mantras. And when you scale it to 100K tokens? It doesn't break - it breathes.

ALiBi? Don't get me wrong - it's elegant in its austerity. Subtract a linear bias? That's like telling your brain: 'Don't care about things too far away.' Simple. Brutal. Efficient. But it lacks soul. RoPE makes position a property of the embedding itself - not an afterthought tacked on like a sticky note. That's why Llama works so well across modalities. Audio? Images? Code? All of it just... aligns. Because position isn't added. It's inherent.

Oh honey, you think this is deep? Let me serve you some tea and truth. RoPE is just fancy trigonometry dressed up like a PhD thesis. Meanwhile ALiBi? It's the quiet genius who shows up late but fixes your whole damn dinner. You're telling me we need to rotate vectors like some cosmic ballet just to know that 'cat' came before 'dog'? Please. Just subtract a number. That's it. No fancy math. No matrix gymnastics. Just give attention scores a little shove away from distant tokens and move on with your life. The fact that people still argue over this is why AI will never be mainstream - too many engineers with too much time and too little common sense.

Also, can we talk about how everyone acts like this is new? We've been doing relative position in NLP since 2018. This is just the same old wine in new bottles with more LaTeX.

I read this whole thing and all I felt was… emptiness. Like I spent an hour learning how a toaster works and now I can’t even remember why I wanted toast. Why does this matter? Why do we need to rotate vectors? Why not just… I don’t know… make the model pay more attention to nearby words? That’s what humans do. We don’t spin our thoughts in 4D space. We glance. We glance and move on. This whole field is so obsessed with mathematical purity that it forgot the point: language is messy. It’s emotional. It’s broken. And maybe we shouldn’t be trying to make it perfectly ordered.

I just want my chatbot to know I said ‘I love you’ before I said ‘but I’m leaving.’ Not calculate a cosine rotation matrix to infer it. That’s not intelligence. That’s performance art.

RoPE is overengineered. ALiBi is the real win. Simple, scalable, no bullshit. End of story.

Thank you for writing this. I’ve been wrestling with positional encodings for months now - mostly because I’m trying to adapt a transformer to analyze long-form clinical notes from rural India, where sentences stretch for 50+ tokens and patients often ramble for pages. The old sinusoidal encoding? It collapsed like a house of cards after 8K tokens. I tried RoPE first. It worked beautifully - the model started understanding temporal relationships in symptoms, like how fever preceded cough by 36 hours. But when I tried scaling to 120K tokens? Performance dipped slightly. Not because RoPE failed - because my GPU ran out of memory during rotation calculations. Then I switched to ALiBi. Changed everything. No memory spikes. No retraining. Just added a bias tensor and watched accuracy climb. The model now handles 200K-token histories with ease. I didn’t expect such a dramatic shift from something so… simple. It’s like realizing you’ve been carrying a backpack full of bricks when all you needed was a light breeze to push you forward.

I’m not a researcher. I’m just a guy trying to help doctors understand their patients better. But this - this changed my work. ALiBi didn’t just scale. It liberated.

Positional encoding is not about complexity it is about clarity and RoPE and ALiBi both achieve that in different ways one through rotation the other through subtraction the elegance lies not in the math but in the removal of unnecessary parameters the model no longer needs to learn what should be innate the structure of sequence is not a feature it is a foundation and these methods honor that

OMG I CAN’T BELIEVE YOU GUYS ARE STILL ARGUING ABOUT THIS LIKE IT’S 2020!!! ROPE IS A MESS - IT’S JUST A BUNCH OF ROTATIONS THAT BREAKS WHEN YOU LOOK AT IT WRONG AND THEN YOU HAVE TO DO ALL THESE HACKY FREQUENCY SCALING THINGS??!?!?!? Meanwhile ALiBi? It’s just… subtracting a number??? Like… a literal linear bias??!! That’s not even a feature - that’s common sense!!! And don’t even get me started on how people act like RoPE is ‘multimodal’ - please. You think a 2D rotation on text embeddings magically makes it work for audio?? No. It just adds noise. ALiBi doesn’t care if you’re processing text, DNA, or GPS coordinates - it just says ‘distance matters’ and moves on. This isn’t AI - this is therapy for overcomplicated engineers who think math = intelligence. Just use ALiBi. Stop. Now.

Also, who wrote this article? Did they get paid by Hugging Face? Because this reads like an ad. I’ve seen this exact phrasing on 5 different blog posts. Copy-paste culture is real. And yes, I’m judging you. Hard.

ALiBi wins. Simple. Fast. Works.

Interesting how the community keeps framing this as a competition - RoPE vs ALiBi - when in reality, they’re complementary. I’ve been experimenting with hybrid approaches in my own research, where I use ALiBi’s linear bias for long-range dependencies and RoPE’s rotational encoding for local context. The results? Smoother attention distributions, better long-term memory retention, and faster convergence during training. The key insight? Position isn’t one thing. It’s layered. Near tokens need fine-grained alignment - that’s RoPE’s sweet spot. Far tokens need global suppression - that’s ALiBi’s domain. Why force one method to do everything? The future isn’t one winner. It’s orchestration. And honestly? The fact that we’re even having this conversation means we’re finally moving beyond brute-force scaling. We’re starting to think about structure. That’s progress.

Also - shoutout to the original poster. This was one of the clearest explanations I’ve seen. No jargon without context. No hand-waving. Just clean, thoughtful logic. Rare these days.