Imagine calling customer service and, instead of hearing a robotic voice or waiting for a reply, reaching an assistant that sees your face, hears your tone, reads the photo of your broken device, and responds with a solution, all in under half a second. That’s not science fiction anymore. Real-time multimodal assistants powered by large language models are here, and they’re changing how machines understand and interact with us.

What Exactly Are These Assistants?

These aren’t just chatbots that can read text. They’re systems that process text, images, audio, and video all at once. Think of them as AI brains with eyes, ears, and a mouth. They don’t wait for you to type out a question. If you show them a photo of a leaking faucet while saying, “Can you help me fix this?”, they’ll analyze the image, listen to your voice, understand your urgency, and reply with step-by-step instructions, both out loud and on screen.

The magic comes from multimodal large language models (MLLMs). Unlike older AI that handled one type of data at a time, these models are trained on mixed inputs. Google’s Gemini, OpenAI’s GPT-4o, and Meta’s Llama 3 are the leaders today. They don’t just recognize objects in photos; they understand how those objects relate to spoken words and context. A 2025 Stanford benchmark showed GPT-4o correctly links audio cues like “It’s too dark” with a dimly lit room image 91.3% of the time. That’s human-level accuracy.

How Fast Are They Really?

Speed is everything. If a system takes more than 800 milliseconds to respond, users feel like they’re on a laggy video call. The best systems today respond in under 500ms for text, audio, and still images, while video is still slower. Typical response times by input type:

- Text: 120-180ms (fastest)

- Audio: 300-600ms

- Images: 450-800ms

- Video: 700-900ms

This is where GPT-4o leads for real-time use: text at 120ms, audio at 300ms, images at 450ms. Gemini 1.5 Pro is better with long videos but slower on text. Llama 3 is open-source and flexible but takes 280ms just to process a simple text question. For businesses, latency isn’t just a technical detail; a slow assistant is a customer-experience killer. A 2025 Capterra survey found 63% of users abandoned tools that took longer than 800ms to respond.
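The thresholds above can be turned into a simple latency-budget check. This is a minimal sketch that assumes the 500ms target and the 800ms abandonment line quoted in this section. The function names and the three tier labels are illustrative only and do not come from any vendor SDK.

```python
# Thresholds taken from the figures quoted in this article (assumptions, not a standard).
TARGET_MS = 500    # "best systems" respond in under this
ABANDON_MS = 800   # beyond this, users reportedly start abandoning tools


def classify_latency(latency_ms: float) -> str:
    """Bucket a measured response time into a hypothetical user-experience tier."""
    if latency_ms < TARGET_MS:
        return "real-time"
    if latency_ms <= ABANDON_MS:
        return "noticeable lag"
    return "abandonment risk"


def over_budget(latencies_ms: dict[str, float], budget_ms: float = TARGET_MS) -> list[str]:
    """Return, sorted by name, the modalities whose latency is not under the budget."""
    return sorted(m for m, ms in latencies_ms.items() if ms >= budget_ms)


if __name__ == "__main__":
    # GPT-4o figures as quoted in the text above.
    gpt4o = {"text": 120, "audio": 300, "image": 450}
    for modality, ms in gpt4o.items():
        print(f"{modality}: {ms}ms -> {classify_latency(ms)}")
    print("over budget:", over_budget(gpt4o))
```

In practice you would feed this with measured end-to-end times (request sent to first audible or visible response), not vendor-quoted numbers.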

Where Are They Being Used Right Now?

Real-time multimodal assistants aren’t just lab experiments. They’re live in three major areas:

Customer Service

Zendesk’s 2025 report found companies using these assistants cut average resolution time by 47%. A customer uploads a photo of a damaged laptop, describes the issue out loud, and gets a repair quote and shipping label, all in one conversation. But there’s a catch: there are 12% more first-contact failures, which happen when the system misreads a combination of voice tone and image details. It’s not perfect, but it’s faster than humans.

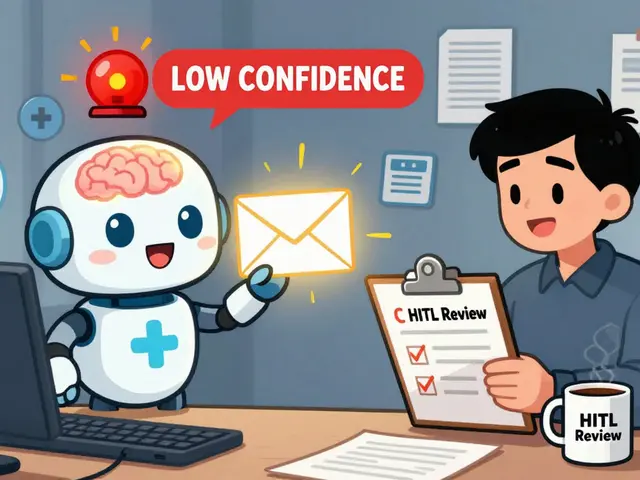

Healthcare

Hospitals are testing these tools to help doctors. Imagine a nurse showing a real-time video of a patient’s skin rash while describing symptoms. The assistant cross-references the visual pattern with medical databases and suggests possible diagnoses. Accuracy is high (83.7% on multimodal medical benchmarks), but experts like Dr. Fei-Fei Li warn: “Coherent doesn’t mean correct.” A model can sound confident while missing a rare condition. That’s why these tools are assistants, not replacements.

Education

MIT’s 2024 study gave students multimodal tutors. One student filmed themselves solving a math problem. The assistant watched, heard their thought process, and pointed out where they went wrong. Engagement jumped 38.2%. Kids who struggled with text-based learning thrived with video and voice feedback.

The Hidden Problems

These systems aren’t magic. They have serious flaws.

- Modality imbalance: Text is 92.6% accurate. Images? Only 78.2%. Audio? 81.4%. The system leans on what it’s best at, and that creates blind spots.

- Sync issues: On Hacker News, 31% of complaints were about audio lagging behind video. You see a person smile, but the voice says “I’m not happy.” It’s creepy.

- Hardware hunger: Running one of these assistants needs a GPU with at least 24GB VRAM. Enterprise versions need 4-8 NVIDIA A100s. That’s $100,000+ in hardware. Most small businesses can’t afford it.

- The illusion of understanding: Professor Yoshua Bengio warned that these models generate convincing responses without real comprehension. If a doctor relies on one to diagnose a tumor, and the model misses a key detail because it “assumed” the image was blurry, lives are at risk.
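A back-of-the-envelope calculation makes the 24GB figure easier to reason about. Model weights take roughly (parameter count) × (bytes per parameter). The sketch below makes two assumptions: a hypothetical 11-billion-parameter model, and a flat 2GB allowance for activations and cache. Real overhead varies with context length, batch size, and serving framework.

```python
# Approximate bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Memory for the model weights alone, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_gpu(num_params: int, dtype: str, vram_gb: float, overhead_gb: float = 2.0) -> bool:
    """Check whether weights plus a flat, assumed overhead fit in GPU memory."""
    return weight_memory_gb(num_params, dtype) + overhead_gb <= vram_gb


if __name__ == "__main__":
    params = 11_000_000_000  # hypothetical 11B-parameter multimodal model
    for dtype in ("fp32", "fp16", "int8", "int4"):
        need = weight_memory_gb(params, dtype)
        print(f"{dtype}: {need:.1f} GB of weights, fits in 24 GB: {fits_on_gpu(params, dtype, 24)}")
```

Under these assumptions, a 16-bit model of that size just fills a 24GB card. Quantizing to 8 or 4 bits shrinks the weights proportionally, usually at some cost in accuracy.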

Who’s Leading the Pack?

Here’s how the top three compare as of early 2026:

| System | Text Latency | Image Latency | Audio Latency | Video Handling | Accuracy (MMMU Benchmark) | Cost (per 1K tokens) |
|---|---|---|---|---|---|---|
| GPT-4o | 120ms | 450ms | 300ms | Good | 91.3% | $0.0015 text, $0.012 image |
| Gemini 1.5 Pro | 180ms | 750ms | 550ms | Excellent | 89.7% | $0.002 text, $0.015 image |
| Llama 3 Multimodal | 280ms | 650ms | 500ms | Fair | 84.1% | Free (open-source) |

GPT-4o wins on speed and consistency. Gemini 1.5 Pro handles long videos best. Llama 3 is free but slower. For startups, Llama 3 is the smart pick. For customer service bots? GPT-4o is the default.
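To see what the per-token prices in the table mean for a real workload, multiply each token count by its rate. This is a minimal sketch that assumes the table's prices and a made-up monthly volume. How many image tokens one photo costs depends on each provider's own accounting, which this sketch does not model. The Llama 3 row also ignores hardware, which is where its real cost lies.

```python
# Per-1K-token prices copied from the comparison table above (USD).
PRICES_PER_1K = {
    "GPT-4o": {"text": 0.0015, "image": 0.012},
    "Gemini 1.5 Pro": {"text": 0.002, "image": 0.015},
    "Llama 3 Multimodal": {"text": 0.0, "image": 0.0},  # no API fee; hardware not included
}


def workload_cost(system: str, text_tokens: int, image_tokens: int) -> float:
    """Estimate the API cost in USD for a workload on the given system."""
    rates = PRICES_PER_1K[system]
    return (text_tokens / 1000) * rates["text"] + (image_tokens / 1000) * rates["image"]


if __name__ == "__main__":
    # Hypothetical monthly workload: 2M text tokens and 500K image tokens.
    for system in PRICES_PER_1K:
        print(f"{system}: ${workload_cost(system, 2_000_000, 500_000):,.2f}")
```

For image-heavy support traffic, the image rate dominates. In this example, images account for two thirds of the GPT-4o bill.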

What’s Coming Next?

The next 12 months will be wild:

- NVIDIA Blackwell Ultra: Released January 2025, it cuts latency by 40%. Soon, you’ll run a multimodal assistant on a laptop.

- Gemini 1.5 Flash: Already hitting 220ms average. It’s designed for mobile apps and smart devices.

- Project Astra (Google): Targeting sub-100ms response by late 2025. Imagine your phone assistant reacting before you finish speaking.

- W3C Multimodal API Standard: A new global standard is being drafted. That means apps will work across platforms, with no more locked-in systems.

By 2027, the market is projected to hit $22.6 billion. But adoption won’t be even. Tech giants will dominate. Small businesses will wait until hardware costs drop. And regulators? The EU’s AI Act now requires multimodal systems to hit 85% accuracy before they can process biometric data. That’s a big hurdle.

Should You Use One?

If you’re building a customer service tool, yes, but only if you can guarantee sub-500ms latency. If you’re a developer, start with Llama 3. It’s free, well-documented, and has a growing community. If you’re a user? These assistants are already helping you. You just might not know it.

They’re not perfect. They’re not human. But they’re faster, cheaper, and more consistent than most people realize. The future isn’t about replacing humans. It’s about giving them superpowers.

Can real-time multimodal assistants understand sarcasm or emotional tone?

They’re getting better, but not reliably. Audio analysis can detect pitch and speed, and image analysis can spot facial expressions. But sarcasm relies on cultural context, timing, and nuance. GPT-4o gets it right about 74% of the time in controlled tests. In the real world? That drops to 58%. It’s still a major weakness.

Do these assistants need constant internet access?

Yes, for now. Most real-time systems rely on cloud-based GPUs. Even Meta’s open-source models require server-side processing. Offline versions exist, but they’re slow, less accurate, and limited to simple tasks like image captioning. True real-time multimodal interaction still needs the cloud.

Are these systems a privacy risk?

Extremely. They process video, audio, and images-all sensitive data. If a customer service bot records your face and voice to improve responses, who owns that data? The EU’s AI Act requires explicit consent and data deletion options. In the U.S., there’s no federal rule yet. Companies like OpenAI and Google claim they don’t store raw data, but audits have shown occasional leaks. Always check privacy policies.
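On the application side, "explicit consent and data deletion options" come down to two rules. First, store nothing unless the user opted in. Second, delete everything on request. The class below is a hypothetical in-memory sketch of those two rules only. It is not a compliance implementation, and a real system would also need to purge backups, logs, and any derived data.

```python
from dataclasses import dataclass, field


@dataclass
class MediaStore:
    """Hypothetical in-memory store that keeps user media only with explicit consent."""
    _items: dict[str, list[bytes]] = field(default_factory=dict)
    _consent: set[str] = field(default_factory=set)

    def grant_consent(self, user_id: str) -> None:
        self._consent.add(user_id)

    def save(self, user_id: str, blob: bytes) -> bool:
        # Refuse to persist face, voice, or image data unless the user opted in.
        if user_id not in self._consent:
            return False
        self._items.setdefault(user_id, []).append(blob)
        return True

    def delete_user_data(self, user_id: str) -> int:
        # Honor a deletion request: revoke consent and drop all stored media.
        self._consent.discard(user_id)
        return len(self._items.pop(user_id, []))


if __name__ == "__main__":
    store = MediaStore()
    print(store.save("user-1", b"<face frame>"))   # not stored: no consent yet
    store.grant_consent("user-1")
    print(store.save("user-1", b"<voice clip>"))   # stored
    print(store.delete_user_data("user-1"))        # number of items deleted
```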

Can I build my own real-time multimodal assistant?

You can try, but it’s not easy. You need experience with PyTorch, CUDA, and multimodal data pipelines. GitHub has over 14,000 open-source projects, but most are research prototypes. Setting up a working system takes 8-12 weeks for experienced developers. For most people, using an API like GPT-4o or Gemini is the only practical option.
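On the API route, most of the work in a multimodal request is packaging. This sketch builds a request body shaped like OpenAI's Chat Completions API, where a user message's `content` is a list that mixes text parts and `image_url` parts. The helper name and the placeholder image bytes are hypothetical. The sketch only builds the payload; it does not send it or handle authentication.

```python
import base64


def build_chat_request(question: str, image_bytes: bytes,
                       mime_type: str = "image/jpeg", model: str = "gpt-4o") -> dict:
    """Build a Chat Completions request body pairing a text question with one image."""
    # The image is sent inline as a base64 data URL.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url",
                     "image_url": {"url": f"data:{mime_type};base64,{encoded}"}},
                ],
            }
        ],
    }


if __name__ == "__main__":
    placeholder_jpeg = b"\xff\xd8\xff"  # stand-in for a real photo's bytes
    body = build_chat_request("What's wrong with this laptop, and can it be repaired?",
                              placeholder_jpeg)
    print(body["messages"][0]["content"][1]["image_url"]["url"][:30])
```

With the official `openai` Python package, the same body can be passed as keyword arguments, for example `client.chat.completions.create(**body)`. Spoken audio input goes through separate models and content types and is not covered here.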

Will these assistants replace human jobs?

Not replace-augment. In customer service, they handle routine queries, freeing humans for complex cases. In healthcare, they flag potential issues for doctors to review. In education, they give students instant feedback so teachers can focus on deeper learning. The goal isn’t to remove people. It’s to make them more effective.

I've been testing GPT-4o in our support system and honestly? It's a game-changer. We cut resolution time by nearly half. The only hiccup is when someone's voice cracks or the lighting's weird - then it goes full robot mode. But even then, it's faster than a human scrolling through KB articles. Let's not pretend this is perfect, but it's definitely better than the old IVR hell.

One must acknowledge the profound epistemological limitations inherent in these so-called 'multimodal' systems. They do not 'understand' - they statistically interpolate patterns derived from colossal corpora. To conflate probabilistic output with comprehension is not merely inaccurate, it is ontologically reckless. The notion that a machine can 'read' sarcasm or emotional nuance is a linguistic fallacy dressed in silicon.

You think this is about AI? Nah. This is all a psyop. The real reason they're pushing multimodal models is to harvest your facial micro-expressions, vocal tremors, and retinal patterns. Every time you show your broken phone or sigh into a mic, you're feeding a shadow network that's building a behavioral map of every human on Earth. And don't even get me started on how they're training on medical scans without consent. The EU's 85% rule? That's just to make it look regulated. It's all a smokescreen.

What's fascinating isn't the speed or the accuracy - it's how these systems expose our own assumptions about intelligence. We assume if something responds fluently, it 'gets' us. But fluency ≠ understanding. A child can mimic a parent's tone without knowing why. Same here. The real value isn't in replacing humans - it's in revealing how little we actually know about what 'understanding' means. We're building mirrors that reflect back our biases, not wisdom.

Look, if you're not leveraging real-time multimodal assistants in your customer experience stack, you're leaving 47% of your resolution efficiency on the table. The latency metrics are non-negotiable - 500ms is the new redline. Anything above that and you're in 'friction zone' territory where churn spikes. GPT-4o's 120ms text + 300ms audio combo is the gold standard for enterprise-grade deployment. Llama 3? Great for dev environments, but if you're scaling to production, you need the throughput, not the open-source halo. And yes, the hardware cost is brutal - but compare that to the cost of a single unhappy customer walking away. ROI isn't even a question anymore.

Oh wow, so we're just gonna ignore the fact that these systems are basically emotional parrots with a PhD in pattern matching? They detect a frown and say 'I'm sorry you're having trouble' - like that's empathy. Meanwhile, they miss sarcasm 42% of the time and think a stressed voice means 'urgent' instead of 'I'm about to lose my mind.' And let's not pretend the 91.3% accuracy on MMMU is real - that's lab conditions with curated data. Real users don't speak in clean audio clips. They mumble, interrupt, and cry. This isn't innovation - it's automation theater.

I work with students who have severe learning disabilities. The first time one of them showed a video of themselves struggling with algebra and the assistant said, 'You're on the right track - try this step again,' they started crying. Not because it was perfect. Because for once, someone saw them. Not the diagnosis. Not the label. Them. That's what this tech does - it doesn't replace humanity. It reveals how much we've forgotten to see each other. We're not building smarter machines. We're rebuilding how we care.

The hardware cost is the real bottleneck, honestly. Even if the model is free, like Llama 3, you still need a GPU with 24GB of VRAM, which means a whole server setup, plus power, cooling, maintenance, and someone who knows how to fix it when it breaks. Most small businesses just can't do that, and the cloud options are too expensive for long-term use. So yeah, the tech is cool, but the infrastructure is still a wall.

I must respectfully point out that the article contains several grammatical inconsistencies: for example, 'GPT-4o wins on speed and consistency.' should be preceded by a comma after 'consistency,' and 'It’s still a major weakness.' lacks a proper subject-verb agreement in context. Furthermore, the use of 'sub-500ms' without hyphenation in formal writing is nonstandard. The data presented is compelling, but the presentation undermines its credibility. Precision matters - especially when discussing systems that claim to understand human language.