You build a Retrieval-Augmented Generation system. You connect it to your company’s internal documents. You expect it to answer questions accurately without leaking secrets. Instead, you find customer names, social security numbers, and financial records sitting right there in the prompt sent to a third-party Large Language Model. This is not a hypothetical nightmare; it is the reality for most early adopters of enterprise AI.

According to a May 2024 analysis by Lasso Security, a staggering 68% of initial RAG Retrieval-Augmented Generation systems that combine external knowledge with LLMs implementations exposed sensitive data through unredacted prompts or source documents. The gap between raw power and secure deployment is widening. That is where Privacy-Aware RAG A specialized implementation of RAG designed to prevent sensitive data exposure when integrating external knowledge sources with LLMs comes into play. It is not just a nice-to-have feature anymore. With regulations like the EU AI Act enforcing privacy-by-design by Q3 2025, this architecture has become the baseline for any serious enterprise AI strategy.

The Core Problem: Why Standard RAG Fails Privacy Checks

To understand why we need a new approach, look at how standard RAG works. You take a user query. You search a vector database for relevant chunks of text. You send both the query and those chunks to an LLM API. The model reads everything and generates an answer. Simple? Yes. Secure? Absolutely not.

In a standard setup, 100% of the retrieved document content goes straight to the LLM provider. If that document contains a patient record, a trade secret, or a credit card number, it leaves your controlled environment. Dr. Sarah Robinson, Principal AI Security Researcher at Palo Alto Networks, calls this out clearly. She notes that RAG implementations without privacy safeguards represent one of the fastest-growing attack vectors in enterprise AI. In fact, 43% of breaches in 2023 originated from unsecured RAG pipelines.

The issue is not just about malicious hackers. It is about accidental exposure. A support agent asks a question about a client. The system retrieves a contract containing the client's full address and phone number. That data travels across the internet to a cloud server owned by someone else. Once it is there, you lose control over who sees it, how long it is stored, or whether it gets used to train future models. Privacy-Aware RAG fixes this by inserting a filter layer that strips sensitive information before it ever touches the LLM.

Two Architectural Approaches to Privacy-Aware RAG

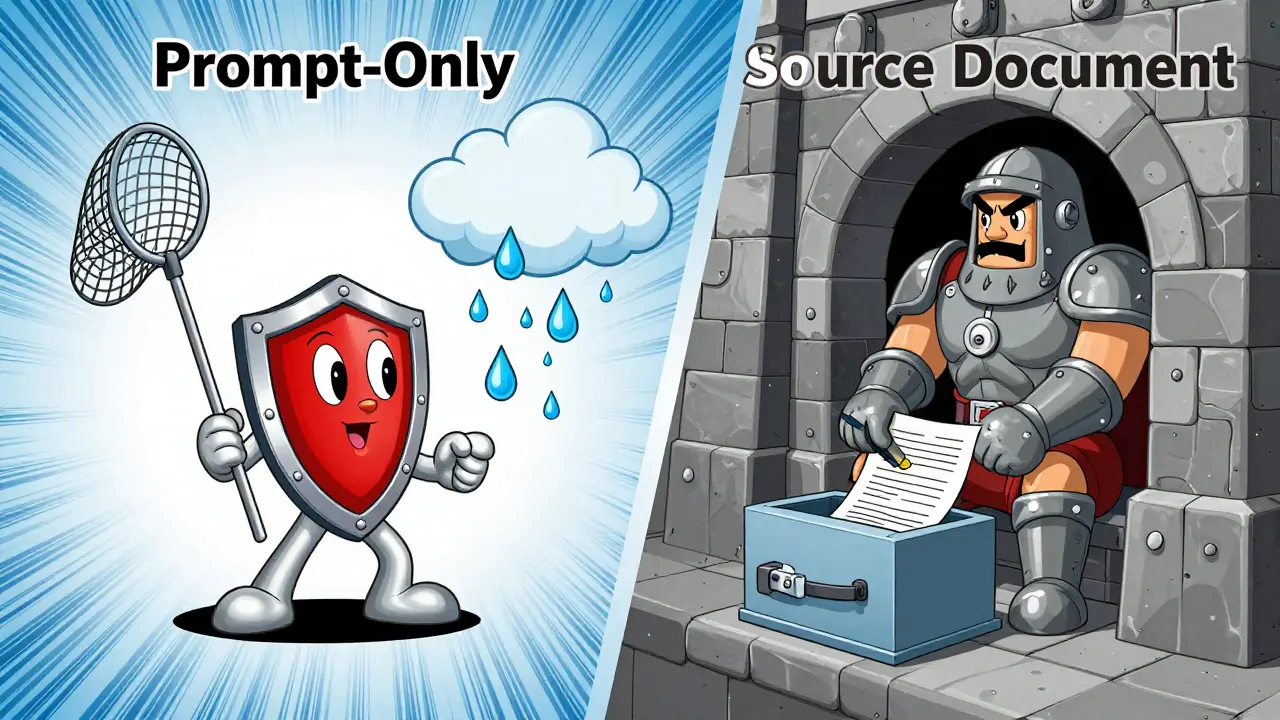

When building these systems, you generally have two paths. Each has different speed, cost, and accuracy implications. Knowing which one fits your use case saves months of debugging.

| Feature | Prompt-Only Privacy | Source Documents Privacy |

|---|---|---|

| Processing Type | Online, real-time | Offline batch process |

| Latency Impact | Adds 150-300ms per transaction | Reduces real-time latency by 35-50% |

| Storage Overhead | None | Increases storage needs by 20-40% |

| Data Exposure Risk | Raw data exists in embeddings | 0% raw sensitive data in embeddings |

| Best For | Low-sensitivity docs, high-speed queries | Highly regulated data (HIPAA, PCI) |

Prompt-only privacy operates as a real-time shield. As defined in Private AI's December 2023 guide, this method redacts sensitive information from input prompts immediately before transmission to LLM APIs. It processes the query and retrieval results on the fly. Benchmark tests by 4iApps in March 2024 show it handles transactions at speeds of 150-300ms. This is fast enough for most chat interfaces. However, the original sensitive data still sits in your vector database. If someone compromises the database, they get the raw secrets.

Source documents privacy takes a harder stance. It functions as an offline batch process. You redact sensitive information from source documents before embedding and storage. This means the vector database itself never holds the raw sensitive data. Salesforce's Q2 2024 technical assessment showed this reduces real-time processing latency significantly because the heavy lifting happens during ingestion, not querying. The trade-off is storage. You need 20-40% more capacity due to additional metadata layers that track what was removed and where. But for industries handling Protected Health Information (PHI) or Payment Card Industry (PCI) data, this extra space is worth the peace of mind.

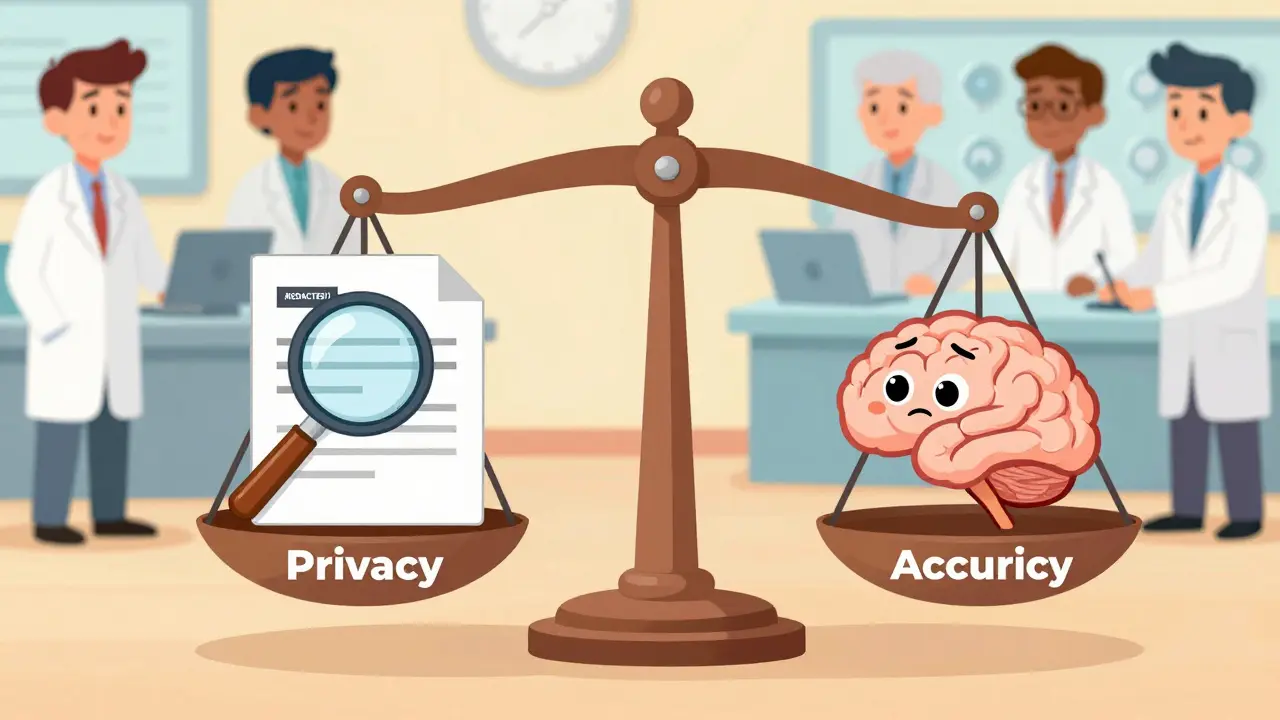

Accuracy vs. Privacy: The Trade-Off Reality

You cannot have perfect privacy and perfect accuracy simultaneously. Not yet. Removing context removes signal. When you strip a name or a number from a sentence, you might also remove the clue the LLM needed to answer correctly.

Standard RAG achieves about 92.3% factual accuracy in enterprise knowledge retrieval tasks. Privacy-Aware RAG with aggressive redaction settings drops to 88.7%. That sounds bad, but the gap narrows. Google Cloud's November 2024 case study with healthcare clients showed that with optimized redaction thresholds, the accuracy difference shrinks to just 2.1%. The key is tuning. You do not want to redact every noun. You want to redact Personally Identifiable Information (PII) while keeping the semantic meaning intact.

Professor David Kim of MIT's AI Lab warns about the dangers of going too far. He cautions that over-redaction creates new hallucination risks. When the LLM receives insufficient context, it starts guessing. In complex query scenarios, this can increase factual errors by up to 18%. So, the goal is not maximum redaction. The goal is precise redaction. You need tools that distinguish between "John" (a common name, maybe safe depending on context) and "John's SSN is 123-45-6789" (definitely unsafe).

Key Components of a Secure Pipeline

Building this requires more than just adding a plugin. You need a layered defense strategy. Here are the critical components that must work together:

- Dynamic Data Masking: This is the engine. K2View's architecture specifies a requirement for 99.98% accuracy in PII detection to meet compliance standards. You need models trained specifically on your domain data. Generic detectors fail on edge cases like medical record numbers or custom IDs.

- Role-Based Access Controls (RBAC): Not everyone should see the same data. Gartner's August 2024 survey found that 78% of enterprise RAG systems implement RBAC to restrict data access based on user permissions. A junior analyst should not retrieve executive compensation details even if their query semantically matches.

- Vector Database Encryption: Even if you redact prompts, your database is a target. Palo Alto Networks identified that encrypting vector databases reduces unauthorized data access by 87%. Use providers like Pinecone or Weaviate that offer built-in encryption at rest and in transit.

- Contextual AI Redaction: Rule-based systems miss nuance. Hybrid approaches combine rule-based redaction for structured data (achieving 99.95% accuracy for credit card numbers) with contextual AI redaction for unstructured text (87.4% accuracy). This combination covers both obvious patterns and hidden meanings.

Implementation Challenges and Real-World Costs

Let us talk about the hard part. Getting this right is not cheap, and it is not fast. Organizations report 8-12 weeks of dedicated effort for initial implementation. You need skills in NLP, data security, and LLM operations. Job postings for RAG implementation roles cite proficiency with frameworks like LangChain or LlamaIndex in 76% of cases. Knowledge of differential privacy appears in 42% of listings.

Consider the case of a global insurance company documented in Salesforce's December 2024 customer report. They deployed Privacy-Aware RAG across 12,000 agents. The result? 99.8% PII protection while maintaining 89% answer accuracy. Impressive. But the implementation took six months of development and cost $385,000 in customization. That is the price of doing business securely in 2026.

Failures happen when companies cut corners. A healthcare provider suffered a HIPAA violation in Q2 2024 after insufficient redaction of medical record numbers affected 14,000 patients. The HHS Office for Civil Rights released enforcement actions against them in August 2024. The lesson is clear: validation is non-negotiable. Forrester's senior analyst Maria Chen warned that organizations implementing Privacy-Aware RAG without proper validation risk creating false confidence. In their Q3 2024 assessment, 61% of tested solutions failed to catch edge-case PII exposures.

Market Trends and Future Outlook

The market is moving fast. IDC estimates the broader enterprise AI market at $14.2 billion in October 2024. The specific Privacy-Aware RAG segment is projected to reach $2.8 billion by 2026, growing at a 63% CAGR. Financial services lead adoption at 58%, followed by healthcare at 47%. Retail and manufacturing lag behind, likely due to lower regulatory pressure and budget constraints.

Competitive landscape analysis shows three dominant players. Embedding service providers like OpenAI and Cohere add privacy features to their APIs, holding 45% market share. Specialized privacy startups like Private AI and Lasso Security hold 28%. Enterprise platform vendors like Salesforce and Google Cloud integrate these features into broader suites, taking the remaining 27%.

Looking ahead, standardization is coming. NIST is working on RAG-specific privacy guidelines expected in Q2 2025. The IETF formed a working group on 'Privacy-Preserving Retrieval Protocols' in September 2024. However, challenges remain. Handling multilingual PII is tough; current tools achieve only 76.4% accuracy for non-English content according to Meta's October 2024 evaluation. And fine-tuning remains a blind spot. 68% of organizations still struggle to maintain privacy during the fine-tuning process.

G analysts predict that by 2026, 85% of enterprise RAG deployments will incorporate privacy-preserving techniques, up from just 32% in 2024. This shift is driven largely by regulation. 73% of implementations cite regulatory compliance as the primary driver. Cost reduction and brand protection follow as secondary factors.

What is the difference between Prompt-Only and Source Documents Privacy?

Prompt-Only privacy redacts sensitive data in real-time right before sending the query to the LLM. It is faster but leaves raw data in your vector database. Source Documents privacy redacts data offline before storing it in the vector database. This ensures zero raw sensitive data exists in the embeddings, offering higher security but requiring more storage and upfront processing time.

Does Privacy-Aware RAG reduce the accuracy of AI answers?

Yes, slightly. Standard RAG achieves about 92.3% factual accuracy, while Privacy-Aware RAG with aggressive redaction drops to 88.7%. However, with optimized redaction thresholds, this gap can narrow to just 2.1%. The key is balancing redaction precision to avoid removing necessary context while protecting sensitive identifiers.

How much does implementing Privacy-Aware RAG cost?

Costs vary widely based on scale. A global insurance company reported spending $385,000 in customization costs over six months for a deployment covering 12,000 agents. Smaller implementations may cost less, but expect significant investment in skilled labor, as teams require expertise in NLP, data security, and LLM operations. Initial implementation typically takes 8-12 weeks.

Which industries are leading in Privacy-Aware RAG adoption?

Financial services lead with 58% adoption, driven by strict regulations like FINRA and PCI DSS. Healthcare follows closely at 47% due to HIPAA requirements. Government agencies are at 39%. Retail and manufacturing lag behind at 22% and 18% respectively, often due to lower immediate regulatory pressure compared to finance and health.

Can I use open-source tools for Privacy-Aware RAG?

You can, but documentation quality varies. Open-source toolkits average a 3.2/5 rating on GitHub for clarity. Commercial solutions like Private AI receive higher marks (4.6/5) for support and documentation. Using open-source frameworks like LangChain or LlamaIndex is common, but you will likely need to build custom entity recognition models to handle domain-specific PII effectively.

What are the biggest risks of not using Privacy-Aware RAG?

The primary risk is data leakage. Without safeguards, sensitive data like PII, PHI, or trade secrets can be exposed to third-party LLM providers. This leads to compliance violations (GDPR, HIPAA), potential fines, reputational damage, and increased vulnerability to attacks. Studies show 43% of recent AI-related breaches originated from unsecured RAG pipelines.

How effective are current tools at detecting non-English PII?

Current performance is limited. According to Meta's October 2024 evaluation, existing tools achieve only 76.4% accuracy for non-English content. This makes multilingual deployments challenging and highlights the need for specialized training on diverse language datasets to improve detection rates outside of English.