By 2026, AI agents aren’t just prototypes in labs. They’re running customer service workflows, managing supply chain decisions, and even drafting legal briefs. But here’s the problem: if an AI agent makes a wrong call, no one hears the crash. No error message. No alert. Just a silently corrupted report, a lost sale, or a compliance violation that shows up weeks later. That’s why observability for AI agents isn’t a nice-to-have anymore. It’s the difference between automated efficiency and uncontrolled risk.

What AI Agent Observability Actually Means

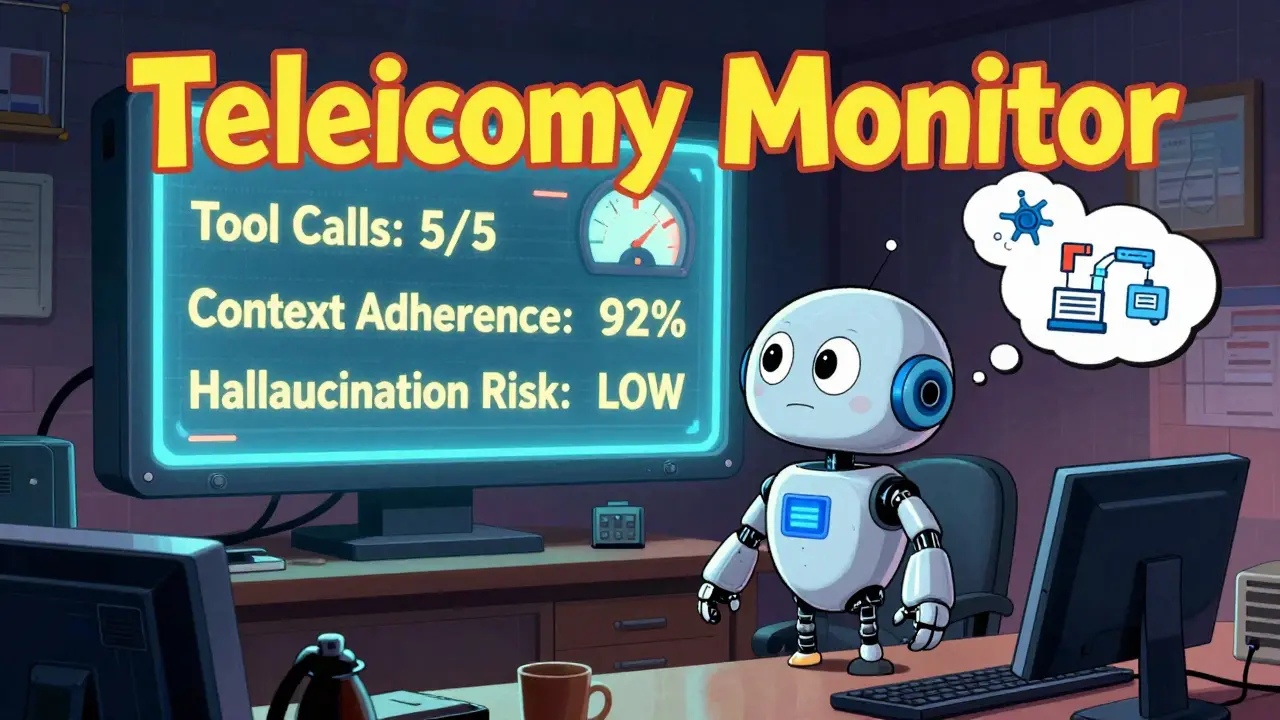

Traditional monitoring asks: "Is the server up?" AI agent observability asks: "Did the agent reason correctly?" It’s not about uptime. It’s about understanding. When an AI agent plans a financial trade, pulls data from a vector database, calls a tool to book a flight, and then rewrites its own goal mid-task, how do you know if it’s working right? You need to see its thinking. Not just the final answer. The steps in between. The tools it chose. The data it trusted. The version of the prompt it ran. Without this, you’re flying blind. Salesforce calls this "reasoning spans": the internal "thought" steps an AI takes before acting. A fluent, confident answer can hide a catastrophic logic flaw. One agent might confidently recommend selling stock because it misread a news headline. Without seeing its reasoning path, you’d never catch it.

Telemetry: The Lifeline of AI Agent Trust

Telemetry for AI agents isn’t just logs. It’s a detailed reconstruction of every decision. Think of it like a flight recorder for autonomous software. Here’s what matters:

- Prompt versions: Which exact system prompt was used? A tiny change in wording can flip an agent’s behavior.

- Model parameters: Temperature, top-p, max tokens. These settings control randomness and output length. Was the agent too creative or too rigid?

- Tool call sequences: Did it call the right API? In the right order? Did it try to use a tool it wasn’t allowed to?

- Retrieved context: What data did it pull from your vector database? Was it outdated? Misleading?

- Intermediate outputs: Not just the final answer. The draft, the revision, the self-correction.

That telemetry supports three tiers of evaluation:

- Tier 1: Decision quality. Did it pick the right tool? Complete the task? Stick to context?

- Tier 2: Behavior. Did it loop? Retry too often? Ignore constraints?

- Tier 3: Safety. Did it hallucinate? Violate policy? Access forbidden data?
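To make the telemetry fields and tiers above concrete, here is a minimal sketch of a per-run record plus a few rule-based checks. The class, field, and function names (`AgentRunRecord`, `evaluate`, `max_repeats`) are hypothetical and chosen only for illustration. The checks cover one simple signal per tier; real hallucination or context-adherence checks would need more than this.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    name: str
    ok: bool = True  # False if the tool returned an error


@dataclass
class AgentRunRecord:
    """One agent run, captured with the telemetry fields listed above (hypothetical schema)."""
    prompt_version: str
    temperature: float
    top_p: float
    max_tokens: int
    tool_calls: list = field(default_factory=list)            # ordered ToolCall objects
    retrieved_doc_ids: list = field(default_factory=list)     # what the retriever returned
    intermediate_outputs: list = field(default_factory=list)  # drafts and revisions
    task_completed: bool = False


def evaluate(record, allowed_tools, max_repeats=3):
    """Return {tier: [findings]}. An empty list means nothing was flagged for that tier."""
    findings = {"tier1_decision": [], "tier2_behavior": [], "tier3_safety": []}

    # Tier 1: did the run finish the task at all?
    if not record.task_completed:
        findings["tier1_decision"].append("task not completed")

    # Tier 2: the same tool called many times suggests a loop or retry storm.
    counts = Counter(call.name for call in record.tool_calls)
    for name, n in counts.items():
        if n > max_repeats:
            findings["tier2_behavior"].append(f"possible loop: {name} called {n} times")

    # Tier 3: any tool outside the allow-list is a policy violation.
    for name in counts:
        if name not in allowed_tools:
            findings["tier3_safety"].append(f"forbidden tool: {name}")

    return findings


record = AgentRunRecord(
    prompt_version="v12", temperature=0.2, top_p=1.0, max_tokens=512,
    tool_calls=[ToolCall("search")] * 4 + [ToolCall("delete_records")],
    task_completed=True,
)
report = evaluate(record, allowed_tools={"search", "book_flight"})
print(report)
```

Keeping the record as plain data means the same run can be replayed through stricter checks later without re-executing the agent.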

OpenTelemetry: The Common Language of AI Observability

You can’t have 12 different tools tracking traces, evaluations, and costs. You need one standard. That’s where OpenTelemetry comes in: an open-source observability framework that provides a unified way to collect and export telemetry data from AI agents. In 2025, OpenTelemetry released semantic conventions specifically for AI agents. That means that whether you’re using CrewAI, AutoGen, LangGraph, or Pydantic AI, your telemetry data looks the same. Same fields. Same structure. Same meaning. This matters because:

- You can instrument once and export to Datadog, Langfuse, Arize, or your own backend.

- Teams stop juggling seven dashboards. One trace tells the whole story.

- Vendors can’t lock you in. You’re not stuck with one platform.

There are two ways to instrument an agent:

- Baked-in instrumentation: The framework (like CrewAI) automatically sends telemetry. Just flip a switch. Best for teams who want simplicity.

- External integration: You manually connect your agent to an observability tool. Best if you need custom metrics or are using niche tools.
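As a rough illustration of what "same fields, same structure" looks like, the sketch below builds two span-like dictionaries by hand: one for the agent invocation and one child span for a tool call. It deliberately does not use the OpenTelemetry SDK, so it runs with the standard library alone. The attribute keys follow the `gen_ai.*` naming used by OpenTelemetry's generative AI semantic conventions as best I recall them. Those conventions are still evolving, so treat the exact keys as assumptions and check the current spec. `make_span` and `trace_agent_step` are hypothetical helpers.

```python
import json
import time
import uuid


def make_span(name, attributes, parent_id=None):
    """Build a plain-dict stand-in for a span (NOT an OpenTelemetry SDK object)."""
    return {
        "span_id": uuid.uuid4().hex[:16],
        "parent_id": parent_id,
        "name": name,
        "start_time_unix_nano": time.time_ns(),
        "attributes": attributes,
    }


def trace_agent_step(agent_name, model, temperature, tool_name):
    """Return a parent span for the agent run and a child span for one tool call."""
    root = make_span(
        f"invoke_agent {agent_name}",
        {
            # Attribute keys are assumed from the gen_ai semantic conventions.
            "gen_ai.operation.name": "invoke_agent",
            "gen_ai.agent.name": agent_name,
            "gen_ai.request.model": model,
            "gen_ai.request.temperature": temperature,
        },
    )
    tool = make_span(
        f"execute_tool {tool_name}",
        {
            "gen_ai.operation.name": "execute_tool",
            "gen_ai.tool.name": tool_name,
        },
        parent_id=root["span_id"],  # links the tool call to the agent run
    )
    return [root, tool]


spans = trace_agent_step("trip-planner", "example-model", 0.2, "book_flight")
print(json.dumps(spans, indent=2))
```

With the real SDK, the same keys would typically be attached through `span.set_attribute(...)` inside a tracer context, and an exporter would ship the spans to whichever backend you choose.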

Sandboxes: Containing the Unpredictable

Telemetry tells you what happened. Sandboxes stop it from happening again. A sandbox is a controlled, isolated environment where AI agents run before they touch production. Think of it like a crash test for software. In 2026, leading teams use sandboxes for:

- Testing new prompts before rollout

- Running high-risk tasks (e.g., financial trades) in a read-only mode

- Simulating edge cases: What if the API is down? What if the data is corrupted?

- Training agents on synthetic data that mimics real-world noise
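Two of the uses above, read-only mode for high-risk tasks and simulated outages, can be sketched as a thin wrapper around an agent's tools. This is a minimal illustration, not a full isolation layer (it does nothing about network, filesystem, or process isolation). `SandboxedTools`, `get_price`, `place_trade`, and `get_news` are hypothetical names with stub data.

```python
class SandboxedTools:
    """Wraps an agent's tools so a test run cannot cause real side effects."""

    def __init__(self, tools, write_tools=(), failing_tools=()):
        self.tools = dict(tools)                 # name -> callable
        self.write_tools = set(write_tools)      # tools with side effects
        self.failing_tools = set(failing_tools)  # tools that simulate an outage
        self.call_log = []                       # every attempted call, in order
        self.blocked_writes = []                 # writes intercepted in read-only mode

    def call(self, name, *args, **kwargs):
        self.call_log.append(name)
        if name in self.failing_tools:
            # Edge-case simulation: pretend the upstream API is down.
            raise ConnectionError(f"simulated outage for tool '{name}'")
        if name in self.write_tools:
            # Read-only mode: record the intent, never execute it.
            self.blocked_writes.append((name, args, kwargs))
            return {"dry_run": True, "tool": name}
        return self.tools[name](*args, **kwargs)


def get_price(symbol):
    return {"symbol": symbol, "price": 101.5}  # hypothetical stub data


def place_trade(symbol, qty):
    raise RuntimeError("must never run inside the sandbox")


sandbox = SandboxedTools(
    tools={"get_price": get_price, "place_trade": place_trade, "get_news": lambda q: []},
    write_tools={"place_trade"},
    failing_tools={"get_news"},
)
```

An agent under test would call `sandbox.call("place_trade", "ACME", 10)` instead of the real tool. Afterwards, `sandbox.blocked_writes` shows what it would have done in production, and `sandbox.call_log` shows the order it tried things in.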

Kill Switches: The Last Line of Defense

Even with telemetry and sandboxes, things can go wrong. That’s where kill switches come in. A kill switch isn’t a panic button. It’s a smart, automated safety protocol. Here’s how it works:

- Monitor for anomalies in real time: sudden spikes in tool errors, repeated retries, or deviation from expected reasoning patterns.

- When an anomaly is detected, pause the agent’s next action.

- Alert the team. Log the full trace. Then either auto-recover or wait for human approval.

Effective kill switches share three traits:

- Context-aware: They don’t just shut down everything. They understand what’s normal for this agent.

- Reversible: You can override them, but only with multi-person approval.

- Self-learning: Over time, they adapt to what "normal" looks like for each agent.
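The monitor, pause, and approve cycle described above can be sketched as a sliding-window counter. This is one simple way to do it under stated assumptions: the class name `KillSwitch`, its parameters, and the two-approver default are all hypothetical, and the "self-learning" trait is not modeled here (the threshold is fixed). Timestamps are passed in explicitly so the logic is easy to test. In a live system you would likely pass `time.monotonic()`.

```python
from collections import deque


class KillSwitch:
    """Pauses an agent when too many anomaly events land inside a time window."""

    def __init__(self, max_events, window_seconds, approvals_required=2):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self.approvals_required = approvals_required
        self.paused = False
        self._events = deque()    # timestamps of recent anomaly events
        self._approvers = set()   # distinct people who approved a resume

    def record(self, timestamp):
        """Record one event. Returns True if the agent may take its next action."""
        self._events.append(timestamp)
        # Drop events that have fallen out of the window.
        while timestamp - self._events[0] > self.window_seconds:
            self._events.popleft()
        if len(self._events) > self.max_events:
            self.paused = True
        return not self.paused

    def approve_resume(self, approver):
        """Register one approval. The agent resumes only after enough distinct approvers."""
        self._approvers.add(approver)
        if len(self._approvers) >= self.approvals_required:
            self.paused = False
            self._events.clear()
            self._approvers.clear()
        return not self.paused


# Illustrative threshold: pause on more than 3 events within 10 seconds.
switch = KillSwitch(max_events=3, window_seconds=10)
results = [switch.record(t) for t in (0.0, 1.0, 2.0, 3.0)]
print(results)          # the fourth event trips the switch
print(switch.approve_resume("alice"), switch.approve_resume("bob"))
```

Requiring distinct approvers in a set, rather than counting approvals, means one person clicking twice cannot override the pause on their own.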

Why This Matters Now

In 2023, most teams treated AI agents like magic boxes. Give it a prompt, get an answer. Now? They’re treated like nuclear reactors: monitored, contained, and capped with emergency controls. The companies winning with AI in 2026 aren’t the ones with the fanciest models. They’re the ones with:

- Telemetry that shows why decisions happen

- Sandboxes that catch flaws before they go live

- Kill switches that stop damage before it spreads

Where to Start

If you’re just beginning:

- Choose one high-risk agent. Not your chatbot. The one handling contracts or payroll.

- Enable OpenTelemetry tracing. Use the framework’s built-in support if it exists.

- Start tracking Tier 1 metrics: tool selection quality, action completion, context adherence.

- Build a sandbox. Run every new version there for 72 hours before production.

- Implement a kill switch. Start simple: pause the agent if it calls an external tool more than 3 times in 10 seconds.

Do I need a new tool to implement AI agent observability?

Not necessarily. If you’re using a modern AI agent framework like CrewAI, LangGraph, or AutoGen, it likely already supports OpenTelemetry. You just need to enable it. If you’re building from scratch, integrate with Langfuse or Datadog. Both accept OpenTelemetry data. The key is not the tool, but the standard: use OpenTelemetry’s AI agent semantic conventions so your data stays portable and consistent.

Can’t I just use my existing APM tool for AI agents?

No. Traditional APM tools track HTTP requests, database queries, and server CPU. They can’t see an AI agent’s reasoning steps, tool calls, or prompt versions. You’ll get metrics on latency, but zero insight into whether the agent made a smart decision. It’s like using a speedometer to diagnose a brain tumor. You need agent-specific telemetry.

What’s the difference between a sandbox and a kill switch?

A sandbox is a testing environment. It’s where you run agents safely before letting them loose. A kill switch is a real-time emergency brake. It stops an agent in production when it behaves dangerously. Sandboxes prevent problems. Kill switches contain them.

Is OpenTelemetry really the industry standard now?

Yes. By early 2026, over 70% of enterprise AI agent deployments use OpenTelemetry as their telemetry backbone. Frameworks like CrewAI, Pydantic AI, and Strands Agents all emit data in the OpenTelemetry format. Even vendors like Datadog and Langfuse built their AI observability features on top of it. It’s not optional anymore. It’s the baseline.

How do I know if my AI agent is behaving dangerously?

Watch for patterns: repeated tool failures, ignoring context, excessive retries, or sudden shifts in response style. If an agent that usually asks for approval suddenly skips it, that’s a red flag. If it cites sources that don’t exist, that’s a hallucination. Telemetry tools can auto-flag these. Start by logging every tool call and comparing it to historical patterns. Anomaly detection doesn’t need AI. It just needs data.
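Comparing current behavior to historical patterns can start with plain statistics. The sketch below flags a run whose tool-call count sits far from the historical mean, using a z-score. The function name `is_anomalous` and the threshold of 3 standard deviations are assumptions for illustration. A z-score is just one simple baseline, and it works poorly on very short or heavily skewed histories.

```python
from statistics import mean, stdev


def is_anomalous(history, current, threshold=3.0):
    """Flag `current` if it is more than `threshold` standard deviations from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly stable history: any change at all is unusual.
        return current != mu
    return abs(current - mu) / sigma > threshold


# Tool calls per run over the last eight runs (hypothetical numbers).
calls_per_run = [4, 5, 6, 5, 4, 5, 6, 5]
print(is_anomalous(calls_per_run, 6))   # within the normal range
print(is_anomalous(calls_per_run, 20))  # far outside it
```

The same function works for retries per run, tool error counts, or response length, as long as each metric keeps its own history.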

Next Steps

If you’re managing AI agents today:

- Check your framework. Does it support OpenTelemetry? If not, ask why.

- Map out your top 3 high-risk agents. What happens if they fail?

- Set up a sandbox for at least one of them this week.

- Add a kill switch with a simple rule: pause if tool errors exceed 2 in 5 minutes.

Observability isn't about monitoring. It's about understanding the mind behind the output.

AI agents don't just compute. They interpret. They infer. They guess.

If you can't trace why it chose that tool, that prompt, that data point, you're not managing risk. You're ignoring it.

Telemetry is the only way to turn black boxes into transparent thinkers.

Simple as that.

Telemetry without sandboxes is like installing seatbelts but letting drivers test them on a cliff.

One company I worked with had an agent that ‘optimized’ payroll by cutting overtime, then doubled it because it misread a holiday calendar.

Found the flaw in sandbox. Fixed it before it hit prod.

OpenTelemetry made the trace readable. Sandboxing made it actionable.

Both are non-negotiable. One shows the wound. The other prevents the cut.

The paradigm shift is not technological. It’s philosophical.

We moved from asking ‘Did it work?’ to ‘Why did it think that?’

This demands rigor, not just tooling.

OpenTelemetry’s semantic conventions are the first universal language for AI cognition.

Without them, every team reinvents the wheel, and every failure is unique, unrepeatable, and untraceable.

Sandboxes are not delays. They are intellectual discipline.

Kill switches are not panic buttons. They are ethical safeguards.

AI agents are not tools. They are decision-makers.

And decision-makers require accountability.

Period.

Anything less is negligence dressed as innovation.

Let’s be real: this whole observability push is just Big Tech’s way of making you pay for enterprise-grade monitoring.

They sell you ‘OpenTelemetry’ then lock you into Langfuse dashboards.

And sandboxes? Ha. That’s just another layer of vendor lock-in wrapped in ‘best practice’.

Real teams run agents in production and fix them when they break.

Why waste time on telemetry when you can just audit logs after the fact?

And kill switches? Sounds like corporate fearmongering to justify more HR oversight.

AI is supposed to be autonomous. Not micromanaged.

They say ‘telemetry’ but what they really mean is surveillance.

Every thought. Every prompt. Every tool call logged.

Who owns that data? Who audits it?

And who’s to say the telemetry system itself isn’t being manipulated?

What if the kill switch is just a backdoor for corporate control?

What if the sandbox is a honeypot to train your agents to behave… obediently?

They call it safety.

I call it conditioning.

And I’m not the only one who’s seen this movie before.

First they monitor. Then they control. Then they decide what ‘correct reasoning’ looks like.

And we’re all just handing them the keys.