| Feature | Traditional SaaS | LLM Providers |
|---|---|---|
| Negotiation Focus | Price, Uptime, Implementation | Data Rights, Output Ownership, Bias |
| SLA Type | Static (e.g., 99.9% Uptime) | Dynamic (Accuracy, Drift, Explainability) |
| Liability | Capped at contract value | Tiered (Higher for bias/security) |
| Relationship | Transactional / Periodic Review | Continuous Partnership / Co-development |

Why Your Current Contracts Are Failing Your AI Strategy

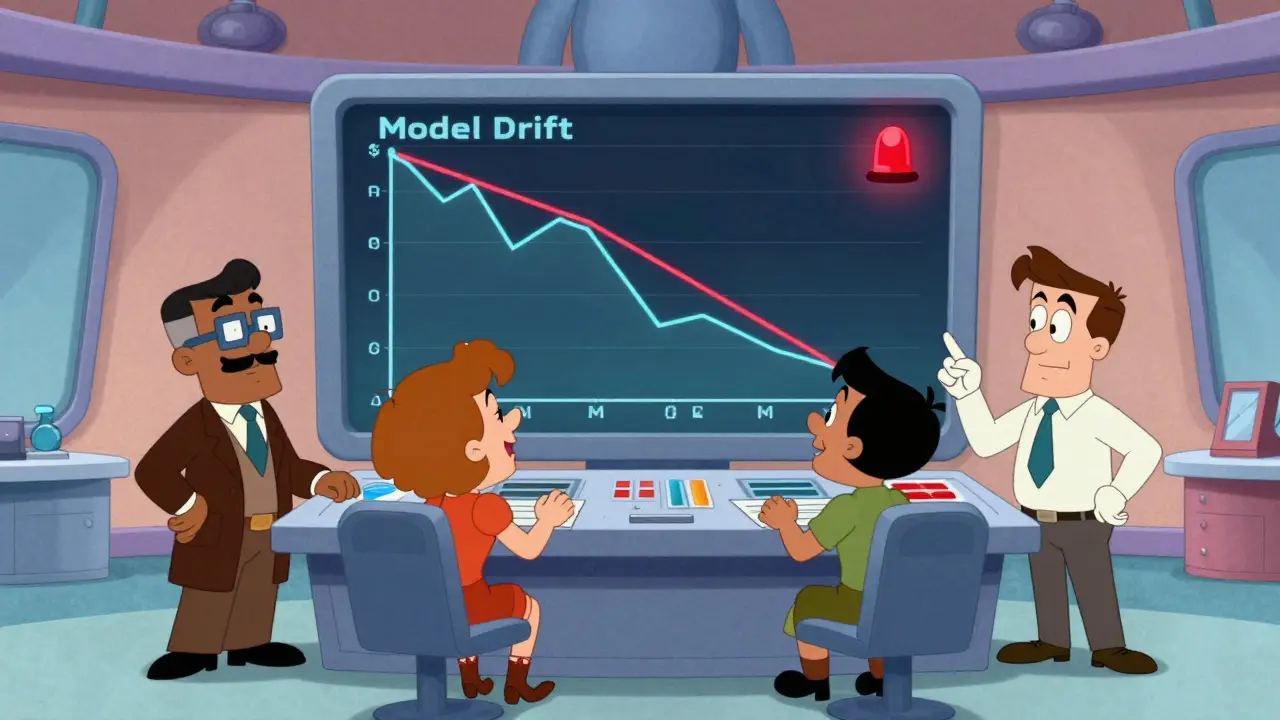

If you use your standard master service agreement (MSA), you're likely ignoring the biggest risks in AI. Most companies spend about 5-10% of their negotiation time on data rights when dealing with traditional software. With LLMs, that needs to jump to 30-40%. Why? Because the line between "your data" and "the model's training set" is incredibly blurry.

One of the most dangerous gaps is the reliance on uptime SLAs. A model can be "up" (responding to pings) while its output quality quietly degrades, a problem known as model drift. Imagine a customer service bot that is 92% accurate in January but drops to 78% by October because the provider tweaked the underlying weights. If your contract only mentions uptime, the vendor has no incentive to fix that drop in quality, and you're stuck paying for a tool that actively misleads your customers.

Critical Contractual Clauses for AI Governance

To protect your organization, you need to move beyond generic legal language and implement AI-specific guardrails. First, address the EU AI Act or similar regional mandates. If you're using AI for high-risk decision-making, your contract must explicitly define the level of human oversight required. You can't just hope the vendor is ethical; you need a contractual right to audit their human-in-the-loop processes.

Then, look at liability. Standard indemnification is obsolete here. You need tiered liability structures. For example, a security breach should have uncapped liability, while damages resulting from biased outputs or misinformation should carry a higher cap (perhaps 3-5x the annual fees) than a simple service outage. This forces the provider to share the risk of the "black box" nature of their technology.

Don't forget the exit strategy. Vendor lock-in is a massive threat in AI. If you build your entire workflow around a proprietary API and the vendor triples the price or changes the model's behavior, you're trapped. Your contract should include interoperability clauses and a pre-negotiated exit plan that guarantees secure data retrieval and a clear timeline for transitioning to another provider or an in-house solution.
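To see how a tiered liability structure plays out in numbers, here is a minimal Python sketch. The incident categories, the `CAP_MULTIPLIERS` table, and the `recoverable_damages` function are all hypothetical names chosen for illustration, and the 1x and 3x multipliers are assumptions picked from the ranges mentioned in this article, not recommended contract terms.

```python
from enum import Enum
from typing import Optional


class Incident(Enum):
    SERVICE_OUTAGE = "service_outage"
    BIASED_OUTPUT = "biased_output"
    SECURITY_BREACH = "security_breach"


# Assumed cap per incident type, expressed as a multiple of annual fees.
# None means liability is uncapped for that incident type.
CAP_MULTIPLIERS: dict[Incident, Optional[float]] = {
    Incident.SERVICE_OUTAGE: 1.0,   # conventional cap (illustrative)
    Incident.BIASED_OUTPUT: 3.0,    # higher tier for bias or misinformation
    Incident.SECURITY_BREACH: None, # uncapped
}


def recoverable_damages(incident: Incident, damages: float, annual_fees: float) -> float:
    """Return the portion of damages recoverable under the tiered caps."""
    multiplier = CAP_MULTIPLIERS[incident]
    if multiplier is None:
        return damages  # uncapped tier: full damages are recoverable
    return min(damages, multiplier * annual_fees)


if __name__ == "__main__":
    # Same loss, same contract size, three different incident types.
    for incident in Incident:
        amount = recoverable_damages(incident, damages=2_000_000, annual_fees=500_000)
        print(f"{incident.value}: {amount:,.0f}")
```

With the same loss and the same annual fees, the recoverable amount changes only with the incident type, which is the point of negotiating tiers rather than a single flat cap.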

Setting Performance Metrics That Actually Matter

Stop measuring success by whether the API responds. Instead, implement dynamic SLAs based on these three pillars:

- Accuracy Thresholds: Define a minimum acceptable accuracy rate for your specific use case (e.g., 85-95%). If the model falls below this, it should trigger a remediation period.

- Drift Detection: Set a limit on monthly degradation, typically between 0.5% and 2%. This ensures the model doesn't slowly lose its edge.

- Explainability Standards: Require that a certain percentage of decisions (e.g., 80%) must be interpretable. You can't tell a regulator "the AI just said so."
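
The three pillars above can be checked mechanically once your validation team has monthly measurements. The following is a rough Python sketch, not a definitive implementation: `SlaTerms` and `sla_breaches` are hypothetical names, the default thresholds are taken from the example figures in this section, and drift is assumed to mean the month-over-month drop in accuracy measured in percentage points.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SlaTerms:
    min_accuracy: float = 0.85           # accuracy threshold
    max_monthly_drift: float = 0.02      # allowed month-over-month drop
    min_explainable_share: float = 0.80  # share of interpretable decisions


def sla_breaches(
    terms: SlaTerms,
    monthly_accuracy: list[float],
    explainable_share: float,
) -> list[tuple[str, str]]:
    """Return (period, metric) pairs for every SLA term that was breached."""
    breaches: list[tuple[str, str]] = []
    previous = None
    for month, accuracy in enumerate(monthly_accuracy, start=1):
        if accuracy < terms.min_accuracy:
            breaches.append((f"month {month}", "accuracy"))
        # Small tolerance so floating-point noise does not trigger a breach.
        if previous is not None and previous - accuracy > terms.max_monthly_drift + 1e-9:
            breaches.append((f"month {month}", "drift"))
        previous = accuracy
    if explainable_share < terms.min_explainable_share:
        breaches.append(("overall", "explainability"))
    return breaches


if __name__ == "__main__":
    # Made-up measurements for illustration only.
    report = sla_breaches(SlaTerms(), [0.92, 0.91, 0.88, 0.84], explainable_share=0.75)
    for period, metric in report:
        print(f"{period}: {metric} breach")
```

Note that a drift breach can fire while accuracy is still above the minimum threshold. That early warning is what a static uptime SLA cannot provide.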

The Operational Reality: Who Does the Work?

Many executives sign these deals and then wonder why the project fails. The problem is a lack of personnel. Legal teams aren't data scientists, and data scientists aren't procurement experts. You need a cross-functional "AI War Room" to manage these vendors. A typical enterprise setup requires:

- AI-Specialized Legal Counsel: 2-3 attorneys who understand the nuances of AI copyright and liability.

- Model Validation Team: 3-5 data scientists dedicated to testing the vendor's claims and monitoring for drift.

- Procurement Specialists: 2-3 people who can manage the complex, evolving pricing models of AI tokens.

Choosing Your Tooling: CLM vs. AI-Specific Platforms

Traditional Contract Lifecycle Management (CLM) tools are struggling to keep up. While a standard CLM is great for storing PDFs, you need something that can integrate with your actual AI telemetry. This is why platforms like Sirion AI and Icertis are gaining ground. They use a hybrid approach: Large Language Models for broad contextual understanding of the contract, and "Small Data Models" for the high-precision tasks, like extracting exact pricing dates or checking if a specific liability cap was met.

When evaluating these tools, look for an extensible user interface. You want a system that allows your team to adapt to changing business conditions without needing a six-month implementation project. If a tool takes half a year to integrate with your ERP, you've already lost the agility that AI was supposed to give you.

What is the biggest risk in an LLM vendor contract?

The biggest risk is model drift. Many companies sign SaaS-style contracts that guarantee uptime but say nothing about performance quality. When the model's accuracy drops over time, the vendor isn't contractually obligated to fix it, leaving the business with a degraded tool it is still paying for.

How should I handle data ownership with an AI provider?

You must explicitly distinguish between your input data, the model's weights, and the generated output. Ensure the contract states that you own the outputs and that your input data cannot be used to train the vendor's base models without explicit, separate consent and compensation.

Are standard liability caps sufficient for AI?

No. Standard caps (1-2x annual fees) are usually too low for the types of risks AI introduces. You should push for tiered liability, with significantly higher caps for bias-related damages and uncapped liability for major security breaches or data leaks.

How do I prevent vendor lock-in?

Include interoperability clauses that require the vendor to provide data in a standard, portable format. Additionally, negotiate a clear exit strategy that defines exactly how your data will be returned and the timeline for transitioning to a new provider.

What metrics should be in an AI SLA?

Move beyond uptime to include model accuracy thresholds, monthly drift limits (e.g., less than 2% degradation), and explainability percentages (e.g., 80% of decisions must be interpretable by a human).