Rolling out generative AI, a technology that creates new content like text, images, or code based on patterns learned from vast datasets, without a solid oversight structure is like handing the keys to a high-performance car to someone who has never driven. The speed is there, but so is the crash risk. By mid-2025, nearly 80% of Fortune 500 companies had established some form of AI governance committee: a cross-functional team responsible for overseeing the ethical development, deployment, and operation of artificial intelligence systems within an organization. This wasn't just box-ticking; it was survival. With regulations like the EU AI Act tightening and internal risks spiking, these committees became the central nervous system for managing AI adoption.

If you are looking to build one, you aren't starting from scratch; you're building on a proven model. But getting the composition, the decision-making authority (RACI), or the meeting rhythm (cadence) wrong can turn your committee into a bureaucratic bottleneck instead of a strategic accelerator. Here is how to structure a governance body that actually works.

The Core Composition: Who Needs a Seat at the Table?

A common mistake is treating AI governance as solely an IT or legal problem. It isn't. Effective committees require a specific mix of perspectives to avoid blind spots. Research shows that committees lacking diverse technical expertise suffer 73% more algorithmic bias incidents. You need representation across seven critical domains to cover all bases.

- Legal: They own regulatory oversight. Their job is to ensure every use case complies with current laws, from data privacy acts to emerging AI-specific regulations.

- Ethics and Compliance: These members ensure alignment with broader organizational values and ethical standards, preventing reputational damage.

- Privacy: Focused specifically on lawful data processing. They review whether the data feeding the models respects user consent and anonymity requirements.

- Information Security and Architecture: They safeguard the systems. They look for vulnerabilities in how models are deployed and protected against attacks.

- Research and Development (R&D): Providing technical insights. They explain what the technology can and cannot do, bridging the gap between hype and reality.

- Product Management: Representing user needs. They ensure the AI solutions actually solve real problems for customers or employees.

- Executive Leadership: Setting strategic direction. Usually a C-suite sponsor, they provide the resources and authority needed to enforce decisions.

Without this full spectrum, you leave gaps. For instance, a committee heavy on legal but light on engineering might approve a tool that is legally sound but technically prone to "model drift," where the AI's performance degrades over time. OneTrust’s data indicates that adding engineering representation reduces these drift incidents by 52%.

Defining Authority with the RACI Framework

Having the right people is useless if they don't know who decides what. Ambiguity leads to delays. The RACI framework, a responsibility assignment matrix used in project management to clarify roles by categorizing them as Responsible, Accountable, Consulted, or Informed, solves this by mapping out exactly who does the work, who signs off, who gives input, and who just gets the update.

In a typical generative AI governance setup, the roles break down like this:

| Role | RACI Status | Primary Responsibility |
|---|---|---|
| Committee Chair (C-Suite) | Accountable | Ultimate ownership of all decisions. The buck stops here. |
| Legal Team | Responsible | Executing compliance verification and drafting policies. |
| Privacy Officers | Consulted | Providing expert advice on data-related matters before approval. |
| Business Units | Informed | Receiving final approvals or rejections to proceed with implementation. |

The most critical element here is veto power. Experts like Dr. Rumman Chowdhury emphasize that effective committees must have explicit authority to halt deployments. If Legal or Security raises a red flag, the "Responsible" party needs the power to stop the project, not just suggest changes. Without this, the committee becomes advisory rather than governing, which defeats the purpose.
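The RACI table and the veto rule above can be captured in a few lines of code. The following is a minimal sketch, not a prescribed implementation: the names `RACI_MATRIX`, `VETO_HOLDERS`, `Deployment`, and `accountable_roles` are hypothetical, and the exact set of veto-holding roles is an assumption that your charter would need to define.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Raci(Enum):
    RESPONSIBLE = "R"
    ACCOUNTABLE = "A"
    CONSULTED = "C"
    INFORMED = "I"


# Role-to-status mapping taken from the table above.
RACI_MATRIX = {
    "Committee Chair": Raci.ACCOUNTABLE,
    "Legal Team": Raci.RESPONSIBLE,
    "Privacy Officers": Raci.CONSULTED,
    "Business Units": Raci.INFORMED,
}

# Assumption: which roles hold a veto is a charter decision; this set is illustrative.
VETO_HOLDERS = {"Committee Chair", "Legal Team", "Information Security"}


@dataclass
class Deployment:
    name: str
    red_flags: dict[str, str] = field(default_factory=dict)  # role -> reason

    def raise_flag(self, role: str, reason: str) -> None:
        """Record a concern raised by a role."""
        self.red_flags[role] = reason

    def is_blocked(self) -> bool:
        """A deployment is halted as soon as any veto holder has raised a flag."""
        return any(role in VETO_HOLDERS for role in self.red_flags)


def accountable_roles(matrix: dict[str, Raci]) -> list[str]:
    """Return the roles marked Accountable; a healthy matrix has exactly one."""
    return [role for role, status in matrix.items() if status is Raci.ACCOUNTABLE]
```

In this sketch a flag from a role outside `VETO_HOLDERS` is recorded but does not stop the project, which mirrors the difference between an advisory voice and a governing one.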

Choosing Your Governance Model

Not every organization operates the same way, and your committee structure should reflect your company size and culture. There are three main models emerging in the market, each with distinct trade-offs.

The Centralized Model features a single enterprise-wide committee with authority to approve or block all deployments. Adopted by about 42% of organizations, this approach offers superior control. Companies using this model report 92% fewer regulatory incidents. However, it requires 30% more executive time investment because all roads lead to Rome. It works best for high-risk applications where consistency is non-negotiable.

The Federated Model balances central oversight with business-unit-specific subcommittees. Used by 38% of enterprises, this is often the sweet spot for large corporations. Microsoft reported 44% faster deployment cycles with this model while maintaining compliance. It allows local teams to move quickly on low-risk projects while still adhering to global standards.

The Decentralized Model delegates primary governance to individual business units with only lightweight central coordination. While this looks efficient (showing 68% higher efficiency for low-risk apps), it carries significant danger. Analysis of 127 organizations showed this structure resulted in 57% higher compliance violations. Unless you have a mature culture of self-regulation, this model is risky for sensitive use cases.

Setting the Cadence: How Often Should You Meet?

A governance committee that meets too rarely loses touch with reality; one that meets too often becomes a burden. The solution is a tiered cadence.

- Executive Level (Quarterly): Convene every 90 days. Focus on strategy, high-level policy updates, and reviewing aggregate performance metrics. This is where the "big picture" lives.

- Operational Working Groups (Bi-weekly): Meet every 14 days. These groups assess individual use cases, review specific risk assessments, and handle day-to-day operational issues.

- Emergency/Time-Sensitive Approvals (Ad-hoc): Use electronic voting mechanisms for urgent decisions. Leading organizations enable approvals within 72-hour windows for critical initiatives that can't wait for a scheduled meeting.

This rhythm ensures that strategic alignment doesn't get bogged down by tactical details, while tactical teams don't have to wait months for guidance. JPMorgan Chase successfully reviewed 287 generative AI use cases with an 85% approval rate by using this structured approach, demonstrating that speed and safety aren't mutually exclusive.
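For teams that want to put this cadence on a calendar, the three tiers reduce to two fixed intervals and one deadline. Here is a minimal sketch under stated assumptions: the names `CADENCE_DAYS`, `EMERGENCY_WINDOW`, `upcoming_meetings`, and `emergency_deadline` are hypothetical, and the 72-hour window is treated as elapsed clock time rather than business hours.

```python
from __future__ import annotations

from datetime import date, datetime, timedelta

# Intervals taken from the tiers above: quarterly (90 days) and bi-weekly (14 days).
CADENCE_DAYS = {
    "executive": 90,
    "working_group": 14,
}

# Assumption: the 72-hour emergency window is counted in elapsed hours.
EMERGENCY_WINDOW = timedelta(hours=72)


def upcoming_meetings(tier: str, start: date, count: int) -> list[date]:
    """Return `count` meeting dates for a tier, beginning with `start`."""
    step = timedelta(days=CADENCE_DAYS[tier])
    return [start + step * i for i in range(count)]


def emergency_deadline(submitted_at: datetime) -> datetime:
    """Latest time by which an ad-hoc electronic vote should be closed."""
    return submitted_at + EMERGENCY_WINDOW
```

For example, `upcoming_meetings("working_group", date(2025, 1, 6), 3)` yields 6 January, 20 January, and 3 February 2025.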

Streamlining the Workflow: From Intake to Approval

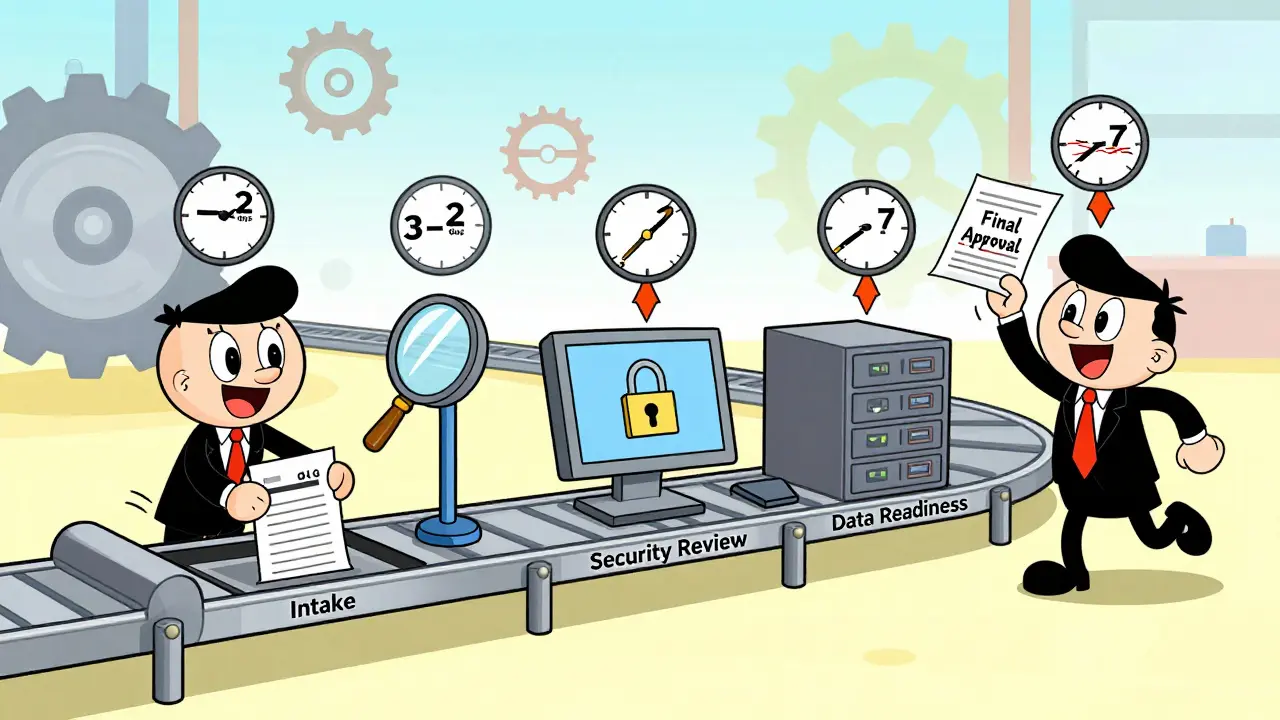

Even the best committee fails if the process is clunky. Standardized workflows are essential to prevent the "bureaucratic bottleneck" effect, which plagues 61% of organizations with unstructured processes. A typical effective workflow includes defined stages with clear timeboxes:

- Intake (2-5 business days): The business unit submits a proposal detailing the use case, data sources, and expected outcomes.

- Risk Tiering (3-7 days): The committee categorizes the risk level (Low, Medium, High). This step determines how rigorous the subsequent reviews will be.

- Privacy and Security Review (5-10 days): Deep dive into data handling and system vulnerabilities. This is where the "Consulted" parties in the RACI matrix do their heavy lifting.

- Data Readiness Checks (3-5 days): Verifying that the data quality and availability meet the model's requirements.

- Final Approval (2-3 days): The "Accountable" chair makes the final call based on the compiled feedback.

Total cycle time typically ranges from 15 to 25 days. For context, practitioners note that clear risk categorization frameworks can reduce approval times from 45 days to just 12. Speed comes from clarity, not shortcuts.
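It can help to check the stage timeboxes against the overall target. The sketch below simply adds up the minimum and maximum durations listed above, assuming the stages run strictly one after another; the names `STAGES` and `sequential_cycle_time` are hypothetical. Run end to end, the timeboxes sum to 15 to 30 days, so the quoted upper figure of 25 days presumably reflects that stages rarely all hit their maximum, or that some reviews overlap. That reading is an interpretation, not something the figures above state.

```python
# (stage name, minimum days, maximum days), as listed in the workflow above.
STAGES = [
    ("Intake", 2, 5),
    ("Risk Tiering", 3, 7),
    ("Privacy and Security Review", 5, 10),
    ("Data Readiness Checks", 3, 5),
    ("Final Approval", 2, 3),
]


def sequential_cycle_time(stages):
    """Sum the best-case and worst-case durations, assuming no stage overlap."""
    best_case = sum(low for _, low, _ in stages)
    worst_case = sum(high for _, _, high in stages)
    return best_case, worst_case


best, worst = sequential_cycle_time(STAGES)
print(f"Sequential cycle time: {best} to {worst} days")
```

If your own worst case lands well above the target, the two levers are tighter timeboxes or running the privacy/security review and the data readiness checks in parallel.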

Overcoming Common Implementation Challenges

Building the committee is only half the battle; keeping it effective is the other. Three hurdles stand in the way: skill gaps, engagement, and cross-departmental friction.

First, the learning curve is steep. Non-technical members need 20-25 hours of specialized training to effectively evaluate generative AI risks. Technical members need 15-20 hours to understand regulatory requirements. Don't assume existing knowledge applies. Invest in this training upfront.

Second, maintaining engagement is hard. 59% of organizations struggle with committee fatigue. To combat this, focus on outcomes rather than pure oversight. Gartner found that outcome-focused committees accelerated AI adoption by 2.3x. Frame meetings around enabling business value safely, not just blocking risks.

Finally, anticipate friction. Cross-departmental conflicts occur in 68% of implementations. Establish clear escalation paths early. If Legal disagrees with Product, who breaks the tie? The RACI chart should answer this immediately. An executive sponsor, present in 93% of successful implementations, provides the necessary weight to resolve these disputes quickly.

How long does it take to establish an effective AI governance committee?

Establishing an effective committee typically requires 8-12 weeks of preparatory work. This includes stakeholder mapping, charter development, and role definition. Larger enterprises with over 10,000 employees may need 30% more time due to increased complexity and stakeholder count.

What is the difference between centralized and federated AI governance models?

A centralized model uses a single enterprise-wide committee for all approvals, offering stricter control but requiring more executive time. A federated model combines central oversight with business-unit subcommittees, balancing speed and compliance. Federated models often see faster deployment cycles while maintaining strong governance.

Why is the RACI framework important for AI governance?

The RACI framework clarifies who is Responsible, Accountable, Consulted, and Informed for each decision. This prevents ambiguity, ensures that critical roles like Legal and Privacy are consulted, and guarantees that a single person (the Accountable chair) has the authority to make final calls, including vetoes.

How often should an AI governance committee meet?

A tiered approach is recommended. Executive-level committees should meet quarterly to review strategy and policy. Operational working groups should meet bi-weekly to assess specific use cases. Emergency approvals should be handled via ad-hoc electronic voting within 72 hours.

What are the key roles required in a generative AI governance committee?

Effective committees include representatives from Legal, Ethics and Compliance, Privacy, Information Security, Research and Development, Product Management, and Executive Leadership. This cross-functional mix ensures comprehensive coverage of regulatory, ethical, technical, and business risks.

Can a decentralized AI governance model be effective?

While decentralized models show higher efficiency for low-risk applications, they carry significantly elevated risk exposure. Studies show a 57% higher rate of compliance violations in decentralized structures compared to centralized ones. They are generally not recommended for sensitive or high-impact AI use cases.

How much training do committee members need?

Non-technical members typically require 20-25 hours of specialized training to evaluate AI risks effectively. Technical members need 15-20 hours to understand regulatory and compliance requirements. This investment is crucial to prevent blind spots and ensure informed decision-making.

What is the typical timeline for an AI use case approval?

With a standardized workflow, the total cycle time for approval ranges from 15 to 25 days. This includes intake (2-5 days), risk tiering (3-7 days), privacy/security review (5-10 days), data readiness checks (3-5 days), and final approval (2-3 days).

honestly this is just common sense but ppl forget it. u need legal and tech on the same page or its gonna be a mess. i seen too many projects die because nobody knew who was in charge.

another corporate buzzword salad post. 'governance committee' sounds like something from a dystopian novel not a tech strategy. why do we need more meetings to slow down innovation? typical bureaucracy trying to strangle progress with red tape and pointless paperwork. nobody reads these things anyway.

I think the RACI matrix is actually super helpful here. It stops people from blaming each other when things go wrong. Having clear roles makes everything smoother.

The problem, of course, is that most companies treat ethics as an afterthought rather than a core component; this is morally indefensible! Furthermore, the lack of rigorous oversight leads to catastrophic failures which could have been prevented by proper governance structures. One must ask: are we truly valuing human dignity above profit margins? The answer is often a resounding no! We must demand better standards, not just for compliance but for our collective conscience. Ignoring these risks is simply negligent behavior on the part of leadership teams everywhere!

the whole idea of a centralized model is terrifyingly authoritarian and completely ignores the nuance of local context. you cannot govern complex systems with a blunt instrument. it creates bottlenecks that stifle creativity and lead to stagnation. the federated approach is the only way to maintain agility without sacrificing control entirely. otherwise you end up with a bloated bureaucracy that does nothing but block progress.

This is a great guide. I really like the part about training non-technical members. It shows respect for everyone's role in the process. Good luck to anyone setting this up!

In India we see similar challenges with data privacy laws. The GDPR influence is strong here too. Cross-functional teams work best when there is trust between departments. Legal and Tech must talk early.

We should be focusing on American AI dominance not getting bogged down by EU regulations. The US needs to move fast and break things. These committees are just excuses for lazy management to avoid taking responsibility for real innovation. Let our engineers build what they want without so much interference from bureaucrats who don't understand technology.

The tiered cadence is key. You cant meet weekly on strategy and daily on ops. It burns people out. Keep execs quarterly and working groups bi-weekly. That balance keeps momentum without killing productivity. Also the veto power part is critical for security teams.

It is indeed imperative to establish a robust framework for artificial intelligence governance. The inclusion of diverse perspectives within the committee structure is not merely advisable but essential for comprehensive risk mitigation. Organizations that fail to implement such rigorous oversight mechanisms may find themselves vulnerable to significant reputational and financial liabilities. Therefore, adherence to these established protocols is recommended for optimal operational integrity.