Imagine asking your company’s internal AI assistant a simple question about quarterly sales figures. Instead of giving you the numbers, it accidentally spills confidential customer records or unreleased product strategies into the chat window. This isn’t just a hypothetical nightmare; it’s a real risk that happens when organizations rush to adopt Retrieval-Augmented Generation (RAG) is a framework combining retrieval systems with generative language models to improve output accuracy by accessing external knowledge sources without proper safeguards.

In early 2024, Thales reported a staggering 73% increase in RAG security incidents among Fortune 500 companies alone. The problem? Most teams focus on making the AI sound smart, not keeping the data safe. If you’re building or managing an AI workflow that touches private documents, you need to treat security as a core feature, not an afterthought. Here is how to protect your sensitive information from ingestion to generation.

The Hidden Vulnerabilities in Your RAG Pipeline

RAG works by pulling relevant documents from a vector database and feeding them to a large language model (LLM) to generate an answer. It sounds straightforward, but every step in this chain introduces potential leak points. Palo Alto Networks found that 68% of unsecured RAG implementations leaked sensitive data during penetration testing. Why? Because standard security tools weren’t built for this specific architecture.

First, there’s the ingestion phase. When you upload thousands of PDFs, emails, or spreadsheets into your system, you often assume they are clean. But without pre-ingestion discovery, sensitive data like social security numbers or medical records might slip through undetected. Then comes the storage layer. Vector databases store numerical representations (embeddings) of your text. If these embeddings aren’t encrypted or access-controlled, anyone with database access could reverse-engineer the original content.

Finally, there’s the generation phase. Even if your storage is secure, the LLM itself can be tricked. Attackers use techniques like prompt injection to bypass safety filters and extract hidden context. A study by Zhang et al. at ACL 2024 showed that untargeted attacks could extract 37% of sensitive information from unsecured systems. You need defenses at every single layer.

Seven Layers of Defense for Secure RAG

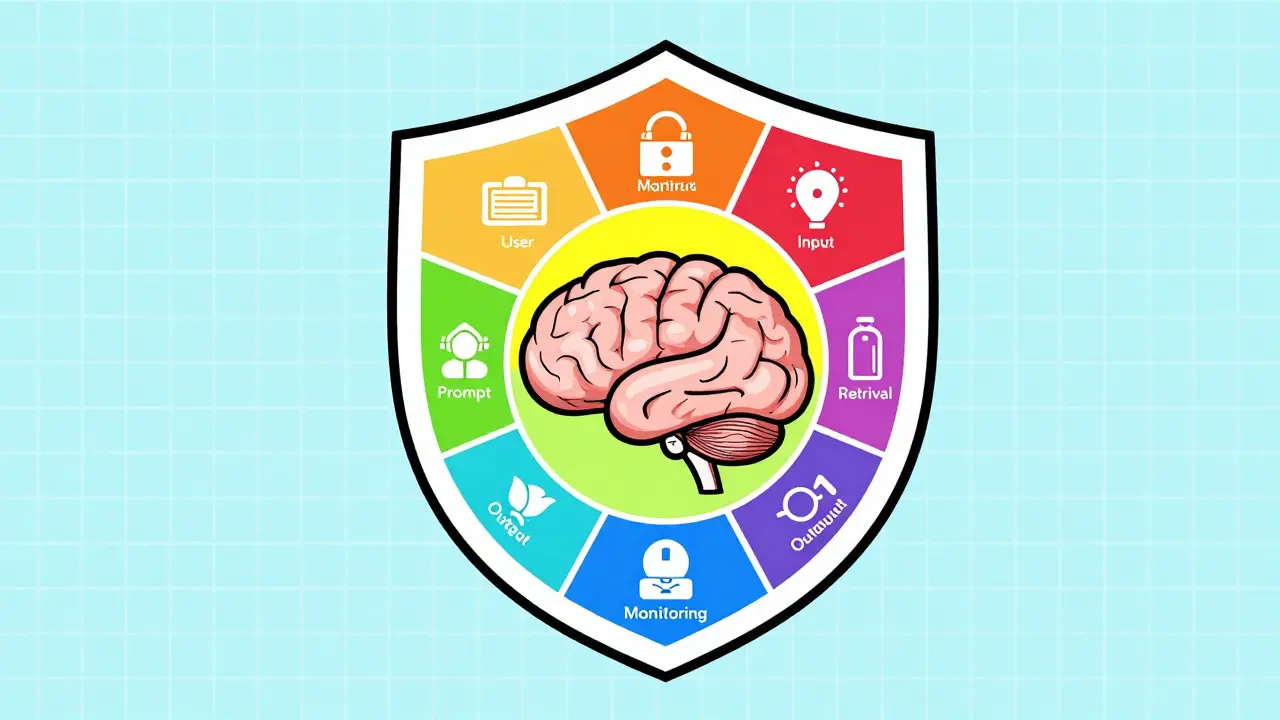

To build a robust shield around your AI workflows, you should implement a multi-layered security strategy. The USC Security Institute outlines seven critical layers that cover the entire lifecycle of a query:

- User Layer: Strict authentication and authorization ensure only approved personnel can interact with the system.

- Input Layer: Sanitization filters strip out malicious code or harmful instructions before they reach the model.

- Prompt Layer: Structured templates with guardrails prevent users from deviating into unsafe territory.

- Retrieval Layer: Secure vector stores with Role-Based Access Control (RBAC) ensure users only retrieve documents they are permitted to see.

- Model Layer: Constraints on the LLM itself limit what kind of information it is allowed to generate.

- Output Layer: Post-processing checks scan the final response for accidental data leaks before showing it to the user.

- Monitoring Layer: Anomaly detection systems watch for unusual access patterns in real-time.

Implementing all seven layers might sound heavy, but it’s necessary. For example, metadata filtering in the Retrieval Layer is crucial. If a junior employee asks a question, the system must filter out documents tagged “Confidential” before they even reach the LLM. AWS Bedrock documentation notes that while this filtering can reduce query throughput by 8-12% in high-volume environments, the trade-off is essential for compliance.

Choosing Between Commercial and Open-Source Solutions

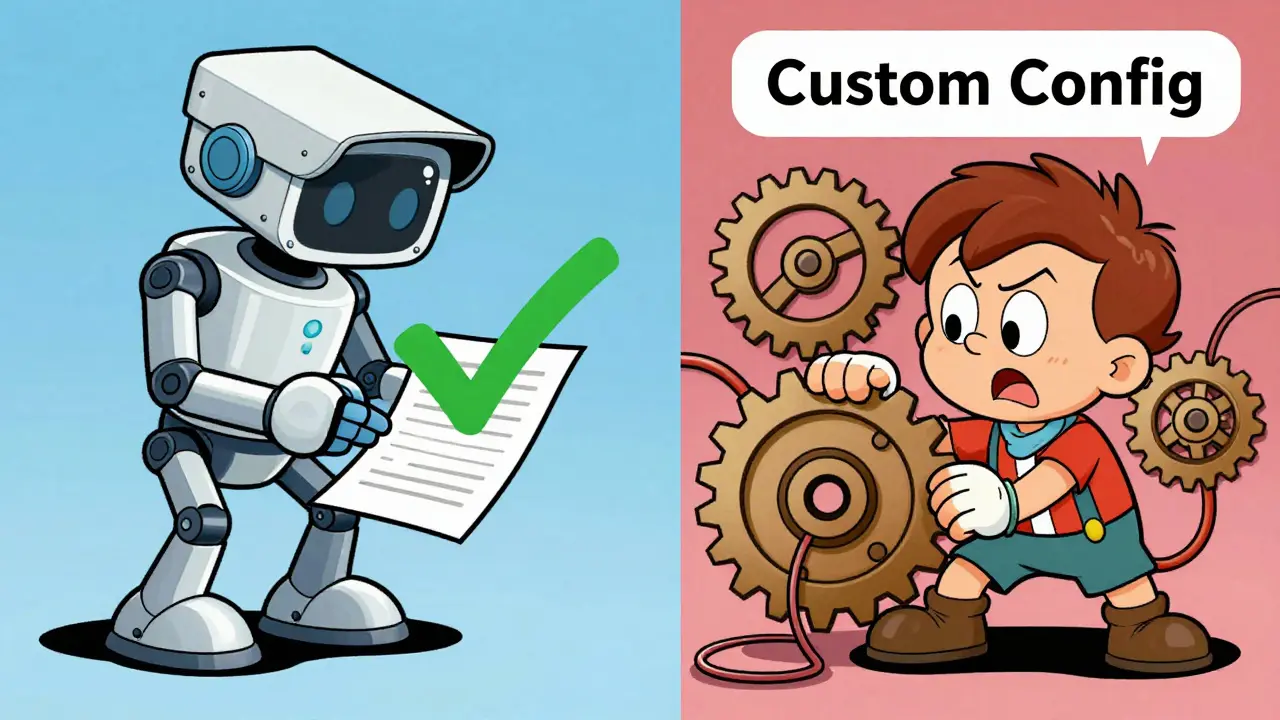

You have two main paths for securing your RAG infrastructure: commercial platforms or open-source tools. Each has distinct advantages depending on your team’s size and budget.

| Feature | Commercial Solutions (e.g., Thales CPL) | Open-Source Tools (e.g., LangChain Guard) |

|---|---|---|

| Data Discovery Speed | 1.2 million files/hour | 350,000 files/hour |

| Annual Cost Estimate | $28,500+ | $8,200 (including hosting/maintenance) |

| Pre-Ingestion Scanning | Comprehensive, automated | Requires custom configuration |

| Support & Compliance | Built-in GDPR/HIPAA reports | Manual audit trails needed |

| Latency Impact | 15-25ms added | Variable, often higher if poorly optimized |

Commercial solutions like Thales CipherTrust is an enterprise-grade data security platform offering automated discovery, classification, and encryption for sensitive data provide speed and ease of use. They scan massive datasets quickly and offer ready-made compliance reports. However, they come with a price tag. Open-source alternatives give you flexibility and lower upfront costs, but they demand significant engineering effort to maintain and secure. If you lack dedicated AI security engineers, the commercial route is usually safer.

Real-World Performance and Trade-offs

Security always adds some friction. In RAG systems, this friction shows up as latency. Fortanix’s technical analysis indicates that tokenizing and encrypting vector embeddings adds 12-18ms of overhead per query. While this seems small, it compounds in high-frequency applications. A chatbot handling hundreds of queries per second needs to balance speed against safety.

Another challenge is classification drift. Documents change over time. A file marked “Public” today might contain sensitive client data next month. JPMorgan Chase reported a 22% failure rate in identifying newly created sensitive documents during periods of market volatility because their static classification rules couldn’t keep up. To combat this, implement continuous scanning jobs that re-evaluate document sensitivity periodically, rather than relying solely on initial ingestion tags.

Also, consider the human element. Complex Role-Based Access Control (RBAC) configurations frustrate users. AWS Bedrock reviews show that 41% of negative feedback relates to difficult RBAC setup. Simplify permissions where possible. Use group-based policies instead of individual ones, and automate policy enforcement where technology allows. AWS recently released automated policy features that reduced misconfiguration risks by 63%, proving that usability and security can coexist.

Compliance and Regulatory Pressures

The regulatory landscape is tightening fast. The EU AI Act, effective August 2025, mandates “appropriate technical and organizational measures” for AI privacy risks. Similarly, California’s Privacy Rights Act amendments now specifically address AI data handling. Ignoring these isn’t an option.

In healthcare, the stakes are highest. HIPAA-compliant RAG implementations reduced protected health information (PHI) exposure by 97% in a Mayo Clinic case study. How? By using strict metadata filtering and end-to-end encryption. Financial services follow close behind, with 63% of enterprises adopting formal RAG security protocols. If you operate in regulated industries, your RAG security strategy must align with existing compliance frameworks. Don’t build a siloed security solution; integrate it with your broader governance structure.

Step-by-Step Implementation Guide

Getting started doesn’t require a complete overhaul. Follow this five-phase process adapted from industry best practices:

- Data Discovery: Use tools like CipherTrust or open-source scanners to identify sensitive content in your existing repositories. Tag PII, PHI, and financial data clearly.

- Policy Definition: Decide what happens to each data type. Should it be masked? Tokenized? Fully encrypted? Define clear rules for each category.

- Integration: Connect your security policies to your vector database (Pinecone, Weaviate, or Amazon OpenSearch). Ensure RBAC is enforced at the retrieval level.

- Monitoring Setup: Deploy anomaly detection to flag unusual query patterns. Set thresholds, such as limiting users to 150 queries per hour to prevent abuse.

- Validation: Run penetration tests. Try to break your own system using prompt injection techniques. Fix any gaps before going live.

This process typically takes 3-4 weeks for organizations already using cloud ecosystems like AWS, but hybrid environments may take 8-10 weeks. Start small with a pilot project to refine your policies before scaling across the entire organization.

Future Trends and Skills Gap

The field is evolving rapidly. By Q3 2026, expect integration with confidential computing, which processes data in encrypted memory states. Additionally, standardized RAG security APIs are being developed by alliances including major tech firms, which will simplify cross-platform compatibility.

However, talent remains a bottleneck. LinkedIn’s 2025 Workplace Learning Report shows a 210% year-over-year growth in demand for embedding security skills. Many organizations struggle to hire staff who understand both AI mechanics and traditional cybersecurity. Invest in training your current team. Focus on vector database administration and compliance framework knowledge, as these are cited as critical skills by hiring managers.

What is the biggest security risk in RAG systems?

The biggest risk is data leakage through unauthorized retrieval. If your vector database lacks proper access controls, the LLM might pull sensitive documents that the user shouldn't see, leading to accidental exposure of private information in the generated response.

How much latency does RAG security add?

Properly implemented security layers typically add 15-25ms of latency per query. Encryption and tokenization contribute 12-18ms of this overhead. While noticeable in high-frequency applications, this trade-off is generally accepted for the enhanced protection it provides.

Is open-source RAG security enough for enterprises?

It depends on your resources. Open-source tools are cheaper but require significant engineering effort to configure and maintain securely. Commercial solutions offer faster deployment and built-in compliance reporting, making them better suited for teams lacking specialized AI security expertise.

How do I prevent prompt injection attacks?

Use structured prompt templates with strict guardrails at the Input and Prompt layers. Implement input sanitization to block malicious commands, and use post-processing checks to verify that outputs don't contain unexpected sensitive data. Regular penetration testing helps identify vulnerabilities.

Which vector databases support RAG security best?

Major vector databases like Pinecone, Weaviate, and Amazon OpenSearch all support security integrations. Compatibility is generally high, with vendors like Thales reporting 100% compatibility with both self-managed and SaaS options. The key is ensuring you enable Role-Based Access Control (RBAC) and encryption features within your chosen platform.