When you hear that a new AI model has 100 billion parameters, it’s easy to think it’s just bigger - like a larger hard drive or more RAM. But it’s not. What’s really happening is something deeper, more mathematical, and surprisingly predictable. This is where scaling laws come in. They’re not guesses. They’re patterns - baked into how neural networks learn - that show us exactly how performance improves as models grow.

What Scaling Laws Actually Measure

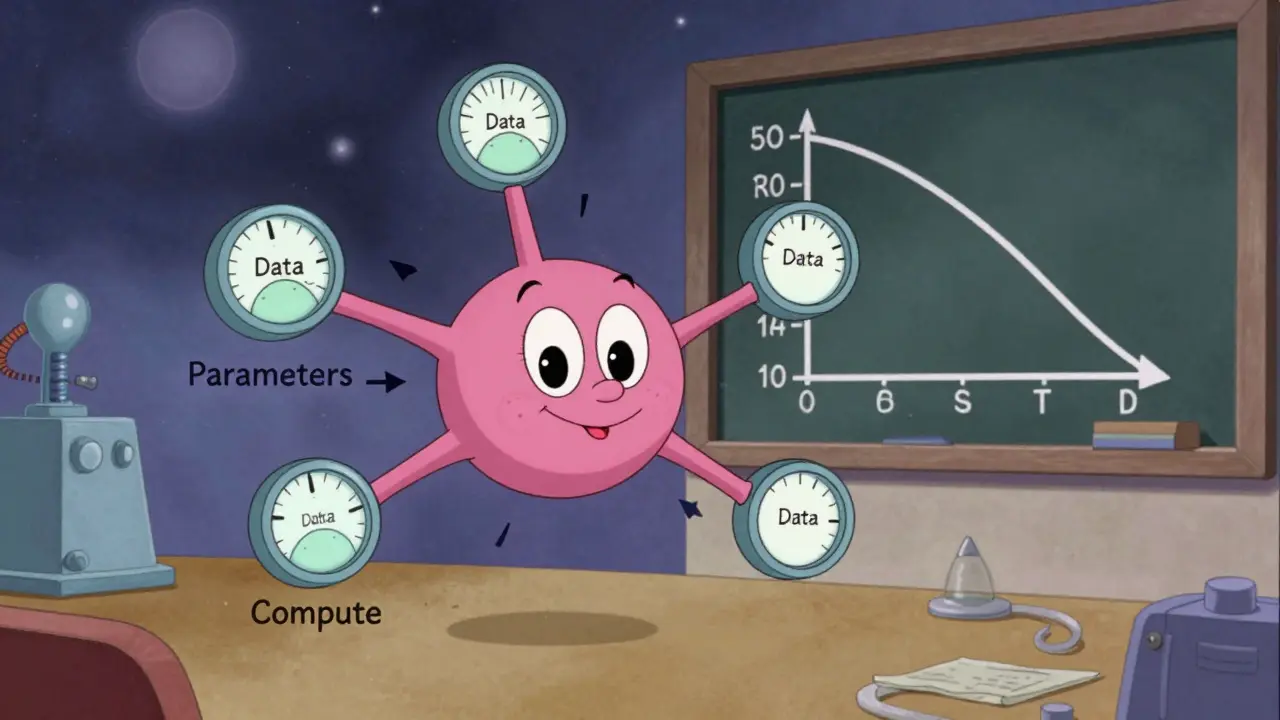

Scaling laws aren’t about making models bigger for the sake of it. They’re about finding a formula that links three things: how many parameters a model has, how much data it’s trained on, and how much compute power is used. And when you plot these against performance, you don’t get chaos. You get a clean, smooth curve.Research from 2020 showed that loss - a measure of how wrong a model’s predictions are - drops predictably as you increase any of those three factors. In fact, the relationship spans over seven orders of magnitude. That means if you train a model with 10 million parameters and another with 10 billion, the performance difference isn’t random. It follows a power law. Double the parameters? You don’t get double the accuracy. You get a predictable, mathematically defined improvement.

This isn’t just theory. It’s what let teams predict GPT-4’s performance before it was even built. By training smaller models, measuring their loss, and fitting a power law, researchers could estimate how a 100x larger model would perform. That saved millions in trial-and-error training costs.

Why More Parameters = Better Performance

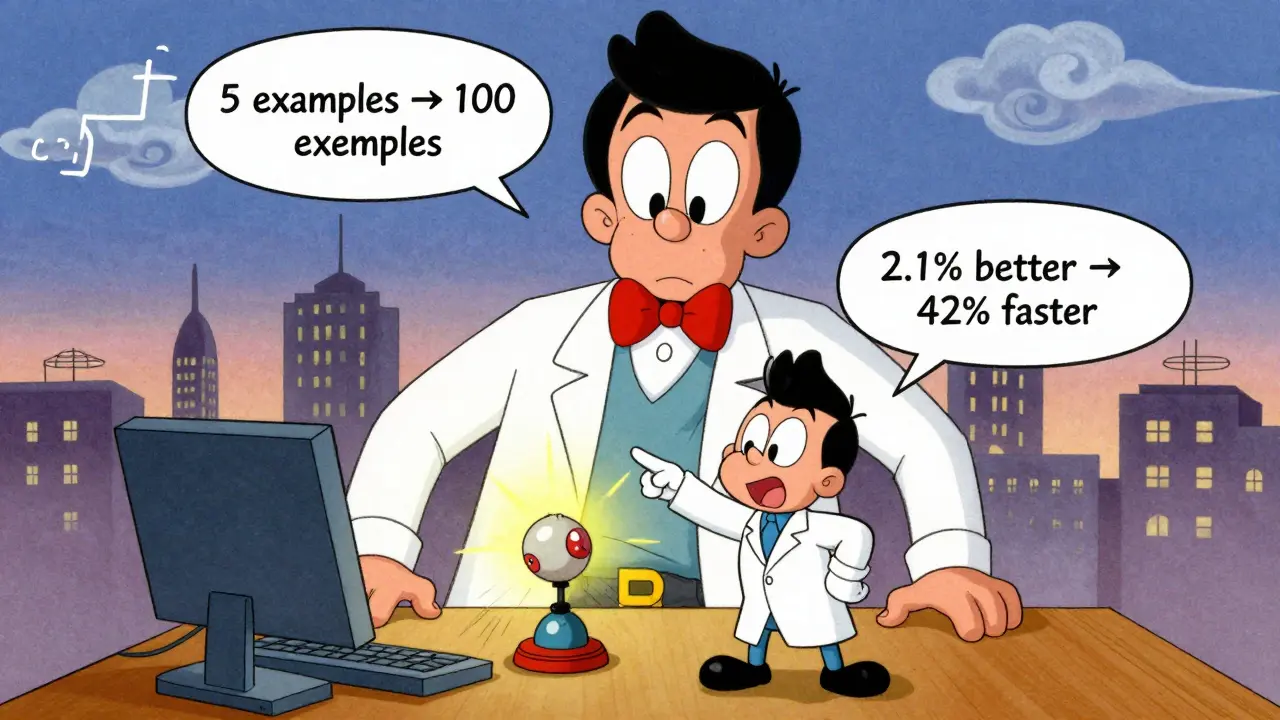

Parameters are the numbers inside a neural network that get adjusted during training. Think of them like tiny dials that store knowledge. More dials mean more room to remember patterns, relationships, and context.But it’s not just memory. Larger models use information more efficiently. GPT-3 showed this clearly: as its parameter count grew, its ability to learn from just a few examples - called few-shot learning - improved smoothly. Smaller models needed hundreds of examples to get the same result. Larger ones got it from five. That’s not luck. It’s scaling in action.

Why? Because with more parameters, the model can represent more complex functions. It doesn’t just memorize. It generalizes. It finds hidden structures in language, code, or images that smaller models simply can’t capture. And when you add enough training data, those structures become clearer. The model doesn’t just get better - it gets smarter in a way that scales linearly with size.

It’s Not Just Parameters - But They’re the Key

You might think: if data and compute matter too, why focus on parameters? Because parameters are the most controllable variable.Training on more data means collecting, cleaning, and filtering trillions of tokens - expensive and slow. More compute means more GPUs, more electricity, more time. But increasing parameters? You can do that by changing a single number in the model architecture. It’s like turning up the volume on a speaker instead of buying a whole new sound system.

Recent studies from 2025 show that scaling laws for parameters plus tokens (the combination of model size and training data) are just as reliable as scaling laws based purely on compute. That means if you want to improve performance, you have options - but parameters give you the biggest bang for your buck.

Take the Panda-1B model, trained on 100 billion tokens. It outperformed LLaMA-3.2-1B by 2.1% across tasks. The Panda-3B version didn’t just beat it - it did 42% better on inference speed while being more accurate. That’s not magic. That’s scaling law optimization.

Scaling Laws Are Changing How Models Are Built

Early scaling laws treated architecture as fixed. You picked a transformer, trained it, and watched how performance changed. Now, it’s flipped. Researchers use scaling laws to choose the architecture.A 2025 study trained over 200 models with sizes from 80 million to 3 billion parameters and training data from 8 billion to 100 billion tokens. They didn’t just measure performance. They used the data to build conditional scaling laws - models that predict not just how performance changes with size, but what architecture works best at each scale.

The result? Models designed using these laws beat standard architectures under the same training budget. They weren’t just slightly better. They were faster, more accurate, and more efficient. That’s the power of using scaling laws not just to predict, but to design.

What This Means for Real-World AI

Scaling laws aren’t just for labs. They’re changing how companies invest in AI.Before scaling laws, training a new model was a gamble. You spent millions, waited months, and hoped it worked. Now, you can run a few small tests, fit a curve, and know with high confidence whether a 10x larger model will deliver value. That’s how you justify spending $50 million on training - because you can predict the return.

It also changes resource allocation. Should you buy more GPUs? Or collect more data? Or build a larger model? Scaling laws give you the answer. For most teams, increasing parameters is the most efficient path - especially if you already have enough data.

Even better, recent work shows that just three hyperparameters explain nearly all variation in model behavior. That means you don’t need a team of PhDs to use scaling laws. A small AI team with access to a few smaller models can make smart scaling decisions.

The Limits and the Future

Scaling laws aren’t magic. They don’t guarantee success. If you don’t have enough data, more parameters won’t help. If your training is noisy, the curve breaks. And there are still limits - we don’t yet know how far we can push these laws before models plateau or behave unpredictably.But here’s the surprising part: research now shows that scaling laws that work for giant models also work for small ones. That’s new. Earlier theories assumed small and large models operated under different rules. They don’t. The same mathematical relationships hold across scales. That’s huge. It means we can use small, cheap experiments to predict what happens at massive scale.

Looking ahead, scaling laws are merging with architecture design, training techniques, and even hardware. We’re moving from "bigger is better" to "bigger is better, and here’s exactly how to build it." That’s not just progress. It’s a new way to build AI - one based on math, not guesswork.

Do scaling laws apply to all types of generative AI, or just language models?

Scaling laws were first discovered in language models, but they’ve been confirmed in image, audio, and video generation models too. The same power-law relationships hold: more parameters and more training data lead to predictable improvements in quality and efficiency. While the exact exponents vary by modality, the core principle - that performance scales smoothly with size - is universal across generative AI.

Can you scale parameters forever?

No - there are practical limits. Training models with trillions of parameters becomes too expensive, too slow, and too energy-intensive. There are also diminishing returns: after a certain point, adding more parameters gives you smaller and smaller gains. But we’re still far from that limit. Current models are in the hundreds of billions; the next leap could be in the trillions. The real question isn’t whether we can scale further, but whether we can scale smarter.

Do scaling laws mean we don’t need better algorithms anymore?

Not at all. Scaling laws help you get more out of existing architectures, but breakthroughs in algorithms - like attention mechanisms, sparse activation, or new training objectives - still drive major leaps. Scaling is about optimization. Innovation is about transformation. The best results come when you do both: use scaling to maximize performance, and new algorithms to redefine what’s possible.

How do scaling laws help startups with limited budgets?

They turn guesswork into strategy. A startup can train five small models with different parameter counts, measure their performance, and fit a scaling law. From that, they can predict how a 10x larger model would perform - without ever training it. This lets them decide whether to invest in scaling up, or if they should focus on data quality or fine-tuning instead. It’s the difference between spending $2 million blindly or $200,000 with confidence.

Is there a downside to relying on scaling laws?

Yes. Scaling laws assume your training data is clean, representative, and well-distributed. If your data is biased, toxic, or repetitive, scaling just makes those problems bigger and more efficient. Also, scaling doesn’t fix poor evaluation methods. A model can look great on standard benchmarks but fail in real-world use. Scaling laws predict performance on a metric - not whether that metric matters.

It’s wild how we’ve gone from "bigger is better" to "bigger is predictable." Scaling laws feel like the universe whispering its secrets through gradients and loss curves. We used to think intelligence was this mystical spark - now it’s just a function of parameters, data, and compute. Not less magical, just… mathematically elegant.

It makes me wonder if consciousness itself is just a scaling artifact. Not that we’ll ever prove that. But the fact that a model with 100B parameters can write a poem that moves people - while a 1B model just repeats clichés - suggests something deeper than pattern matching. Maybe we’re not building AI. Maybe we’re tuning a radio to hear intelligence humming in the background.

Yeah sure, scaling works. But every time someone says "it’s just math," I think of the last 3 startups that blew $2M on scaling a trash dataset and ended up with a model that thinks "climate change is a hoax" and writes poetry in Shakespearean English about Bitcoin.

Parameters don’t fix garbage in, garbage out. You can scale a racist model 1000x and it’ll just be a *really* confident racist.

Eric, you’re not wrong - and that’s why this matters so much. Scaling isn’t a magic wand, it’s a mirror. It doesn’t create intelligence. It amplifies what’s already there.

That’s why data quality isn’t a side note - it’s the foundation. A model with 1 trillion parameters trained on curated, ethical, diverse data could revolutionize education, medicine, accessibility. One trained on scraped Reddit threads and conspiracy blogs? It’ll just be a louder echo of our worst biases.

But here’s the hopeful part: scaling laws let us test this *before* we spend millions. A startup can train 5 small models on different datasets and see which one scales cleanly. That’s power. That’s agency. We’re not just building AI anymore - we’re choosing what kind of world we want it to reflect.

Just wanted to add - the fact that scaling laws hold across modalities is huge. I’ve been working with audio models and yeah, same thing. Double the params, double the clarity. Triple the data, triple the nuance in tone and emotion.

It’s not just language. It’s music, speech, even environmental soundscapes. The math doesn’t care if it’s pixels or waves - it just cares about structure. That’s beautiful. And it means we can borrow insights from one domain to improve another. Cross-pollination FTW.

Scaling laws reveal a profound truth: intelligence emerges not from complexity alone but from the alignment of scale with structure. The uniformity of power-law behavior across architectures and modalities suggests an underlying principle of information compression and representation efficiency. We are not merely engineering systems - we are observing natural laws of learning.

Yet this also implies a responsibility. To scale without ethical grounding is to amplify harm with mathematical precision. The curve does not distinguish between wisdom and noise. It only responds to quantity. The burden of meaning remains ours.