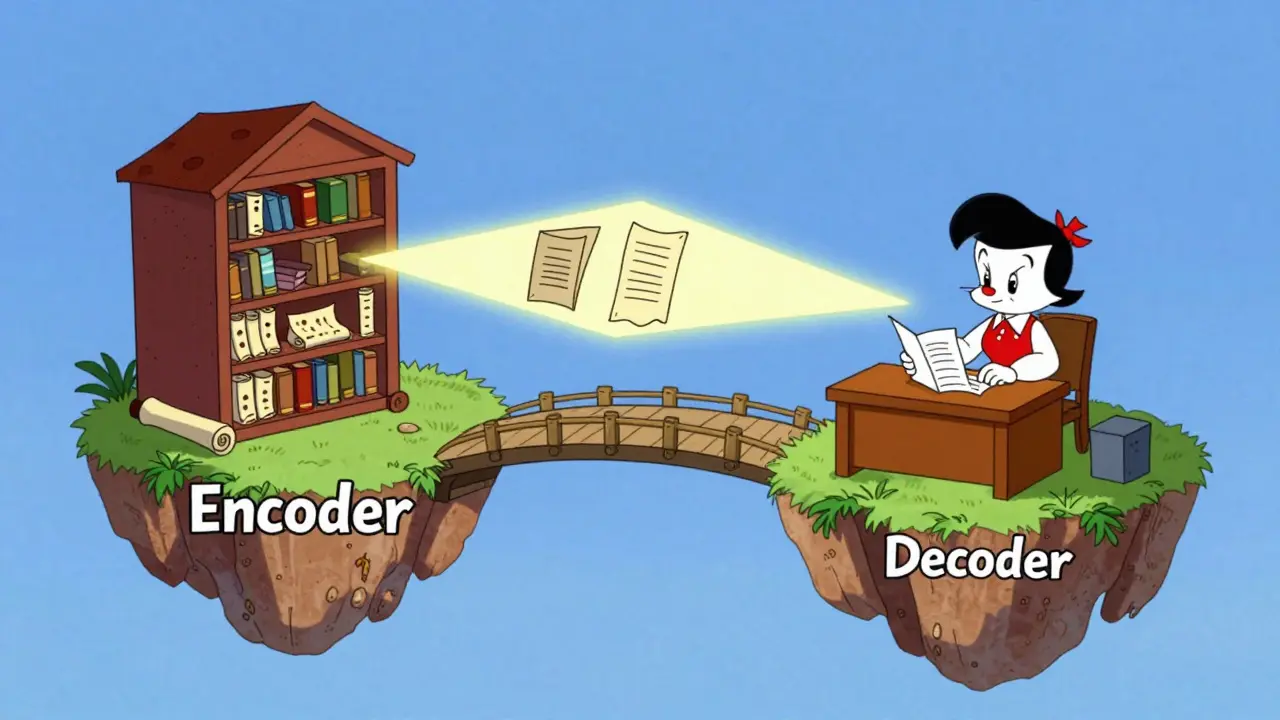

Ever wonder how a translation tool knows exactly which word in a Spanish sentence corresponds to a specific word in English? It isn't just guessing based on the overall vibe of the text. There is a specific architectural bridge called Cross-Attention is a specialized attention mechanism within encoder-decoder transformer architectures that allows the decoder to condition its output on the encoder's processed information. Without this bridge, a decoder would be like a writer trying to summarize a book they've never actually read-they might know how to write sentences, but they have no source material to reference.

The Core Difference: Self-Attention vs. Cross-Attention

To get why cross-attention matters, we first have to look at its sibling, self-attention. In a standard transformer, self-attention is used to understand the internal relationships within a single sequence. If you're analyzing the sentence "The cat sat on the mat because it was tired," self-attention helps the model figure out that "it" refers to the "cat." Both the encoder and the decoder use self-attention to build a coherent understanding of their respective sequences.

Cross-attention is different because it connects two separate worlds. While self-attention looks inward, cross-attention looks across. It allows the decoder to reach back into the encoder's memory and pull out the specific pieces of information needed for the current token being generated. This is the essence of conditioning-the model doesn't just generate text in a vacuum; it generates text conditioned on the source input provided by the encoder.

The Anatomy of a Decoder Layer

In a classic Encoder-Decoder Transformer, the decoder isn't just a mirror of the encoder. It has a very specific three-step process in every single layer to ensure the output is both fluent and accurate. The order of these operations is non-negotiable for the model to work correctly.

- Masked Self-Attention: First, the decoder looks at the tokens it has already generated. The "masking" part is crucial here; it prevents the model from "cheating" by looking at future tokens during training.

- Cross-Attention: This is where the magic happens. The decoder takes its current state and asks the encoder, "Based on what I've written so far, what parts of the original input are most relevant right now?"

- Feed-Forward Network: Finally, the combined information from both self-attention and cross-attention is passed through a position-wise network to refine the representation before moving to the next layer.

The Math Under the Hood: Queries, Keys, and Values

If we peel back the layers, cross-attention relies on three learned projection matrices: $W_Q$, $W_K$, and $W_V$. The critical shift here is where these vectors come from. In self-attention, all three come from the same place. In cross-attention, they are split.

The Queries (Q) are generated from the decoder's state. Think of the query as a search term. The Keys (K) and Values (V) are derived from the encoder's output. The keys are like the labels on a filing cabinet, and the values are the actual documents inside.

The model calculates a score by taking the dot product of the query and the keys, scaling it by $1/\sqrt{d_k}$ to keep the numbers from exploding (which would crash the gradients during training), and applying a softmax function. This results in a probability distribution that tells the model exactly how much weight to give to each part of the encoder's output. The final output is a weighted sum of the value vectors.

| Feature | Self-Attention | Cross-Attention |

|---|---|---|

| Source of Q | Current sequence | Decoder state |

| Source of K, V | Current sequence | Encoder output |

| Purpose | Internal context/syntax | External conditioning |

| Location | Encoder & Decoder | Decoder only |

Real-World Use Case: Machine Translation

In machine translation, cross-attention acts as a dynamic alignment tool. Imagine translating "The big red dog" into French. When the decoder is ready to generate the word "rouge" (red), the cross-attention mechanism will show a high activation score for the word "red" in the encoder's output. It effectively creates a map between the source and target languages on the fly.

One practical challenge here is handling Padding Masks. Since input sentences vary in length, we add padding tokens to make them uniform. If the model attended to these empty tokens, it would waste computational resources and introduce noise. To fix this, the mechanism applies a mask that sets the attention scores of padding tokens to a very large negative number (like -1e9) before the softmax, effectively making them invisible to the decoder.

Expanding to Multimodal Learning

Conditioning isn't just for text-to-text tasks. Cross-attention is the secret sauce for Multimodal Learning, where a model integrates different types of data, like images and text. In an image-captioning model, the encoder might be a Vision Transformer (ViT) processing an image, while the decoder is a text-generating transformer.

There are two main ways developers implement this in libraries like Hugging Face Transformers:

- Concatenation: The outputs from different encoders (e.g., one for text, one for images) are concatenated into one long sequence of key-value pairs. The decoder attends to this entire "knowledge pool" at once.

- Separate Layers: The model uses distinct cross-attention layers for each modality. This gives the model finer control, allowing it to decide, for example, to rely more on the image encoder for visual descriptors and more on a text encoder for grammatical structure.

Avoiding the Bottleneck

Why not just compress the entire encoder output into a single vector and give that to the decoder? Early sequence-to-sequence models did exactly that, and it was a disaster for long sentences. This is known as the "information bottleneck." When you squash a 50-word sentence into one fixed-size vector, you lose the nuances of the beginning and end of the sentence.

Cross-attention solves this by allowing the decoder to look at the full sequence of encoder hidden states. Instead of one summary, it has access to a detailed map. This allows the model to scale to much larger contexts and maintain a high level of precision, regardless of the input length.

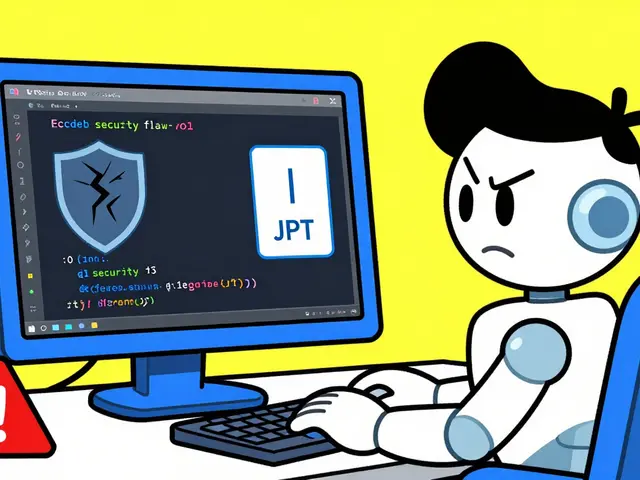

Can a Transformer work without cross-attention?

Yes. Decoder-only models, like GPT-4, do not use cross-attention because they don't have a separate encoder. They rely entirely on masked self-attention. However, they cannot be "conditioned" on a separate encoder output in the same way; instead, they take the prompt and the generated text as a single continuous sequence.

Why is the scaling factor 1/sqrt(d_k) necessary?

As the dimensionality (d_k) of the keys and queries increases, the dot product tends to grow very large in magnitude. This pushes the softmax function into regions where the gradient is extremely small, leading to the "vanishing gradient" problem. Scaling the dot product keeps the values in a range where the softmax remains sensitive, ensuring the model can actually learn.

Where exactly does cross-attention sit in the architecture?

It is located exclusively within the decoder layers. Specifically, it sits between the masked self-attention sub-layer and the feed-forward network sub-layer. This ensures the model first understands its own generated context before seeking relevant information from the encoder.

Does cross-attention increase inference time?

It adds computational overhead compared to a simple self-attention layer because the decoder must perform matrix multiplications against the entire encoder output sequence. However, because these operations are highly parallelizable on GPUs, the impact is manageable compared to the massive jump in quality it provides for translation and multimodal tasks.

How does cross-attention handle different sequence lengths?

Since the Query comes from the decoder and the Keys/Values come from the encoder, the two sequences do not need to be the same length. The dot product only requires the embedding dimensions to match, not the sequence lengths. This is what allows a short English sentence to be translated into a longer French one without any structural mismatch.

Next Steps for Implementation

If you are building a custom transformer, start by ensuring your encoder output is properly cached. Since the encoder only needs to run once per input, you can save its final states and reuse them for every decoder step to save on compute. If you're moving into multimodal territory, experiment with separate cross-attention heads for different data sources to see if the model's interpretability improves.

For those dealing with extremely long sequences where cross-attention becomes too slow, look into sparse attention patterns or linear attention variants. These techniques approximate the attention matrix to reduce complexity from quadratic to linear, allowing you to condition your model on thousands of tokens without running out of VRAM.

For those trying to implement this in PyTorch, remember that the

multi_head_attention_forwardfunction handles the cross-attention by passing the encoder output as thekeyandvalue, while the decoder's current representation serves as thequery. It's a common mistake to swap these or pass the decoder state into all three, which effectively turns your cross-attention back into self-attention.this is cool

Calling this a "bridge" is a simplistic metaphor for people who can't handle the actual linear algebra. The reality is that we're just manipulating high-dimensional manifolds and pretending there's some "magic" happening. It's not a bridge, it's a matrix multiplication. Stop romanticizing the architecture to make it palatable for the masses.

I'll be the odd one out here, but the "filing cabinet" analogy is actually a bit clunky, though admittedly charming in its own quaint way. Moreover, the author's use of "non-negotiable" is a tad hyperbolic, isn't it? While the sequence is standard, the beauty of neural networks is that you can occasionally break the rules and find some bizarre emergent property, although usually, you just end up with a model that hallucinates gibberish. Still, a delightful read for the uninitiated!

I've been spending a lot of time lately thinking about how the scaling factor $1/\sqrt{d_k}$ basically acts as a stabilizer for the whole system, and it's just so fascinating how a small mathematical tweak can prevent the entire training process from collapsing into a void of vanishing gradients. I wonder if there are other ways to normalize this that haven't been widely adopted yet, maybe something more dynamic that changes based on the layer depth, because it feels like we're just following the standard formula without really probing the boundaries of what's possible in high-dimensional space, but then again, if it ain't broke, don't fix it, right?

Oh my goodness, the way the author explains the "information bottleneck" is just absolutely heart-wrenching because it perfectly captures the tragedy of a model trying to remember a whole story from a single tiny vector! It is simply an absolute crime that early sequence-to-sequence models suffered such a fate, and I am just so beyond thrilled to see how cross-attention rescued the industry from that nightmare by allowing the decoder to gaze back at the full, glorious sequence of the encoder's hidden states in all its majesty! It's practically a cinematic redemption arc for machine translation!

I find it quaint that people still consider the basic transformer architecture "cutting edge" in this day and age. Anyone with a modicum of interest in current research knows that we've moved far beyond these rudimentary conditioning methods. The distinction between self and cross attention is essentially undergraduate material at this point, yet here we are, treating it like a revelation. It's almost cute how the post tries to simplify it for the layman.

I really appreciate how the post breaks down the multimodal aspect because it's such a huge area of growth right now, and seeing the difference between concatenation and separate layers helps me realize why some models feel more coherent than others when they're trying to describe an image. I've been trying to coach some students through the Hugging Face library and they always struggle with the concept of the encoder output being a cached memory, so having this clear explanation of the cross-attention mechanism as a dynamic map really helps bridge that gap in understanding and allows them to visualize the data flow much more effectively than just looking at a codebase.