Managing the cost of running Large Language Models (LLMs) isn’t just a technical problem-it’s a business one. If you’re using AI to power chatbots, content generation, or automated workflows, you’ve probably seen your cloud bill spike unexpectedly. That’s not a glitch. It’s a symptom of uncontrolled token usage. The good news? With the right strategy, you can cut your LLM costs by 30% to 50% without sacrificing quality. Here’s how.

How LLM Pricing Actually Works

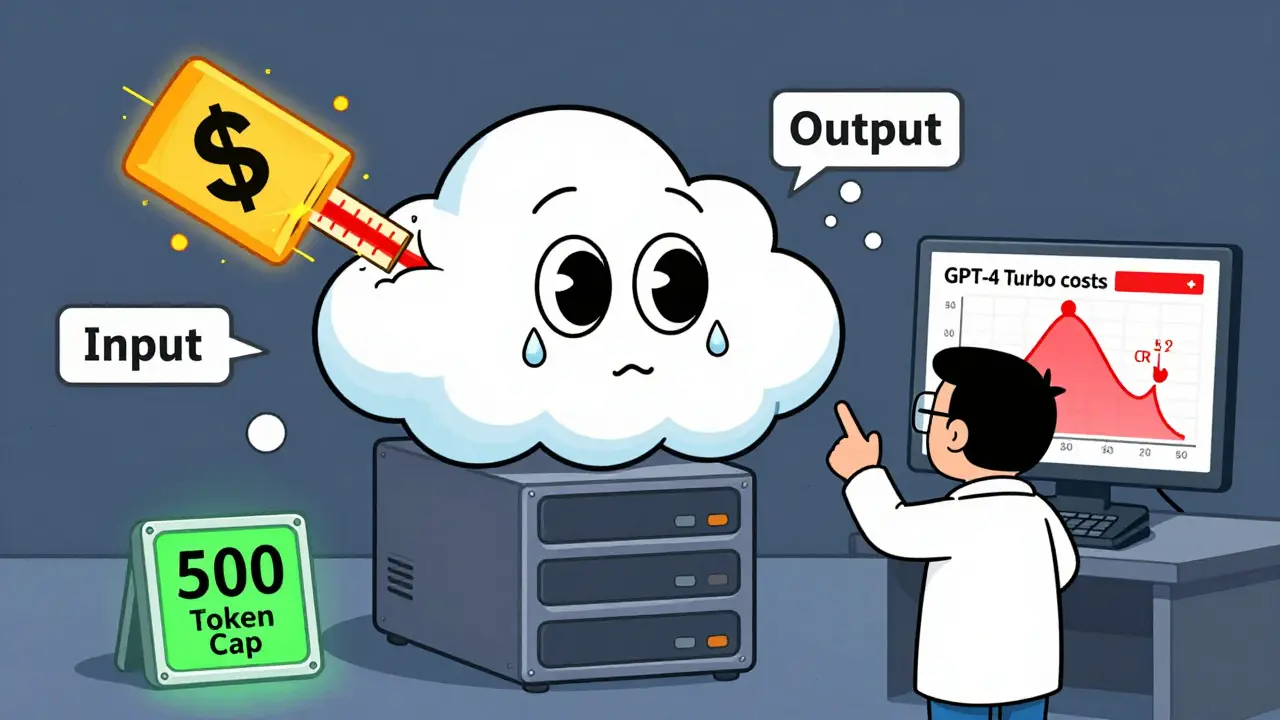

Most LLM providers don’t charge by the minute or by the user. They charge by tokens. A token is roughly a word or part of a word. In English, 1,000 tokens equal about 750 words. That means a single customer service reply might use 200 tokens for the input (your prompt) and another 300 for the output (the AI’s answer). That’s 500 tokens total.

Providers like OpenAI, Anthropic, and Google all use this model. Here’s what you’re paying right now (as of Q1 2026):

| Model | Input Cost | Output Cost | Type |

|---|---|---|---|

| GPT-3.5 Turbo | $0.50 | $1.50 | Entry |

| Gemini 1.5 Pro | $7.00 | $21.00 | Mid-tier |

| GPT-4 Turbo | $10.00 | $30.00 | Premium |

| Claude 3 Opus | $15.00 | $75.00 | Premium |

| Mixtral 8x22B | $0.60 | $1.80 | Open-source (MoE) |

Notice the gap between input and output costs? Output is almost always more expensive because generating text takes more compute than reading it. A single long-form article generation can cost 5x more than a simple question answer.

Why Token Budgets Are Your New Best Friend

Without limits, LLMs can run wild. A poorly written prompt might ask for a 10-page report when you only need a summary. The model doesn’t know you’re on a budget. It just keeps going-until it hits the max token limit or burns through your cash.

Token budgeting means setting hard limits on how many tokens each request can use. For example:

- Customer service chat: max 500 tokens total (input + output)

- Product description generator: max 200 output tokens

- Internal report summarizer: max 150 input tokens

Companies that use this approach see 15-25% lower costs just from stopping waste. Shopify merchants using Mixtral 8x7B with token caps cut their AI costs from $3.20 per customer interaction to $0.80. That’s an 80% drop.

Model Cascading: Use the Right Tool for the Job

You don’t need GPT-4 Turbo to answer "What are your hours?" You need something cheaper. That’s where model cascading comes in.

Think of it like a funnel:

- First, try a lightweight model like Claude Haiku or GPT-3.5 Turbo. It’s fast and cheap.

- If the request is complex-like analyzing financial data or writing a legal clause-then route it to GPT-4 or Claude Opus.

- Most requests (65-75%) can be handled by entry-level models without any loss in user satisfaction.

This technique is used by 70% of cost-optimized deployments, according to GetMonetizely’s 2025 case study. One healthcare startup reduced costs by 47% by routing 70% of queries to Haiku and only sending 30% to Opus. User feedback scores stayed the same.

Cache What You Can

How many times do customers ask the same question? Ten? A hundred? A thousand? Every time you answer it with a fresh LLM call, you pay again.

Caching stores the most common responses-like FAQs, product specs, or policy summaries-and serves them directly without calling the AI. This cuts costs dramatically.

A Reddit user from a healthcare startup reported a 63% cache hit rate on routine questions. That meant they paid for only 37% of their total requests. The rest were free.

Use tools like Redis or even simple in-memory stores. Set TTLs (time-to-live) to refresh cached content every few hours or days. Don’t over-cache dynamic data-but do cache the boring stuff.

Watch Out for Hidden Costs

Token costs aren’t the only thing that adds up.

- Prompt inflation: Vague prompts like "Tell me everything about our product" can use 30-50% more tokens than focused ones. Always be specific.

- Verbose error messages: If your system logs every LLM error in full, you’re paying for those tokens too. Trim logs to essentials.

- Recursive agents: Some systems have AI agents calling other AI agents. One request can spawn five. This is a budget killer. Track chain depth.

- High temperature settings: Setting temperature to 0.8 or higher makes outputs longer and more creative. Use 0.2-0.5 for consistent, shorter replies.

These aren’t edge cases. They’re everyday problems. 62% of negative reviews on LLM platforms mention "unexpected costs"-and most trace back to poor prompt design or unchecked output length.

Subscription vs. Pay-as-You-Go

Some providers now offer subscription plans:

- Anthropic’s Team plan: $25/month for 1 million tokens

- OpenAI’s Enterprise: $50,000/month for 2 billion tokens

Subscriptions work best if your usage is steady. If you’re doing 1 million tokens a month, a $25 plan beats paying $1,000 in pay-as-you-go. But if your usage spikes unpredictably-like during a product launch-you’ll waste money on unused tokens.

Hybrid models are the smartest. Use subscriptions for baseline usage, then switch to pay-as-you-go for overflow. Most enterprise teams use this approach.

Specialized Models Cost More-And That’s Okay

Not all models are created equal. BloombergGPT charges 3.2x more than general models because it’s trained on financial data. Med-PaLM 2 costs 4.7x more because it’s vetted for medical accuracy.

These aren’t overcharges. They’re premiums for reliability. If you’re analyzing SEC filings or diagnosing symptoms, you need these models. But don’t use them for routine tasks.

Keep specialized models locked to specific workflows. Don’t let marketing use Med-PaLM to write blog posts. It’s like using a Ferrari to haul groceries.

How to Start Optimizing Today

You don’t need a team of engineers to get started. Here’s your 3-step plan:

- Measure: Use your provider’s dashboard (OpenAI, Anthropic, Google) to see your current token usage. Find your top 5 most-used prompts.

- Cap: Set hard token limits on every API call. Start with 500 tokens for chat, 200 for summaries.

- Test: Run A/B tests. Compare output quality between GPT-3.5 and GPT-4 on real user queries. You might be surprised how often the cheaper model wins.

Companies that follow this approach see ROI improvements of 3.2x within 90 days, according to GetMonetizely. That’s not theory. It’s what real teams are doing.

What’s Coming Next

Cost management is evolving fast. In 2026, providers are testing:

- Cost-per-quality: Pay based on accuracy, not just tokens. Higher quality = higher cost.

- Per-action pricing: $15 per contract reviewed, $0.10 per email classified. No tokens involved.

- Hardware acceleration: Groq’s LPU can process 500 tokens per second at $0.20 per million tokens. That’s 10x cheaper than current cloud models.

But here’s the truth: the biggest gains aren’t in waiting for cheaper tech. They’re in using what’s already here-smarter.

How many tokens are in a typical chat response?

A simple answer like "Our store is open from 9 AM to 6 PM" uses about 20-30 tokens. A detailed response with examples might use 150-300 tokens. For context, a 100-word paragraph is roughly 130 tokens. Always test your prompts with a token counter tool before going live.

Can I use open-source models to save money?

Yes. Models like Mixtral 8x7B or Llama 3 70B can reduce costs by 30-50% compared to proprietary APIs, especially if you host them yourself. But you’ll need infrastructure. Tools like vLLM help optimize inference speed. Open-source isn’t free-it shifts cost from pay-per-token to hardware and maintenance.

Why is output more expensive than input?

Generating text requires more computation than reading it. The model has to predict each word step by step, checking probabilities, avoiding repetition, and maintaining coherence. Reading a prompt is just processing existing data. That’s why output tokens cost 2-5x more than input tokens across all major providers.

Should I use subscription plans or pay-per-token?

It depends on usage. If your monthly token usage is steady (e.g., 1-5 million tokens), a subscription saves money. If usage spikes unpredictably (e.g., seasonal sales, product launches), pay-per-token gives flexibility. Many teams use both: subscription for baseline, pay-per-token for overflow.

What’s the biggest mistake companies make with LLM costs?

They treat LLMs like magic boxes and don’t monitor usage. The biggest cost killer is uncontrolled prompts-vague, repetitive, or overly long. Without token limits, caching, or model routing, costs spiral. The fix isn’t more money. It’s better design.

LLM costs are going down-but only if you’re managing them. The next step? Start tracking your tokens today. Set one limit. Test one model. Cut one unnecessary call. That’s how real savings begin.

Okay but like… have you SEEN the prompts people are just… throwing at these models? I swear, half the time it’s like ‘tell me everything about our product’ and then they’re shocked when the bill hits $2k. 😭 I had a client who asked for a ‘detailed marketing strategy’ and got a 3000-token essay on the history of advertising. We capped it at 300. Saved us $12k in three weeks. Also-why is everyone still using GPT-4 for FAQs??? It’s like using a diamond-studded hammer to hang a picture. 🤦♀️

I just… I don’t understand why companies don’t just… set limits. It’s not that hard. I’ve seen teams spend more on AI than on their actual HR department. And then they wonder why they’re losing money. It’s not the model’s fault. It’s the people. Just… be responsible. Please.

Canada’s got Mixtral. USA’s still paying $30/million for GPT-4. We win.

so like… i read this whole thing and all i got was ‘set limits’ and ‘use cheaper models’… but what if your boss says ‘but we need the fanciest one’?? 🤡 i’ve been there. we did a ‘GPT-4 for everything’ phase. lasted 2 weeks. now we use haiku for emails and only call in opus when someone asks ‘is this legal?’… and even then, we charge them a coffee fund fee. 😘

Let’s be real-token budgets? Please. The real issue is that 90% of companies don’t even know what a token IS. I saw a ‘tech team’ in Toronto who thought ‘tokens’ were like loyalty points. They tried to ‘earn’ tokens by writing nice prompts. 🤦♂️ And then there’s the ‘recursive agent’ horror stories. One guy had an AI that called another AI that called a third AI that called a fourth… to answer ‘what’s our return policy?’ The final cost? $47.23. For THREE WORDS. That’s not innovation. That’s a cry for help.

Also-caching? Of course you cache. But you also need to audit it. I had a client who cached a product page from 2022. Customers kept asking ‘why is your website saying we don’t ship to Alberta?’ Turns out, the cached response said ‘we don’t ship to Canada.’ We fixed it. Saved $800/month. And now I’m banned from their Slack. Good job, team.

And don’t get me started on ‘subscription plans.’ You pay $25/month for 1M tokens? What if you use 1.1M? You’re still paying $25. But if you use 2M? Suddenly you’re paying $2000. That’s not a plan. That’s a trap. Pay-as-you-go is the only sane option unless you have a crystal ball and a time machine.

Also-why is no one talking about prompt inflation? People write prompts like they’re writing novels. ‘Tell me everything about our product, including its history, the founder’s childhood, the manufacturing process, competitor analysis, and a haiku about customer satisfaction.’ NO. JUST NO. Use bullet points. Be. Specific. It’s not hard.

And temperature? 0.8? Are you trying to write poetry or answer a customer? Use 0.3. Save tokens. Save money. Save your sanity.

This isn’t rocket science. It’s basic math. But everyone acts like it’s alchemy. We’re not wizards. We’re accountants with APIs.

Caching works. Set limits. Use Mixtral. Done.

There’s a deeper truth here that no one wants to admit: we’re not managing costs-we’re managing our fear of being replaced. We throw GPT-4 at every problem because we’re terrified that if we use something cheaper, the AI won’t be ‘smart enough.’ But the data shows otherwise. The cheapest model often performs better because it’s focused. It doesn’t hallucinate. It doesn’t over-explain. It just answers.

And yes-open-source models are the future. But not because they’re cheaper. Because they’re ours. We control them. We audit them. We don’t have to beg a Silicon Valley corporation for API access. We don’t have to pray their pricing doesn’t change tomorrow.

There’s dignity in self-reliance. And there’s power in knowing your infrastructure. Hosting Mixtral on your own server isn’t a technical challenge. It’s a philosophical stance. It says: ‘I don’t need permission to be efficient.’

But most companies? They’d rather pay $30 per million tokens than learn how to run a Linux box. That’s not a cost problem. That’s a courage problem.