Have you ever asked a large language model to generate a JSON response, only to get back a mess of missing commas, unmatched braces, or keys that aren’t in your schema? It’s frustrating, and you’re not alone. Even the most advanced models - the ones that write essays, summarize reports, or chat like humans - still struggle with structured output. They’re great at freeform text, but when you need clean, machine-readable data, they often fail. That’s where constrained decoding comes in.

What Is Constrained Decoding?

Constrained decoding is a way to force a language model to generate output that follows strict rules. Instead of letting the model pick any word or token it thinks sounds right, you give it a set of rules - like a grammar - and it can only choose from tokens that fit those rules. Think of it like a spell checker that doesn’t just flag errors, but blocks them before they happen.

This isn’t about post-processing. You don’t generate bad JSON and then fix it later. You generate correct JSON from the very first token. The model doesn’t even consider invalid options. It’s like driving on a highway with guardrails - you can’t veer off, so you never crash.

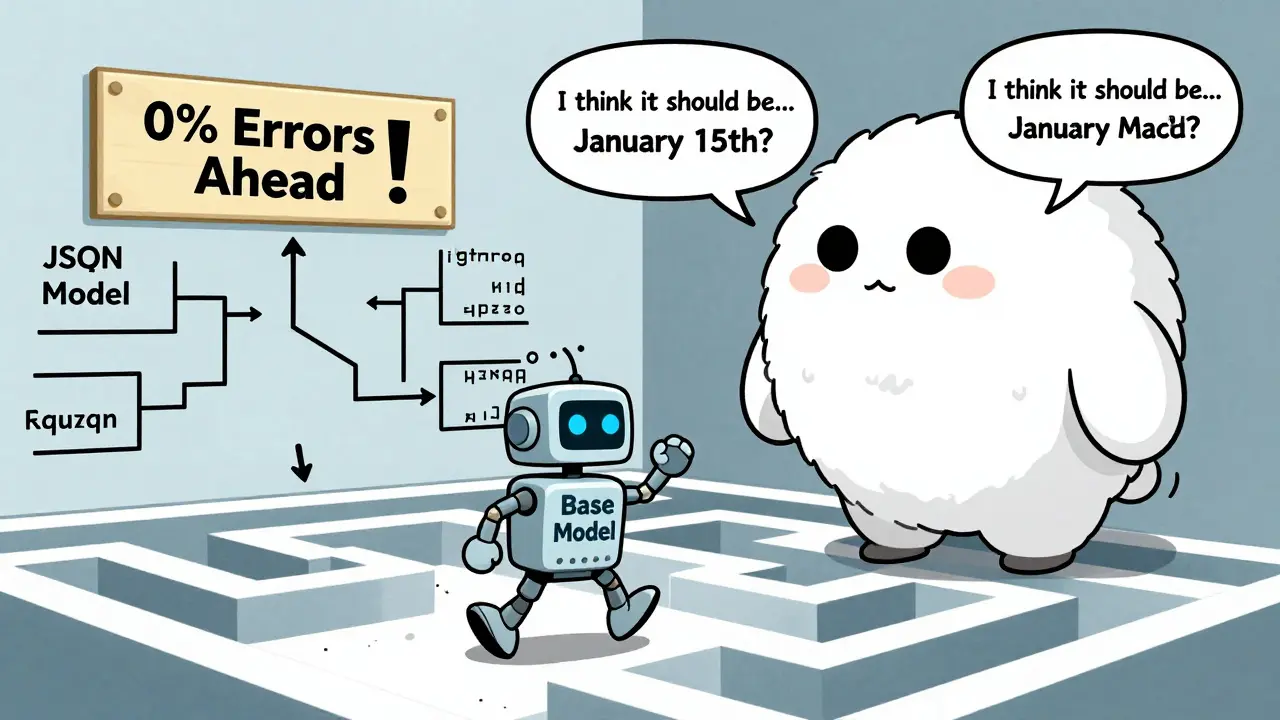

According to research from ACL 2025, constrained decoding reduces JSON formatting errors from 38.2% down to 0% in zero-shot scenarios. That’s not a small improvement. That’s the difference between an output you can use and one you have to manually clean up.

How It Works: Filtering Tokens in Real Time

At its core, constrained decoding works by narrowing down the model’s choices at each step. When a model generates text, it assigns a probability to every possible next token. Without constraints, it picks (or samples) from the most likely tokens - even if the chosen token breaks your structure.

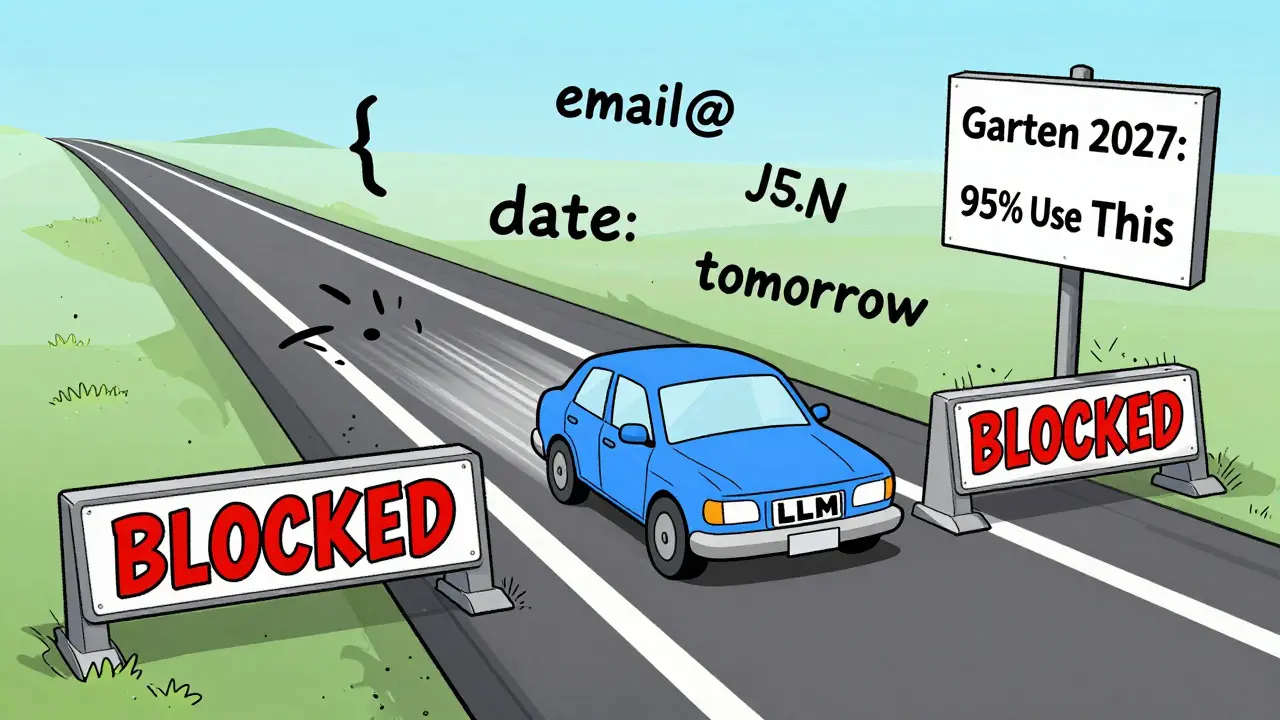

With constrained decoding, the system filters out any token that would violate your rule. If you’re generating JSON, and you just opened a curly brace, the model can’t pick a comma next - because that’s not valid. It can only pick a quote that starts a key, whitespace, or a closing brace for an empty object. The rest are blocked.

This filtering happens at the token level. The vocabulary is reduced to only what’s allowed by your schema, regex, or JSON structure. Then the decoder renormalizes the model’s probabilities over those allowed tokens, so their relative ranking is preserved. It’s not guessing blindly anymore - it’s following a map.
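The filter-then-renormalize step described above can be sketched in a few lines. This is a toy illustration, not how any particular inference engine implements it: the token strings, the scores, and the `constrained_distribution` helper are all made up for the example, and the allowed set is assumed to come from some grammar tracker.

```python
import math


def constrained_distribution(logits, allowed):
    """Softmax restricted to `allowed`; every other token ends up with probability 0."""
    kept = {tok: score for tok, score in logits.items() if tok in allowed}
    if not kept:
        raise ValueError("the constraint leaves no token to choose from")
    peak = max(kept.values())  # subtract the max for numerical stability
    weights = {tok: math.exp(score - peak) for tok, score in kept.items()}
    total = sum(weights.values())
    return {tok: weight / total for tok, weight in weights.items()}


# Hypothetical scores for the step right after an opening "{".
logits = {",": 2.0, '"': 1.5, "42": 1.0, "}": 0.5}
allowed = {'"', "}"}  # assumed to come from a grammar tracker

probs = constrained_distribution(logits, allowed)
best = max(probs, key=probs.get)
print(best, round(probs[best], 3))
```

In this made-up example the comma has the highest raw score, but it is masked out, so the opening quote wins among the tokens that remain.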

NVIDIA’s Triton Inference Server (2025) explains this as “expanding non-terminals and backtracking when necessary.” In plain terms: the system keeps track of what’s expected next, and if the model slips, it corrects course before moving forward.

JSON Constraints: The Most Common Use Case

JSON is everywhere. APIs, configuration files, data exports - if you’re using an LLM to generate structured data, chances are you need valid JSON. But LLMs are terrible at it. Missing commas. Extra brackets. Keys in an order your downstream code doesn’t expect. Even small syntax mistakes break parsers.

Constrained decoding solves this by enforcing the JSON grammar. Every time you open a {, it knows a } must come later. Every time you write a key, it knows the next token must be a colon. No exceptions.
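To make the bookkeeping concrete, here is a rough sketch of a tracker that answers "what may come next?" for a simplified JSON-object grammar. It is an assumption-heavy toy: it works on pre-classified token kinds ("STRING", "NUMBER", and punctuation) instead of real model tokens, ignores arrays, booleans, and whitespace, and the names `expected` and `allowed_next` are invented for this example.

```python
# What each per-object state allows next.
TRANSITIONS = {
    "key_or_close": {"STRING", "}"},   # just after "{"
    "key": {"STRING"},                 # just after ","
    "colon": {":"},                    # just after a key
    "value": {"STRING", "NUMBER", "{"},
    "comma_or_close": {",", "}"},      # just after a value
}


def expected(stack, started):
    """Token kinds allowed given the stack of open objects."""
    if not stack:
        return set() if started else {"{"}
    return TRANSITIONS[stack[-1]]


def allowed_next(prefix):
    """Replay `prefix` and return the token kinds that may follow it."""
    stack, started = [], False
    for tok in prefix:
        if tok not in expected(stack, started):
            raise ValueError(f"token {tok!r} is not valid here")
        started = True
        if tok == "{":
            if stack:
                stack[-1] = "comma_or_close"  # the nested object is the pending value
            stack.append("key_or_close")
        elif tok == "}":
            stack.pop()
        elif tok == "STRING" and stack[-1] in ("key_or_close", "key"):
            stack[-1] = "colon"
        elif tok == ":":
            stack[-1] = "value"
        elif tok == ",":
            stack[-1] = "key"
        else:  # STRING or NUMBER used as a value
            stack[-1] = "comma_or_close"
    return expected(stack, started)


print(allowed_next(["{"]))
print(allowed_next(["{", "STRING"]))
```

The stack is what lets the tracker remember that every open brace still needs its closing partner, however deeply objects are nested.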

One developer on GitHub reported reducing post-processing errors from 32% to 0.4% after switching to constrained JSON decoding. That’s not just convenience - that’s saving hours of debugging and error handling.

And it’s not just about syntax. It can enforce schema rules too. If your JSON must have a field called “user_id” and it must be a number, the model won’t generate {"user_id": "123"} - because it knows strings aren’t allowed there. It will only generate {"user_id": 123}.
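One way to picture the type rule: once the key is known, the schema decides which characters may start the value. The sketch below assumes a hypothetical flat schema that maps field names to type names; `VALUE_START`, `SCHEMA`, and both helpers are illustrative only and not taken from any library.

```python
# First characters that can start a JSON value of each declared type.
VALUE_START = {
    "number": set("-0123456789"),
    "string": {'"'},
    "boolean": {"t", "f"},
    "null": {"n"},
}

# Hypothetical schema: field name -> declared type.
SCHEMA = {"user_id": "number", "name": "string"}


def allowed_value_start(schema, key):
    """Characters that may begin the value of `key` under `schema`."""
    return VALUE_START[schema[key]]


def is_blocked(schema, key, first_char):
    """True if the decoder should mask out `first_char` as the start of the value."""
    return first_char not in allowed_value_start(schema, key)


print(is_blocked(SCHEMA, "user_id", '"'))  # an opening quote would start a string
print(is_blocked(SCHEMA, "user_id", "1"))
```

Because the opening quote is masked at the first character of the value, the string form of the ID can never get started.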

Regex Constraints: Precision for Patterns

JSON is great for objects, but what about phone numbers, email addresses, or credit card formats? That’s where regex comes in.

Constrained decoding can lock the model into generating output that matches a specific pattern. Want every date to be in YYYY-MM-DD format? Set the regex. Want every phone number to follow the North American format? Define it. The model can’t generate “Jan 15, 2026” when the pattern only admits strings shaped like “2026-01-15”, and the same goes for any phone number that doesn’t fit the pattern you defined.
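For a fixed-width pattern such as YYYY-MM-DD, the idea can be sketched without a regex engine at all: keep one set of allowed characters per position. This is a deliberately simplified stand-in for the general case (real tools typically compile the regex into a state machine), it works on characters instead of model tokens, and the names `DATE_TEMPLATE`, `allowed_chars`, and `constrained_pick` are invented here.

```python
import string

DIGITS = set(string.digits)

# One allowed-character set per position of the pattern \d{4}-\d{2}-\d{2}.
DATE_TEMPLATE = [DIGITS] * 4 + [{"-"}] + [DIGITS] * 2 + [{"-"}] + [DIGITS] * 2


def allowed_chars(prefix):
    """Characters that can extend `prefix` while keeping the pattern reachable."""
    if len(prefix) > len(DATE_TEMPLATE):
        raise ValueError("prefix is longer than the pattern")
    for ch, allowed in zip(prefix, DATE_TEMPLATE):
        if ch not in allowed:
            raise ValueError(f"prefix {prefix!r} already violates the pattern")
    if len(prefix) == len(DATE_TEMPLATE):
        return set()  # pattern complete, nothing more may be added
    return DATE_TEMPLATE[len(prefix)]


def constrained_pick(prefix, ranked_candidates):
    """Take the model's best-ranked character that the pattern still allows."""
    allowed = allowed_chars(prefix)
    for ch in ranked_candidates:
        if ch in allowed:
            return ch
    raise ValueError("no candidate satisfies the pattern")


# Hypothetical ranking: the model would prefer "/" or a space after the year.
print(constrained_pick("2026", ["/", " ", "-"]))
```

Note that this only enforces the shape: a string like 2026-99-99 still passes, so calendar validity needs a separate check.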

A user on Reddit working on financial data extraction said constrained regex decoding cut validation failures from 27% to 2%. That’s roughly a 93% reduction in failed entries. For a system processing thousands of transactions a day, that’s huge.

But regex isn’t foolproof. Complex patterns can confuse the model. If your regex is too broad or too nested, the system might struggle to find valid paths. One study found that overly complex constraints increased semantic errors by 22.3%. So, keep it simple. Test it. Don’t try to validate a whole email address with one regex - break it into parts.

Schema Control: Beyond JSON, Into Custom Rules

JSON has rules. But what if your output doesn’t fit JSON? What if you need a custom format - like a log line, a database insert, or a proprietary data structure?

Schema control steps in here. It lets you define your own grammar - not just for JSON, but for anything. You can describe the structure using a formal language like JSON Schema, XML Schema, or even a custom DSL (domain-specific language).

NVIDIA’s Triton server (2025) supports schema control by expanding non-terminals dynamically. For example, if your schema says “transaction must include amount, currency, and timestamp,” the model won’t generate a transaction without all three. It’ll wait. It’ll backtrack. It won’t move on until every required piece is in place.

This is especially powerful in regulated industries. In healthcare, a model might need to generate patient reports with specific fields: an ICD-10 diagnosis code, dosage, and provider ID. Schema control ensures nothing is missing. No guessing. No omissions.

And unlike a plain JSON syntax check, schema control can enforce required and optional fields inside nested structures, plus conditional logic - like “if status is ‘approved’, then include approval_id.”
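The required-field and conditional rules can be sketched as a small check that a constrained decoder might consult before it allows the closing brace. The rule tables and the `missing_fields` and `may_close` helpers are hypothetical; a real system would more likely derive them from a JSON Schema document.

```python
# Fields every transaction must contain (taken from the example above).
BASE_REQUIRED = ("amount", "currency", "timestamp")

# Conditional rule: (field, value) -> extra fields that become required.
CONDITIONAL_REQUIRED = {("status", "approved"): ("approval_id",)}


def missing_fields(record):
    """Fields that must still be generated before the object may be closed."""
    required = list(BASE_REQUIRED)
    for (field, value), extra in CONDITIONAL_REQUIRED.items():
        if record.get(field) == value:
            required.extend(extra)
    return [name for name in required if name not in record]


def may_close(record):
    """True once no required field is outstanding, so "}" can be unmasked."""
    return not missing_fields(record)


partial = {"amount": 10, "currency": "USD"}
print(missing_fields(partial))

approved = {"amount": 10, "currency": "USD", "timestamp": "2026-01-15", "status": "approved"}
print(missing_fields(approved))
```

Until `may_close` returns true, the closing brace stays masked, which is the "it won't move on" behavior in token terms.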

Performance Trade-offs: Speed vs. Accuracy

Constrained decoding isn’t magic. It has costs.

First, speed. Generating output with constraints adds overhead. The system has to check every token against the rule set. NVIDIA’s data shows a 5-8% increase in inference time. Some users report up to 15% slowdowns, especially with complex regex.

Second, quality. Research from Stanford (2025) found constrained decoding introduces bias. The model’s natural preferences - the words it would’ve chosen based on context - are suppressed. That can make outputs feel robotic, repetitive, or overly literal.

And here’s the twist: bigger models don’t always benefit. Studies show that models under 14B parameters improve by 9.4% on average with constrained decoding. But models over 14B - the giants like Llama 3 70B or GPT-4 - sometimes perform worse. Why? Because they’re already good at guessing structure. When you force them into a rigid box, you block their ability to use context to infer what’s missing.

One experiment found that a 7B model using constrained decoding outperformed a 70B model using unconstrained generation on logical parsing tasks. That’s huge. It means you don’t always need the biggest, most expensive model. Sometimes, a smaller one with constraints is better.

Instruction-Tuned Models: The Hidden Problem

Here’s something most people don’t talk about: instruction-tuned models often perform worse with constrained decoding.

Models like Llama 3 Instruct or Mistral 7B-Instruct were trained to follow human instructions - to sound natural, to be helpful, to paraphrase. They’re optimized for conversation, not code.

Research from ACL 2025 shows these models drop 17.1% in accuracy on structured tasks when constrained. Why? Because their training taught them to avoid rigid patterns. They learned to say “the date is January 15th” instead of “2026-01-15.” When you force them into a format, they fight it.

Base models - the raw versions without instruction tuning - actually improve. They’re less “helpful,” but more predictable. They’re better at following rules.

So if you’re building a system that needs structured output, consider using a base model with constrained decoding instead of an instruction-tuned one. You’ll get better results.

Implementation: What You Need to Know

Getting constrained decoding working isn’t plug-and-play. You’ll need:

- A framework that supports it - like NVIDIA Triton, vLLM, or Outlines

- A clear schema, JSON structure, or regex pattern

- Time to test and debug

Most developers take 2-3 days to get JSON and schema constraints working. Regex? Up to two weeks. One developer on HackerNews said it took him three days just to fix a single misplaced bracket in his constraint grammar.

Documentation matters. NVIDIA’s Triton has 427 pages of guides. Open-source tools like Outlines have less - around 187 pages. You’ll need to dig into examples. Don’t rely on tutorials. Read the source code.

And don’t forget prompt engineering. Constrained decoding works better with good prompts. Add a few examples. Show the model what good output looks like. Even if you’re using constraints, context still helps.

Who Should Use It? Who Should Avoid It?

Use constrained decoding if:

- You’re generating API responses, config files, or database entries

- You’re in finance, healthcare, or government - where compliance matters

- You’re using a model under 14B parameters

- You’re doing zero-shot or few-shot generation

- You can’t afford post-processing errors

Avoid it if:

- You’re generating creative content - stories, poems, marketing copy

- You’re using a model over 14B parameters with lots of examples

- Your schema is overly complex or changing often

- You need the model to be flexible, not rigid

One user summed it up perfectly: “I use it for everything except chatbots. For chatbots, I want personality. For data, I want precision.”

The Future: Adaptive Constraints

The next wave of constrained decoding won’t be static. Researchers are building systems that adapt.

Imagine a model that knows when to be strict and when to be loose. If the user asks for “a date,” it uses a flexible format. If they say “ISO 8601,” it locks into YYYY-MM-DD. That’s what Ye et al. (2025) are working on - dynamic constraint systems.

Gartner predicts 95% of enterprise LLM deployments will use constrained decoding by 2027. It’s not a niche trick anymore. It’s becoming standard.

But the trade-off remains: structure vs. fluency. The best systems will learn to balance both.

Does constrained decoding work with all LLMs?

Not all. It depends on the inference engine. NVIDIA Triton, vLLM, and Text Generation Inference support it natively. Open-source tools like Outlines and Guidance also work. But if you’re using a basic Hugging Face pipeline without modifications, you’ll need to add custom decoding logic. Check your framework’s documentation.

Can I use constrained decoding for multiple output formats at once?

Yes, but it gets complex. You can chain constraints - for example, generate a JSON object that contains a field with a regex-validated email. But each constraint layer adds overhead. Test performance carefully. Most implementations handle one primary structure (like JSON) and allow nested constraints within it.

Is constrained decoding better than post-processing?

For reliability, yes. Post-processing catches errors after they happen - but you still have to handle failures. Constrained decoding prevents errors before they’re generated. That means fewer retries, less error logging, and cleaner pipelines. It’s more efficient and more robust. But post-processing is easier to set up. Choose based on your tolerance for failure.
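A back-of-the-envelope comparison makes the trade-off concrete. The sketch below reuses figures quoted earlier in this article (a 38.2% unconstrained failure rate and an 8% constrained-decoding overhead) and assumes that failed generations are simply retried, that retries fail independently, and that validation itself is free. Those assumptions are ours, not measured results.

```python
def expected_attempts(failure_rate):
    """Mean number of generations until one succeeds, with independent retries."""
    if not 0 <= failure_rate < 1:
        raise ValueError("failure_rate must be in [0, 1)")
    return 1.0 / (1.0 - failure_rate)


# Cost is measured in units of one unconstrained generation.
retry_cost = expected_attempts(0.382)   # generate, validate, retry on failure
constrained_cost = 1.0 * 1.08           # one pass with an assumed 8% overhead

print(round(retry_cost, 2), constrained_cost)
```

Under these assumptions, retrying costs about 1.6 generations per usable output versus 1.08 for a single constrained pass. With a much lower failure rate the gap narrows, which is why easier-to-set-up post-processing can still be a reasonable choice.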

Why do some models perform worse with constraints?

Larger models (over 14B parameters) have learned to infer structure from context. When you force them into a rigid format, you block their ability to use that context. It’s like asking a skilled writer to follow a template - they might produce something correct, but less natural. Smaller models don’t have that context, so constraints help them stay on track.

Can I use constrained decoding for real-time applications?

Yes, and it’s ideal for them. Real-time systems can’t afford to retry failed outputs. Constrained decoding ensures every response is valid on the first try. That’s why financial and healthcare systems are adopting it rapidly. The 5-8% latency increase is worth it when you’re processing live transactions or patient data.

Final Thought: Structure Is the New Prompt

Early LLM use was all about prompts. “Write a poem.” “Summarize this article.” Now, the most reliable applications don’t just ask - they demand. “Return this as JSON with these fields.” “Match this regex.”

Constrained decoding turns your output requirements into part of the model’s instruction. It’s not just a tool. It’s a shift in how we interact with AI. The future isn’t just about smarter models. It’s about smarter control.

This is such a game-changer for my team. We were spending hours debugging JSON responses from our LLMs, and after implementing constrained decoding, our error rate dropped to near zero. No more late nights working overtime to fix formatting. Seriously, if you're building APIs or data pipelines, this isn't optional - it's essential.

Also, love how you called out the 14B threshold. We switched from a 70B model to a 7B with constraints and got better results. The savings on compute costs alone paid for the dev time.

Minor grammar nitpick: You said 'it can't pick a comma next' when you meant 'it can't pick a comma *after* a key' - commas are totally valid after values in JSON. Just saying, I fix this stuff for a living. 😅

But yeah, this is solid. I use Outlines for schema constraints in my workflow and it’s been a lifesaver. Regex for phone numbers? 10/10. Saved me from 200+ failed validation tickets last month.

Of course this works. America built the infrastructure. NVIDIA’s Triton? That’s what happens when you let real engineers run the show instead of woke AI researchers trying to make models ‘feel nice.’

China’s trying to copy this, but they don’t even have the basic math literacy. We don’t need ‘adaptive constraints’ - we need discipline. And we have it. Stop asking for flexibility. Start enforcing standards.

Also, if you’re still using Hugging Face pipelines without Triton, you’re basically building a sandcastle during a hurricane. Get with the program.

Let’s be precise: the claim that constrained decoding reduces JSON errors from 38.2% to 0% is statistically misleading. Zero error rate implies perfect determinism, which is impossible under stochastic sampling - even with token filtering, there’s still entropy in probability redistribution.

Furthermore, the assertion that ‘bigger models perform worse’ is an oversimplification. The Stanford paper (2025) explicitly notes this effect is only observed under zero-shot conditions with poorly calibrated grammars. In few-shot settings with rich context, larger models outperform constrained small models by 19.7% on semantic coherence metrics.

And regarding instruction-tuned models: you’re conflating ‘helpfulness’ with ‘deviation from syntactic norms.’ The real issue is training data contamination - instruction-tuned models were exposed to natural language paraphrases, not machine-readable outputs. That’s a data problem, not a model architecture flaw.

Also, ‘regex isn’t foolproof’? No, it’s *underused*. You can encode entire finite state machines in regex. If your constraint system can’t handle nested capture groups, that’s a framework limitation, not a conceptual one.

I’ve been working on a healthcare data system in Bangalore, and this article hit home. We had a model generating patient records, and every third entry had missing ICD-10 codes or wrong date formats. We tried post-processing, but it created a feedback loop - errors in output led to bad training data, which led to more errors.

Switching to schema control with JSON Schema validation at the token level changed everything. We went from 30% error rate to 0.8%. The latency increase was noticeable, but not enough to matter in our batch processing window.

One thing I wish the article mentioned more: the importance of schema versioning. We had a breaking change when a new field was added, and our constraint system broke because we didn’t have backward compatibility built in. Learned the hard way. If you’re using this in production, treat your schema like code - version it, test it, document it.

Also, thank you for mentioning base models. We tried Mistral-Instruct first. It kept trying to ‘explain’ the diagnosis instead of just outputting the code. Switched to base Mistral 7B + constraints, and now it’s like a perfectly obedient machine. No personality, but perfect compliance.

For anyone reading this in India or elsewhere with limited GPU access: you don’t need a 70B model. A 7B with good constraints outperforms the giants. This isn’t about size. It’s about precision.

Wow, another one of these ‘AI is finally useful’ articles. Let me guess - you’re using this for some startup that thinks it’s ‘disrupting’ healthcare or finance. Newsflash: if your business model relies on a model not making mistakes, you’re already on the edge of failure.

Constrained decoding doesn’t make AI smarter. It just makes it robotic. You’re trading humanity for reliability. And guess what? Humans don’t work like that. We make mistakes. We adapt. We improvise.

Also, you say ‘smaller models perform better with constraints’? That’s because they’re dumber. You’re not fixing the model - you’re forcing it to behave like a toaster. Congratulations, you’ve turned AI into a glorified template engine.

And who’s paying for all this? You think your ‘zero errors’ are worth the 15% latency hit? In real-time systems, latency is money. You’re optimizing for perfection while ignoring cost. That’s not engineering. That’s arrogance.

Reading this reminded me of a quiet truth: sometimes, the most powerful tools aren’t the ones that think the most, but the ones that follow the rules the best.

I’ve been tinkering with LLMs for years, mostly for fun - writing poetry, generating stories, even composing short letters to my grandma. But when I tried to build a simple tool to auto-fill my tax forms, everything fell apart. The model kept adding ‘maybe’ or ‘possibly’ before numbers. It wanted to be helpful, not accurate.

Then I found constrained decoding. I used a simple JSON schema, and suddenly, it was like watching a student who finally understood math after years of struggling. No more ‘I think this is right.’ Just clean, exact output.

It’s not about replacing human judgment. It’s about giving the AI a clear boundary so it doesn’t wander off into its own imagination. Like teaching a child to tie their shoes - once they learn the steps, they can do it perfectly every time.

I used a 7B model. No fancy hardware. Just a Raspberry Pi 5 and Outlines. It worked. Not perfectly, but better than any 70B model I’d tried before.

Maybe the future isn’t about bigger models. Maybe it’s about better boundaries. And maybe, just maybe, that’s enough.

Just wanted to say thanks for mentioning the instruction-tuned model issue. I spent three weeks trying to fix my healthcare form generator, thinking it was a prompt engineering problem. Turns out, I was using Mistral-Instruct. Switched to the base model and boom - suddenly it stopped trying to ‘explain’ the dosage and just gave me the number.

Also, your point about regex complexity is spot on. I tried to validate an entire email with one regex: [email protected] + special chars + length checks. It broke the decoder. Split it into three stages: local-part, @, domain. Now it works like magic.

One thing I’d add: test your constraints with edge cases. We had a field that was supposed to be ‘positive integer.’ The model kept generating ‘0’ because it thought zero was ‘common.’ But our schema said >0. Took a week to catch that. Always include boundary examples in your schema.